The relentless pursuit of artificial intelligence has driven an insatiable demand for computational power, yet the GPUs that supply it often go underutilized because the code running on them is not fully optimized. While large language models (LLMs) have captured headlines with their impressive capabilities, they also impose considerable resource requirements. Deploying these behemoths typically means relying on cloud infrastructure, which introduces risks related to data security and vendor lock-in. The computational cost of training and running LLMs is increasingly difficult to sustain, limiting broader accessibility and innovation within the AI community.

Current strategies for mitigating these challenges often rely on model distillation or quantization, but these techniques can sacrifice accuracy or complicate implementation. Furthermore, achieving high efficiency on GPU hardware requires careful attention to both architecture and algorithm design, a process in which meticulous CUDA optimization is critical for maximizing throughput and minimizing latency.

Small language models (SLMs) are emerging as a compelling alternative, promising competitive performance on targeted tasks with a drastically smaller computational footprint. They can be deployed locally or on edge devices, avoiding cloud dependencies and lowering operational costs. However, SLMs typically lack the reasoning depth of their larger counterparts, so they need further enhancement to unlock their full potential and compete effectively.

Introducing ReGraphT: a novel framework designed to boost the performance of SLMs on a demanding task, CUDA code optimization. Rather than rethinking the model’s architecture, ReGraphT transfers the reasoning abilities of larger LLMs to SLMs through a structured reasoning graph that the smaller model can search. We’ll explore how ReGraphT provides a pathway toward accessible, privacy-preserving, AI-assisted GPU programming.

The GPU Optimization Bottleneck

The pursuit of ever-increasing computational power has led us to rely heavily on GPUs, but unlocking their full potential remains a persistent challenge. NVIDIA’s CUDA platform made general-purpose GPU programming widely accessible, yet writing truly optimized CUDA code is far from straightforward. It is not simply a matter of porting sequential algorithms; it requires a deep understanding of GPU architecture (thread hierarchies, memory access patterns, shared memory utilization, and warp scheduling) to avoid performance bottlenecks. Existing tools and libraries help, but they often fall short, leaving developers wrestling with complex optimization issues that can significantly affect application speed.
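To make "memory access patterns" concrete, the sketch below counts how many memory segments a single 32-thread warp touches when lane i reads element `base_elem + i * stride` of a float array. It is a deliberately simplified model, assuming 128-byte transactions and ignoring caches and the 32-byte sectors real GPUs track; the function name `segments_touched` is hypothetical. Fewer segments per warp means fewer memory transactions, which is why unit-stride (coalesced) access is the usual goal.

```python
WARP_SIZE = 32       # threads per warp on NVIDIA GPUs
SEGMENT_BYTES = 128  # simplified transaction size (assumption; real hardware uses 32-byte sectors)


def segments_touched(stride_elems, elem_bytes=4, base_elem=0):
    """Count distinct memory segments one warp touches when lane i reads
    element base_elem + i * stride_elems of an array of elem_bytes-sized items."""
    byte_addresses = [
        (base_elem + lane * stride_elems) * elem_bytes for lane in range(WARP_SIZE)
    ]
    return len({addr // SEGMENT_BYTES for addr in byte_addresses})


for stride in (1, 2, 8, 32):
    print(f"stride {stride:>2}: {segments_touched(stride)} segment(s) per warp")

# A unit-stride read that starts mid-segment is misaligned and spills into a second segment.
print(segments_touched(1, base_elem=16))  # 2
```

Under this model, a stride of 32 floats needs 32 transactions to deliver the same 32 useful values that unit stride fetches in one.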

The core difficulty stems from the complexity of parallel programming itself. Unlike sequential code, where execution is linear and predictable, parallel code must coordinate thousands or even millions of concurrent threads. Subtle inefficiencies, such as misaligned or uncoalesced memory accesses or thread divergence (where threads within a warp take different execution paths), can compound into substantial performance degradation. Debugging these issues is notoriously difficult, often requiring specialized profiling tools and a detailed understanding of low-level hardware behavior. Without careful optimization, GPUs sit underutilized and fail to deliver the massive speedups they promise.
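Thread divergence can be illustrated with an equally simplified model of SIMT execution: when the lanes of a warp disagree on a branch, the warp runs both sides one after the other, with inactive lanes masked off. The sketch below uses hypothetical function names and made-up per-branch costs to count what one block pays for an if/else; it ignores independent thread scheduling and other details of real hardware.

```python
WARP_SIZE = 32


def warp_branch_cost(take_if, cost_if, cost_else):
    """Simplified SIMT model: a warp pays for every branch side that at
    least one of its lanes takes; the two sides run one after the other."""
    taken = [take_if(lane) for lane in range(WARP_SIZE)]
    cost = 0
    if any(taken):      # some lanes execute the if-side
        cost += cost_if
    if not all(taken):  # some lanes execute the else-side
        cost += cost_else
    return cost


def block_branch_cost(take_if, block_size, cost_if, cost_else):
    """Sum the cost over every warp in a block; take_if receives the thread index."""
    total = 0
    for warp_start in range(0, block_size, WARP_SIZE):
        total += warp_branch_cost(lambda lane: take_if(warp_start + lane), cost_if, cost_else)
    return total


# Branching on odd/even thread index diverges inside every warp...
print(block_branch_cost(lambda tid: tid % 2 == 0, 256, 10, 10))  # 160
# ...while branching on the warp index keeps every warp uniform.
print(block_branch_cost(lambda tid: (tid // WARP_SIZE) % 2 == 0, 256, 10, 10))  # 80
```

Both variants give each thread the same amount of useful work, yet in this model the warp-aligned branch costs half as many cycles.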

CUDA optimization isn’t just about squeezing out marginal gains; it is fundamentally tied to the progress of computing hardware overall. As CPUs run up against physical limits on clock speed and core-count scaling, GPUs have become a key avenue for boosting computational throughput. Efficient CUDA code keeps these powerful parallel processors working near peak efficiency across applications ranging from scientific simulations to machine learning training, driving progress in numerous fields.

The recent exploration of small language models (SLMs) to automate this optimization process is particularly exciting because it addresses both the resource demands and the security concerns associated with LLMs. Cloud-based code generation services are convenient, but they introduce the risk of intellectual property leakage, while local deployment of large models is resource intensive. SLMs offer a promising pathway toward efficient, privacy-preserving CUDA code generation, potentially democratizing access to GPU optimization expertise.

Why GPUs Still Need Help

Modern GPUs offer enormous computational power, but harnessing it requires careful optimization of the underlying code. Unlike CPUs, which are designed primarily for fast sequential processing, GPUs excel at massively parallel work, performing many calculations simultaneously. Exploiting them demands a shift to parallel programming paradigms, which are significantly more complex than writing serial code. Distributing work across thousands of GPU cores and managing memory access patterns become critical bottlenecks, often preventing applications from reaching their theoretical performance limits.
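Distributing work across thousands of cores usually starts with a simple mapping from thread coordinates to data indices. The sketch below simulates, in plain Python, two common CUDA idioms: sizing the grid by ceiling division, and walking the data with a grid-stride loop (`i = blockIdx.x * blockDim.x + threadIdx.x`, advancing by `blockDim.x * gridDim.x`). The helper names and the block size of 256 are illustrative choices, not requirements.

```python
def launch_config(n, block_size=256):
    """Return (grid_size, block_size) with enough threads to cover n elements."""
    grid_size = (n + block_size - 1) // block_size  # ceiling division
    return grid_size, block_size


def grid_stride_indices(block_idx, thread_idx, block_dim, grid_dim, n):
    """Indices one thread visits in a grid-stride loop, mirroring
    for (i = blockIdx.x * blockDim.x + threadIdx.x; i < n; i += blockDim.x * gridDim.x)."""
    start = block_idx * block_dim + thread_idx
    return range(start, n, block_dim * grid_dim)


def coverage_counts(n, block_dim, grid_dim):
    """How many times each element is processed across the whole grid."""
    counts = [0] * n
    for b in range(grid_dim):
        for t in range(block_dim):
            for i in grid_stride_indices(b, t, block_dim, grid_dim, n):
                counts[i] += 1
    return counts


print(launch_config(10_000))  # (40, 256): one thread per element
# A deliberately small grid of 8 blocks still covers every element exactly once.
print(all(c == 1 for c in coverage_counts(10_000, 256, 8)))  # True
```

The grid-stride form decouples the grid size from the problem size, which lets the same kernel be tuned for occupancy without changing its correctness.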

Existing tools and libraries, such as the CUDA toolkit, provide essential building blocks for GPU development, but they don’t solve the entire optimization puzzle. Writing efficient CUDA kernels (the functions that run on the GPU) requires a deep understanding of the hardware architecture, the memory hierarchy, and potential race conditions. Manual tuning is time-consuming and error-prone; even experienced developers struggle to extract maximum performance without extensive experimentation and profiling. Subtle inefficiencies in kernel design or host-device data transfer can lead to significant slowdowns.
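Race conditions are one reason manual tuning is error-prone. The toy model below, with hypothetical function names, replays one unlucky interleaving of a non-atomic `counter += 1` executed by many threads: every thread reads the shared value before any thread writes it back, so all but one update is lost. The second function models an atomic increment (the role `atomicAdd` plays in CUDA), where each read-modify-write is indivisible.

```python
def racy_increments(num_threads):
    """Worst-case interleaving of a non-atomic read-modify-write."""
    counter = 0
    # Phase 1: every thread loads the shared counter into a private register.
    registers = [counter for _ in range(num_threads)]
    # Phase 2: every thread stores register + 1; each store overwrites the last.
    for value in registers:
        counter = value + 1
    return counter


def atomic_increments(num_threads):
    """Each increment is one indivisible read-modify-write, like atomicAdd."""
    counter = 0
    for _ in range(num_threads):
        counter += 1
    return counter


print(racy_increments(32), atomic_increments(32))  # 1 32
```

Real schedules land anywhere between these two extremes, which is why such bugs tend to be intermittent and hard to reproduce.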

Ultimately, efficient CUDA code is paramount for maximizing GPU utilization and delivering the promised speedups. Whether the task is accelerating scientific simulations, powering real-time rendering in games, or training complex machine learning models, suboptimal CUDA code translates directly into wasted resources and lower overall system efficiency. Ongoing research into LLM-assisted code generation, particularly with smaller, more manageable models, highlights the persistent need for better tools to tackle this optimization challenge.

LLMs vs. SLMs in CUDA Code Generation

The rise of large language models (LLMs) has generated considerable excitement about automating tasks such as CUDA code generation, offering a potential leap forward in GPU utilization. LLMs show strong ability to translate sequential code into optimized CUDA kernels, drawing on patterns and efficient algorithmic implementations learned from their vast training data. However, using these behemoths in practice is fraught with challenges. Relying on cloud-based APIs for code generation introduces significant intellectual property risks: sensitive project details could be exposed, which hinders adoption at companies working with proprietary algorithms or confidential data.

Local deployment carries its own burden. Running and fine-tuning these models demands considerable resources (powerful hardware and specialized expertise) that smaller development teams and individual researchers often lack. This cost limits who can benefit from LLM code generation, creating a divide between those with access to advanced infrastructure and those without.

In response to these limitations, small language models (SLMs) have emerged as compelling alternatives. Their much smaller computational footprint makes local deployment on standard hardware practical, and local deployment in turn addresses privacy concerns: code stays entirely under the user’s control, eliminating the data leakage risk of cloud-based services. Recent research increasingly shows that carefully trained and fine-tuned SLMs can come surprisingly close to their larger counterparts, particularly on narrow, domain-specific tasks.

LLMs still hold an edge in broad reasoning, which is often crucial for complex CUDA optimizations across diverse applications, but the targeted efficiency of SLMs makes them a powerful tool. Training and deploying these models locally opens new avenues for accelerating GPU development, especially in resource-constrained environments or projects with stringent data security requirements. As SLM techniques advance, the performance gap is narrowing quickly, making them increasingly viable for CUDA optimization.

The Promise (and Problems) of Large Models

Large language models (LLMs) have emerged as a promising way to automate the generation of optimized CUDA code from higher-level sequential code. Their ability to recognize complex programming patterns and translate them into efficient parallel algorithms could accelerate GPU adoption, particularly in domains where expert CUDA programmers are scarce. Early experiments suggested that LLMs can produce CUDA kernels whose performance approaches, or even exceeds, that of manually written versions, hinting at a shift in how GPU-accelerated applications are developed.

However, two significant hurdles currently constrain the practical use of LLMs for CUDA code generation. The most immediate is data privacy and intellectual property protection. Using cloud-based APIs means transmitting source code to external servers, which raises serious risks of leakage or unauthorized access, a particular problem for projects involving proprietary algorithms or sensitive datasets.

The second hurdle is the computational cost of deploying LLMs locally. These models are typically enormous and require substantial resources (powerful GPUs, large amounts of memory) to run effectively, making local deployment prohibitively expensive for many developers. This has fueled growing interest in small language models (SLMs) as a more accessible, privacy-friendly alternative, with recent research showing that they can achieve comparable performance on targeted tasks despite their smaller size.

Introducing ReGraphT: Reasoning for Smaller Models

Reaching peak GPU performance often requires complex CUDA optimization, a challenging task even for seasoned developers. Large language models (LLMs) have emerged as promising tools for generating optimized CUDA code, but data privacy concerns with cloud-based APIs and the high computational cost of local deployment are significant roadblocks. This has fueled a surge of interest in small language models (SLMs) as a lighter, more private alternative. However, SLMs traditionally lack the robust reasoning capabilities that let LLMs tackle intricate CUDA optimization problems.

Enter ReGraphT, a novel approach designed to bridge this gap. Its core idea is to transfer the reasoning abilities of LLMs to SLMs without the computational cost or privacy risks of running the larger models. It does this by constructing a ‘reasoning graph’ that represents potential optimization strategies and then using Monte Carlo Graph Search (MCGS) to navigate that graph. In effect, the essence of an LLM’s reasoning process is distilled into a more manageable, searchable structure.

ReGraphT first identifies key states in the CUDA optimization landscape, such as different loop structures or memory access patterns. Each state transition represents a potential optimization action, such as vectorization or tiling. The result is a graph whose nodes are states and whose edges are the possible transitions between them. MCGS then acts as an intelligent explorer, iteratively sampling paths through this reasoning graph to discover sequences of optimizations that improve GPU performance. This lets the SLM effectively ‘reason’ about code transformations without the vast knowledge base of a full-scale LLM.
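To see why a transition such as tiling can pay off, consider the textbook case of multiplying two n × n matrices. The back-of-the-envelope count below, which ignores caches and assumes n is a multiple of the tile size T, compares global-memory loads for a naive kernel (each output reads a full row and a full column) with a shared-memory tiled kernel that loads each T × T tile once per block. It illustrates one possible edge in such a graph; it is not ReGraphT’s own cost model, and the function names are hypothetical.

```python
def naive_global_loads(n):
    """Each of the n*n outputs reads n values of A and n values of B."""
    return n * n * (2 * n)


def tiled_global_loads(n, tile):
    """Each tile x tile block loads one tile of A and one of B per phase,
    and needs n // tile phases to sweep the shared dimension."""
    if n % tile != 0:
        raise ValueError("n must be a multiple of tile in this simplified model")
    num_blocks = (n // tile) ** 2
    phases = n // tile
    loads_per_phase = 2 * tile * tile
    return num_blocks * phases * loads_per_phase


n, tile = 1024, 16
naive, tiled = naive_global_loads(n), tiled_global_loads(n, tile)
print(naive, tiled, naive // tiled)  # 2147483648 134217728 16
```

In this model the saving is exactly a factor of T (2n^3 loads versus 2n^3/T), which is why tiling is a staple CUDA optimization.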

Ultimately, ReGraphT offers a way to capture the benefits of LLMs for CUDA optimization while sidestepping the practical limitations of their size and resource demands. By transferring reasoning capabilities through structured graph search, it enables SLMs to achieve surprisingly effective results, opening up efficient GPU programming to a wider range of applications and environments.

How ReGraphT Works: A Deep Dive

ReGraphT addresses the challenge of enabling small language models (SLMs) to perform complex CUDA optimization tasks typically handled by larger models. At its core is a ‘reasoning graph’, a structured representation of potential CUDA kernel transformations and the dependencies between them. The graph is not generated by the SLM; instead, it is constructed from the reasoning of a larger language model (LLM). The LLM acts as a teacher, identifying optimization strategies relevant to specific CUDA patterns and encoding them as nodes and edges in the graph.
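One way to picture the teacher step is to merge several LLM-produced optimization trajectories into a single graph. In the hypothetical sketch below, a state is the set of optimizations applied so far (so trajectories that apply the same optimizations in a different order meet at the same node), and each edge records how many teacher trajectories took that action from that state. The action names and trajectories are invented for illustration; the paper’s actual graph construction may differ.

```python
def build_reasoning_graph(trajectories):
    """Merge action sequences into {state: {action: count}}, where a state is
    the frozenset of optimizations applied so far."""
    graph = {}
    for trajectory in trajectories:
        state = frozenset()  # the unoptimized kernel
        for action in trajectory:
            edges = graph.setdefault(state, {})
            edges[action] = edges.get(action, 0) + 1
            state = state | {action}  # frozenset union yields the next state
    return graph


# Hypothetical trajectories a teacher LLM might produce for similar kernels.
teacher_trajectories = [
    ["coalesce", "tile", "unroll"],
    ["tile", "coalesce"],
    ["coalesce", "tile"],
]

graph = build_reasoning_graph(teacher_trajectories)
for state, edges in graph.items():
    print(sorted(state), edges)
```

Keying states by the set of applied optimizations is a design choice made here for brevity; a richer system might key them by code features such as loop structure or memory layout.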

The reasoning graph models optimization as a series of ‘state transitions’ that describe how code can be modified step by step to improve performance. Each node corresponds to a state of the kernel and each edge to a modification that moves it to the next state (e.g., loop unrolling or a thread block size adjustment), so paths through the graph are sequences of modifications. The SLM then works with this predefined graph within its limited context window. Instead of devising an optimization plan from scratch, it navigates the graph, selecting which transformations to apply based on the current state of the CUDA kernel.
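Navigating from the current state can be sketched as looking up that state’s outgoing edges and picking one. In ReGraphT the SLM makes this choice; the hypothetical sketch below substitutes a fixed greedy rule (follow the transition that the most teacher trajectories used) purely to show the mechanics, on a small hand-written graph in state-to-actions form.

```python
# Hypothetical graph: state (optimizations applied so far) -> {action: teacher support}.
GRAPH = {
    frozenset(): {"coalesce": 2, "tile": 1},
    frozenset({"coalesce"}): {"tile": 2},
    frozenset({"tile"}): {"coalesce": 1},
    frozenset({"coalesce", "tile"}): {"unroll": 1},
}


def candidate_actions(state):
    """Actions available from the current state, best-supported first
    (ties broken alphabetically so the order is deterministic)."""
    edges = GRAPH.get(state, {})
    return sorted(edges, key=lambda action: (-edges[action], action))


def greedy_plan(start=frozenset()):
    """Follow the top-ranked transition until the graph offers no more."""
    state, plan = start, []
    while candidates := candidate_actions(state):
        action = candidates[0]
        plan.append(action)
        state = state | {action}
    return plan


print(candidate_actions(frozenset()))    # ['coalesce', 'tile']
print(greedy_plan())                     # ['coalesce', 'tile', 'unroll']
print(greedy_plan(frozenset({"tile"})))  # ['coalesce', 'unroll']
```

A greedy walk never reconsiders alternatives once it commits, which is the weakness a search procedure such as MCGS is meant to address.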

To explore the reasoning graph’s vast space of possibilities efficiently, ReGraphT employs Monte Carlo Graph Search (MCGS). MCGS lets the SLM sample optimization paths without exhaustively evaluating every option. This probabilistic search prioritizes promising sequences of transformations, mimicking the ‘reasoning’ of a larger LLM at a fraction of the computational cost. The result is an SLM-driven CUDA kernel optimization pipeline that balances performance and resource efficiency.
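The sketch below shows the general shape of Monte Carlo Graph Search in a toy setting: states are sets of applied optimizations, `reward` is a made-up stand-in for compiling and timing a kernel, and all names and constants are hypothetical. What distinguishes it from plain Monte Carlo tree search is that visit counts and values are keyed by state, so statistics are shared when different optimization orders reach the same kernel. UCT selection, short random rollouts, and path backpropagation are standard choices here, not necessarily the ones ReGraphT uses.

```python
import math
import random

ACTIONS = ("coalesce", "tile", "unroll", "vectorize")
# Made-up per-optimization speedups; a real system would compile and time kernels.
GAINS = {"coalesce": 1.8, "tile": 1.5, "unroll": 1.1, "vectorize": 1.2}


def successors(state):
    """Apply one more optimization; states only grow, so the graph is acyclic."""
    return [state | {a} for a in ACTIONS if a not in state]


def reward(state):
    """Hypothetical speedup of a kernel with these optimizations applied."""
    speedup = math.prod(GAINS[a] for a in state)
    if {"unroll", "vectorize"} <= state:
        speedup *= 0.7  # pretend these two interact badly (e.g. register pressure)
    return speedup


def mcgs(root=frozenset(), iterations=300, c=1.4, rollout_depth=2, seed=0):
    rng = random.Random(seed)
    visits, total = {}, {}  # statistics keyed by state, shared across paths
    best_state, best_reward = root, reward(root)

    def record(state):
        nonlocal best_state, best_reward
        r = reward(state)
        if r > best_reward:
            best_state, best_reward = state, r
        return r

    for _ in range(iterations):
        state, path = root, [root]
        # Selection / expansion: descend by UCT until reaching an unvisited child.
        while children := successors(state):
            unvisited = [s for s in children if s not in visits]
            if unvisited:
                state = rng.choice(unvisited)
                path.append(state)
                break
            n_parent = visits[state]
            state = max(children, key=lambda s: total[s] / visits[s]
                        + c * math.sqrt(math.log(n_parent) / visits[s]))
            path.append(state)
        # Simulation: short random rollout; its value is the best speedup seen on it.
        result = record(state)
        for _ in range(rollout_depth):
            nxt = successors(state)
            if not nxt:
                break
            state = rng.choice(nxt)
            result = max(result, record(state))
        # Backpropagation: update every state on the selected path.
        for s in path:
            visits[s] = visits.get(s, 0) + 1
            total[s] = total.get(s, 0.0) + result
    return best_state, best_reward


best, speedup = mcgs()
print(sorted(best), round(speedup, 2))
```

Because statistics live on states rather than on tree nodes, reaching {coalesce, tile} via either order updates the same counters, which is the main saving a graph search offers over a tree search on this kind of problem.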

Results and Future Directions

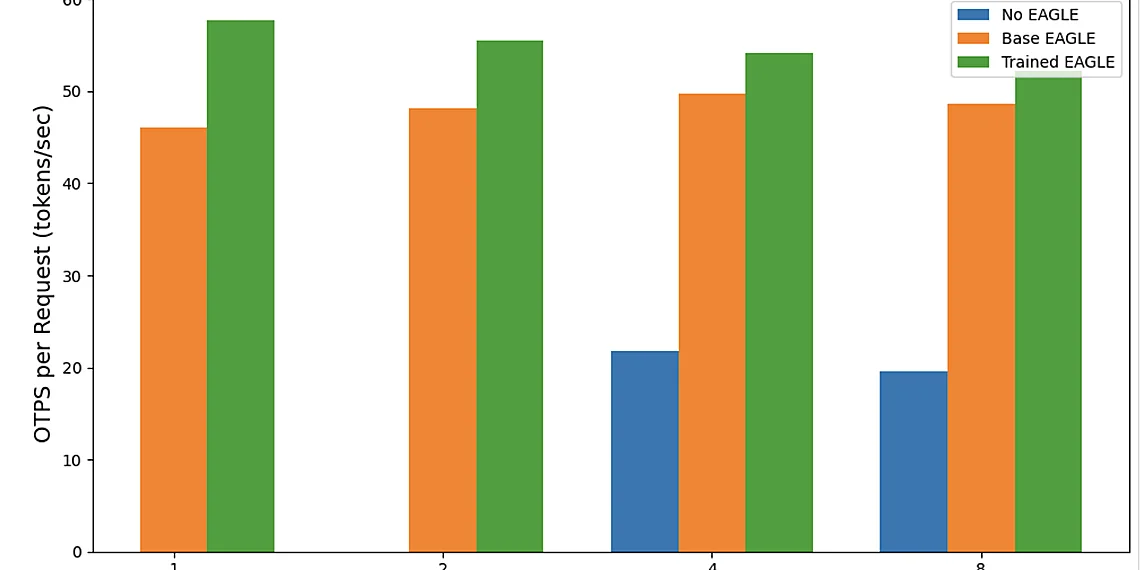

Our experimental results demonstrate ReGraphT’s significant potential for boosting small language models on CUDA optimization tasks. As detailed in the performance gains and benchmarking section, ReGraphT achieved an average 2.33x speedup of the generated code compared with existing approaches, including fine-tuned baseline models. This improvement underscores ReGraphT’s ability to guide SLMs toward highly optimized CUDA code despite their size limitations. The evaluation used two benchmarks: CUDAEval, which assesses the correctness of generated CUDA kernels against a reference, and ParEval, a parallel-code generation benchmark that measures both the correctness and the performance of the generated code. These evaluations showed that ReGraphT not only produces functional CUDA code but also reaches performance levels competitive with larger models.

The speedup itself measures how much faster the generated kernels run, but ReGraphT also eases the generation process for SLMs. Deploying LLMs locally for code generation is resource-intensive, which makes iterative development and experimentation challenging. By letting a small model do the work, ReGraphT significantly reduces this computational burden, allowing faster prototyping and refinement cycles and accelerating the development of custom CUDA kernels tailored to specific hardware configurations and application needs. This is particularly valuable where cloud-based APIs are undesirable because of data privacy concerns or latency requirements.

Looking ahead, these findings suggest several exciting avenues for future research. One is integrating ReGraphT with more advanced SLM architectures, potentially using techniques such as Mixture of Experts (MoE) to further improve code generation while preserving efficiency. Another is extending ReGraphT to a wider range of CUDA programming features and libraries, such as Tensor Cores and asynchronous memory operations. Finally, Reinforcement Learning from Human Feedback (RLHF) tailored to SLM-generated CUDA code could yield further performance gains and improve code quality beyond what is currently achievable.

Performance Gains & Benchmarking

Experimental evaluations show that ReGraphT achieves an average 2.33x speedup of the generated CUDA code compared with existing approaches, including fine-tuned models, demonstrating its effectiveness at boosting smaller language models for GPU optimization. This result highlights ReGraphT’s potential to bridge the gap between the capabilities of larger LLMs and the practicality of SLMs, particularly under resource constraints and data privacy requirements.

The performance gains were benchmarked using CUDAEval and ParEval. CUDAEval assesses code correctness and efficiency by executing generated kernels against a predefined test suite and measuring execution time, while ParEval focuses on parallel code generation, measuring whether generated code is correct and how much speedup it achieves over a baseline. Together, these benchmarks assess both functional accuracy and performance.
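For readers less familiar with speedup figures: a speedup is the baseline’s execution time divided by the candidate’s, and a benchmark-wide figure averages these per-task ratios. The article does not say which mean underlies the reported number, so the sketch below, using invented timings that are not from the paper, simply shows how the arithmetic and geometric means of the same ratios can differ. The function names are hypothetical.

```python
import math


def speedup(baseline_ms, candidate_ms):
    """How many times faster the candidate kernel runs than the baseline."""
    return baseline_ms / candidate_ms


def mean_speedups(timings):
    """Per-task speedups plus their arithmetic and geometric means."""
    ratios = [speedup(base, cand) for base, cand in timings]
    arithmetic = sum(ratios) / len(ratios)
    geometric = math.exp(sum(math.log(r) for r in ratios) / len(ratios))
    return ratios, arithmetic, geometric


# Invented (baseline_ms, candidate_ms) pairs for three tasks.
timings = [(10.0, 5.0), (9.0, 9.0), (16.0, 2.0)]
ratios, arithmetic, geometric = mean_speedups(timings)
print(ratios, round(arithmetic, 2), round(geometric, 2))  # [2.0, 1.0, 8.0] 3.67 2.52
```

The geometric mean is less sensitive to a single outlier task, which is why it is often preferred when summarizing ratios.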

Future research directions include enhancing ReGraphT’s reasoning for more complex CUDA optimization scenarios, testing its applicability across diverse GPU architectures, and developing methods to adapt its parameters automatically to the target application and hardware configuration. These advances could bring even greater efficiency and broader usability to small-model-driven CUDA optimization.

The landscape of AI is shifting rapidly, demanding efficient solutions that don’t compromise on performance or privacy. Large language models have made massive strides, but their resource intensity is a significant barrier to broader adoption and deployment, particularly on local and edge hardware. ReGraphT offers an exciting way to address this challenge, bridging the gap between the capabilities of LLMs and the practicality of smaller, more manageable models.

The approach shows that small models can deliver optimization quality that previously seemed to require much larger ones. Its key lever is deeper CUDA optimization: ReGraphT’s structured reasoning graph lets an SLM apply LLM-derived optimization strategies to specific GPU bottlenecks and produce faster kernels. The combination of privacy-preserving local deployment and dramatically reduced computational overhead makes ReGraphT a compelling alternative for teams that cannot, or prefer not to, send proprietary code to cloud services. Ultimately, this is a significant step toward democratizing access to advanced AI-assisted GPU programming.

To delve deeper into the technical details and explore the full scope of ReGraphT’s potential, we invite you to examine the research paper linked below. Consider how these findings might reshape your approach to GPU development and contribute to a future where powerful AI is accessible everywhere.

We believe that ReGraphT’s design has considerable implications for the ongoing integration of AI hardware and software. Its ability to turn an LLM’s optimization reasoning into a reusable, searchable structure opens new avenues for researchers and engineers alike. The benefits extend beyond performance: the privacy advantages of local deployment are particularly noteworthy in an era where data security is paramount. This convergence of architectural innovation and practical application could benefit GPU-accelerated workloads in fields ranging from autonomous vehicles to personalized healthcare. Further investigation into ReGraphT’s methodology could inspire new optimization strategies and reveal untapped efficiencies within existing GPU architectures.

Discover more from ByteTrending