The relentless surge of data and applications continues to push cloud infrastructure to its absolute limits, demanding ever more resources and driving up operational costs for businesses across every sector. We’re seeing a constant need to do *more* with *less*, a challenge that traditional methods are struggling to address effectively. This isn’t just about scaling; it’s about fundamentally rethinking how we utilize these powerful cloud environments. Increasingly, organizations are grappling with wasted resources and unpredictable performance bottlenecks despite significant investment. The good news? A transformative solution is emerging, leveraging the very technology driving many of those challenges: artificial intelligence. We’re entering an era where AI isn’t just *running* in the cloud; it’s actively optimizing its performance. This article dives deep into how **Cloud AI Optimization** is revolutionizing cloud efficiency, detailing practical strategies and real-world examples that are reshaping how businesses manage their digital landscapes.

Imagine a system that proactively identifies and eliminates resource waste, dynamically adjusts compute power based on demand, and predicts potential outages before they impact your operations. That’s the promise of intelligent cloud management, and it’s moving from futuristic concept to everyday reality. We’ll explore specific AI techniques – from machine learning algorithms analyzing usage patterns to reinforcement learning automating complex configurations – demonstrating how these tools are unlocking unprecedented levels of efficiency. Forget reactive troubleshooting; embrace a proactive, data-driven approach that ensures your cloud infrastructure is operating at peak performance and delivering maximum value.

The Cloud’s Efficiency Bottleneck

Traditional cloud management, while revolutionary in its initial promise, has quietly developed an efficiency bottleneck. On-demand resource allocation is the core principle of cloud computing, yet in practice capacity is rarely matched to demand moment by moment, and the gap often leads to significant waste. When businesses provision virtual machines (VMs) and scale resources based on anticipated peak loads, they frequently end up over-allocating capacity for extended periods. Imagine a retail website expecting a surge during Black Friday: operators might spin up dozens of extra VMs well in advance, only to have them sit idle for weeks afterward, consuming power and incurring costs without providing any value.

This manual process is inherently reactive and imprecise. IT teams rely on historical data and educated guesses, which are rarely perfect predictors of future demand. Furthermore, scaling rules often lag behind actual usage patterns. Consider a startup experiencing rapid growth; their initial estimates for server capacity might quickly become obsolete as user adoption explodes. Scaling up to handle the immediate load is crucial, but downscaling those resources later – identifying underutilized VMs and deallocating them – requires constant monitoring and manual intervention that’s prone to human error and delays.

The consequences of this inefficiency extend beyond just wasted money; it also impacts environmental sustainability. Over-provisioned servers contribute unnecessarily to carbon emissions. Moreover, the complexity of managing these resources manually creates a significant operational burden on IT departments, diverting valuable time and expertise away from more strategic initiatives. These challenges highlight a clear need for a smarter, more adaptive approach to cloud resource management – one that moves beyond reactive scaling and embraces proactive optimization.

Common pitfalls include ‘set it and forget it’ scaling rules which don’t adapt to changing workloads, neglecting right-sizing VMs (choosing the optimal VM size based on actual needs), and failing to leverage auto-scaling features effectively. These practices lead to a persistent state of resource overspending, impacting both the bottom line and overall operational efficiency.

Manual Management & Resource Waste

Historically, managing virtual machines (VMs) and other cloud resources has been a largely manual process. System administrators would provision VMs based on anticipated peak demand, often erring on the side of caution to avoid performance issues. This ‘over-provisioning’ leads directly to wasted resources; a server allocated for potential spikes might sit idle or lightly utilized most of the time. For example, a development environment requiring 20 servers during testing might be provisioned with 30, simply to handle infrequent bursts, resulting in significant CPU and memory wastage.

Scaling, too, frequently suffers from similar inefficiencies. Manual scaling rules often rely on simplistic metrics like average CPU utilization or queue length. These rules struggle to accurately predict demand fluctuations, leading to either underscaling (causing performance degradation) or overscaling (wasting resources). Consider an e-commerce site experiencing a predictable weekly spike in traffic on Sunday evenings; a rule based solely on daily averages might not adequately account for this surge, requiring costly and reactive scaling interventions. This reactive approach is inherently less efficient than proactively adjusting resource allocation.
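To make the Sunday-evening example concrete, here is a minimal Python sketch that compares sizing from a flat average with sizing from an hour-of-week profile. The traffic numbers, the `capacity_per_replica` figure, and the function names are all illustrative assumptions, not taken from any real autoscaler.

```python
import math
from collections import defaultdict

def replicas_needed(requests_per_sec, capacity_per_replica=100):
    """Replicas required to serve a request rate (capacity figure is an assumption)."""
    return max(1, math.ceil(requests_per_sec / capacity_per_replica))

def hour_of_week_profile(history):
    """Average load per (weekday, hour) slot from (weekday, hour, load) samples."""
    buckets = defaultdict(list)
    for weekday, hour, load in history:
        buckets[(weekday, hour)].append(load)
    return {slot: sum(loads) / len(loads) for slot, loads in buckets.items()}

# Synthetic history: a flat 200 req/s, except Sunday (weekday 6) 18:00-21:59 at 900 req/s.
history = []
for week in range(4):
    for weekday in range(7):
        for hour in range(24):
            spike = weekday == 6 and 18 <= hour < 22
            history.append((weekday, hour, 900 if spike else 200))

overall_avg = sum(load for _, _, load in history) / len(history)
profile = hour_of_week_profile(history)

print("flat average sizing:", replicas_needed(overall_avg))
print("Sunday 19:00 sizing:", replicas_needed(profile[(6, 19)]))
```

With this synthetic data the flat average suggests 3 replicas, while the Sunday 19:00 slot calls for 9. A rule that only sees the average would therefore be caught short every week and forced into reactive scaling.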

A common pitfall is the ‘set it and forget it’ mentality regarding VM sizing. Initially sized based on a specific workload profile, VMs may remain unchanged even as application needs evolve or traffic patterns shift. A database server initially provisioned for 100 concurrent connections might later handle only 50, yet retain its oversized allocation. This rigidity prevents dynamic resource optimization and contributes to ongoing cloud cost inefficiencies. Regular audits and adjustments are crucial but often overlooked due to time constraints and the complexity of analyzing historical usage data.
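A periodic right-sizing audit can be sketched in a few lines of Python. The VM catalogue, the 95th-percentile target, and the 20% headroom below are hypothetical choices for illustration; real providers publish their own size families, and the right percentile depends on the workload.

```python
import math

# Hypothetical VM catalogue: name -> vCPU count (illustrative, not a real provider's sizes).
VM_SIZES = {"small": 2, "medium": 4, "large": 8, "xlarge": 16}

def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def recommend_size(cpu_cores_used, pct=95, headroom=1.2):
    """Smallest catalogue size covering the pct-th percentile of usage plus headroom."""
    needed = percentile(cpu_cores_used, pct) * headroom
    for name, cores in sorted(VM_SIZES.items(), key=lambda kv: kv[1]):
        if cores >= needed:
            return name
    return max(VM_SIZES, key=VM_SIZES.get)  # nothing fits: fall back to the largest size

# CPU cores actually used by a VM that is currently provisioned as "xlarge" (16 vCPUs).
observed = [1.5, 2.0, 2.2, 1.8, 3.1, 2.6, 2.4, 2.9, 3.0, 2.1]
print(recommend_size(observed))
```

For the sample above the recommendation is `medium`: peak usage of about 3 cores plus headroom fits comfortably in 4 vCPUs, so the 16-vCPU allocation is four times larger than needed.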

Google’s AI-Powered Approach

Google has long been a pioneer in leveraging artificial intelligence, and their efforts are now significantly impacting the efficiency of their massive cloud infrastructure. Their ‘Cloud AI Optimization’ approach isn’t just about general improvements; it’s a deeply integrated system using machine learning to proactively manage resources and minimize waste. At its core, this involves constantly analyzing vast amounts of data – everything from CPU utilization and memory consumption to network latency and power usage – across their global datacenters. This real-time analysis allows Google’s AI models to predict future resource needs with increasing accuracy.

A key component of this system is the application of reinforcement learning (RL) for virtual machine (VM) placement. Imagine a constantly shifting puzzle where VMs representing different workloads need to be positioned on physical servers. Traditional approaches often rely on static rules or simple heuristics, leading to underutilized resources and potential bottlenecks. Google’s RL agents, however, learn through trial and error – receiving ‘rewards’ when they place VMs in optimal locations (e.g., maximizing resource utilization, minimizing latency) and ‘penalties’ for poor placements. This iterative process allows the system to discover highly efficient VM placement strategies that would be practically impossible to design manually.

The technical details are fascinating: these RL agents aren’t optimizing for a single metric; they consider a complex interplay of factors, including power consumption (crucial for sustainability), network bandwidth, and even hardware degradation. Furthermore, the system doesn’t just react to immediate demands; it anticipates future workload patterns based on historical data and trends. This predictive capability enables proactive resource allocation, preventing performance slowdowns before they occur. While the underlying algorithms are sophisticated – involving deep neural networks and complex reward functions – Google’s implementation aims for a level of automation that requires minimal human intervention.

Ultimately, Google’s Cloud AI Optimization represents a shift towards self-managing cloud infrastructure. By embedding intelligent decision-making directly into the system, they are not only reducing operational costs but also improving overall performance and sustainability. This approach is setting a new standard for cloud efficiency and demonstrating the transformative potential of AI in optimizing complex distributed systems – a trend we expect to see increasingly adopted by other major cloud providers as well.

Reinforcement Learning for VM Placement

Google utilizes reinforcement learning (RL) to dynamically optimize Virtual Machine (VM) placement within its data centers. Traditional VM placement often relies on static rules or simple heuristics, which can lead to inefficient resource utilization and performance bottlenecks. RL offers a more adaptive approach by treating VM placement as a sequential decision-making problem. An ‘agent’ – the RL algorithm – observes the current state of the cluster (workload demands across VMs, hardware capabilities like CPU and memory, network conditions) and then takes an action: moving a VM from one physical server to another.

The core idea is that the agent learns through trial and error. After each placement decision, it receives a ‘reward’ signal. A positive reward might be given for improved resource utilization (e.g., reducing CPU load on overloaded servers), reduced latency for users accessing applications, or lower energy consumption. Conversely, negative rewards are assigned for actions that degrade performance or increase costs. Over time, the agent iteratively refines its placement strategy to maximize cumulative rewards, effectively learning optimal VM placements based on the observed patterns and historical data.
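The reward-driven loop described above can be illustrated with a toy example. What follows is a minimal tabular Q-learning sketch in Python, not Google's system: the two-server cluster, the VM sizes, the reward values (+1 for a successful placement, -5 for an overload), and the use of a lookup table instead of a neural network are all simplifying assumptions made for illustration.

```python
import random
from collections import defaultdict

CAPACITY = 4                 # CPU units per server (toy value)
VM_SIZES = [2, 2, 1, 1, 2]   # VMs arrive in this order; total demand equals total capacity

def step(loads, vm_size, server):
    """Try to place a VM on a server; return (new_loads, reward)."""
    if loads[server] + vm_size > CAPACITY:
        return loads, -5.0               # server would be overloaded: negative reward
    new_loads = list(loads)
    new_loads[server] += vm_size
    return tuple(new_loads), 1.0         # successful placement: positive reward

def train(episodes=5000, alpha=0.5, seed=0):
    """Tabular Q-learning with uniformly random exploration.

    Random exploration is enough here because Q-learning is off-policy:
    it learns the value of the greedy policy whatever actions it tries.
    """
    rng = random.Random(seed)
    q = defaultdict(float)               # (vm_index, loads, server) -> estimated return
    for _ in range(episodes):
        loads = (0, 0)
        for i, size in enumerate(VM_SIZES):
            action = rng.randrange(2)
            new_loads, reward = step(loads, size, action)
            last = i == len(VM_SIZES) - 1
            future = 0.0 if last else max(q[(i + 1, new_loads, a)] for a in range(2))
            q[(i, loads, action)] += alpha * (reward + future - q[(i, loads, action)])
            loads = new_loads
    return q

def greedy_rollout(q):
    """Follow the learned policy with no exploration and report the outcome."""
    loads, total = (0, 0), 0.0
    for i, size in enumerate(VM_SIZES):
        action = max(range(2), key=lambda a: q[(i, loads, a)])
        loads, reward = step(loads, size, action)
        total += reward
    return loads, total

final_loads, total_reward = greedy_rollout(train())
print(final_loads, total_reward)
```

The arrival order is chosen so that a naive "keep the servers balanced" heuristic fails: spreading the two small VMs across both servers leaves no room for the final VM. The agent discovers from the penalty alone that the small VMs must share a server, which is the kind of non-obvious strategy that hand-written rules tend to miss.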

Importantly, Google’s implementation doesn’t involve hand-coded rules dictating every possible action. Instead, a neural network serves as the ‘brain’ of the RL agent, approximating the complex relationship between cluster state and optimal actions. This allows the system to generalize to unseen scenarios and adapt quickly to changing workloads, unlike rule-based systems that require manual updates. The process is largely automated, requiring minimal human intervention after initial training.

Benefits & Real-World Impact

The integration of AI for cloud optimization isn’t just a theoretical exercise; it’s delivering tangible benefits across several key areas. Organizations are seeing significant cost reductions, driven by smarter resource allocation and elimination of waste. Google’s internal research, for example, has demonstrated the power of these algorithms to dramatically reduce operational expenses while simultaneously boosting performance. We’re talking about optimizing virtual machine (VM) utilization rates – ensuring that resources aren’t sitting idle when they could be actively contributing to workload processing. This directly translates into fewer machines needed to handle the same level of demand, a substantial impact on cloud spending.

Beyond cost savings, AI-powered optimization is fueling performance improvements for cloud applications. Predictive analytics allow systems to anticipate fluctuating demands and proactively adjust resource provisioning, avoiding bottlenecks and latency issues that can frustrate users. Machine learning models are constantly analyzing patterns in application behavior and infrastructure health, enabling dynamic adjustments to scaling policies and workload placement. This results in faster response times, increased throughput, and an overall enhanced user experience – vital for businesses relying on cloud services to power their operations.

The positive impact extends beyond the purely economic and performance-related aspects; AI optimization is also proving crucial for achieving sustainability goals within the cloud computing space. The reduced demand for hardware directly correlates with lower energy consumption in data centers, a significant contributor to carbon emissions. By maximizing resource utilization and minimizing waste, Cloud AI Optimization allows companies to reduce their environmental footprint while maintaining or even improving service levels. This aligns perfectly with the growing global emphasis on responsible technology practices.

Ultimately, the real-world impact of Cloud AI Optimization showcases a compelling convergence of economic viability, performance enhancement, and environmental responsibility. As these techniques mature and become more widely adopted, we can expect to see increasingly sophisticated solutions that further refine cloud infrastructure management – leading to even greater efficiency gains and a more sustainable future for digital services.

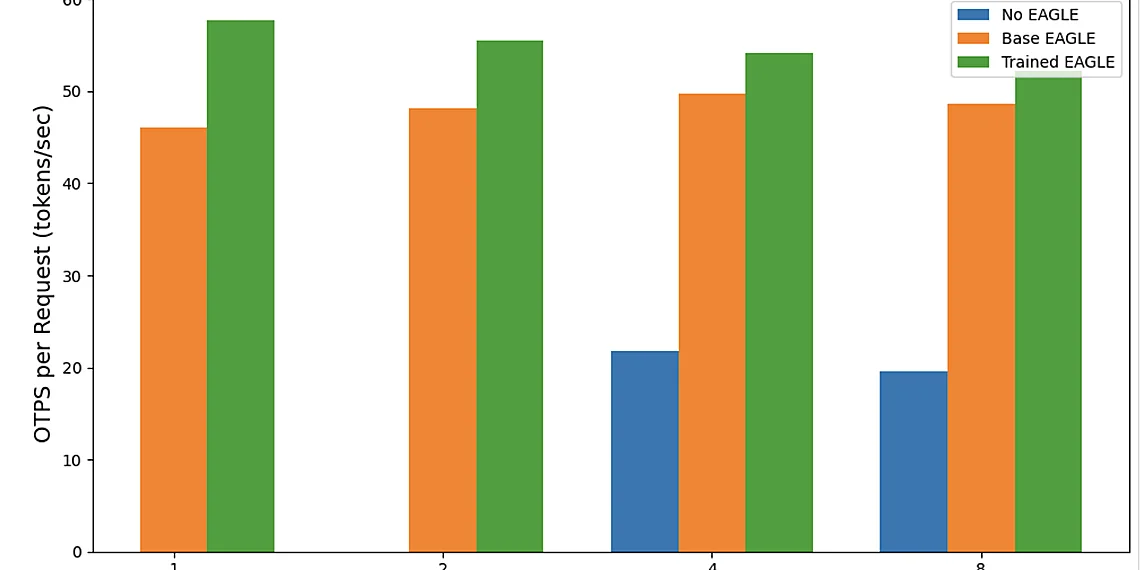

Quantifiable Improvements: Cost & Performance

Google’s research has consistently demonstrated significant operational cost reductions through the application of AI to cloud infrastructure management. Specifically, their work on automated VM placement and resource allocation using reinforcement learning has yielded impressive results. One study found that employing AI-driven scheduling reduced overall operational costs by approximately 30% compared to traditional rule-based systems. This reduction stems from more efficient utilization of available compute resources and minimized idle capacity.

Beyond cost savings, Cloud AI Optimization also translates into improved performance metrics. Google’s research indicates a substantial increase in Virtual Machine (VM) utilization rates – often exceeding 60% with AI-powered management, compared to typical rates around 35-45% for manually managed or rule-based systems. Higher utilization means more work is accomplished with the same underlying infrastructure. On its own, running machines hotter does not make applications faster; the performance benefit comes from the ability of AI algorithms to adapt dynamically to changing workloads, so that resources are allocated where and when they’re needed and response times hold up even as utilization rises.
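A quick back-of-the-envelope calculation shows what those utilization figures imply for fleet size. The workload size (1,000 busy cores) and the machine size (32 cores) in this Python sketch are made-up inputs; only the 40% and 60% utilization levels echo the ranges quoted above.

```python
import math

def machines_needed(busy_cores, cores_per_machine, utilization):
    """Machines required to supply `busy_cores` of work at a given average utilization."""
    return math.ceil(busy_cores / (cores_per_machine * utilization))

before = machines_needed(1000, 32, 0.40)   # rule-based management
after = machines_needed(1000, 32, 0.60)    # AI-driven management
print(before, after, f"{1 - after / before:.0%} fewer machines")
```

Under these assumptions the fleet shrinks from 79 machines to 53, roughly a third fewer, which is in the same range as the cost reduction cited earlier.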

The benefits extend beyond immediate financial gains and performance boosts; these improvements contribute to increased sustainability within cloud operations. By minimizing wasted compute power, AI-driven optimization reduces the overall energy consumption associated with running large-scale cloud infrastructure. This aligns with growing industry efforts to reduce carbon footprints and promote environmentally responsible computing practices. Google’s internal data suggests a potential reduction in electricity usage proportional to the cost savings achieved through these AI optimizations.

Sustainability Implications

The increasing energy demands of cloud computing are a growing concern. Data centers consume vast amounts of electricity to power servers, cooling systems, and networking equipment. However, recent advancements in Cloud AI Optimization offer a significant pathway towards mitigating this impact. By intelligently managing resource allocation – dynamically adjusting CPU utilization, memory allocation, and even server placement based on real-time demand – AI algorithms are demonstrably reducing the overall energy footprint of cloud infrastructure.

Specifically, AI-optimized facilities often report improvements in Power Usage Effectiveness (PUE), a key metric for data center efficiency defined as total facility energy divided by the energy delivered to IT equipment. A lower PUE, closer to the ideal of 1.0, indicates that less power is being spent on overhead like cooling and more is directly powering computing workloads. Some reports put the PUE decrease from AI-driven management at as much as 15-30%, although the achievable figure depends heavily on the facility and its starting point. Any such reduction in electricity consumption has a direct positive impact on carbon emissions, contributing to broader sustainability goals.
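Because PUE is a ratio, it helps to see how it moves in practice. The short Python sketch below uses invented energy figures to compare two scenarios: trimming cooling overhead, and reducing IT load through consolidation while overhead stays fixed.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy divided by IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh

# Illustrative figures only: 1000 kWh of IT load plus 500 kWh of cooling and other overhead.
baseline = pue(1000 + 500, 1000)

# Scenario A: smarter cooling control trims overhead from 500 to 350 kWh.
cooling_optimized = pue(1000 + 350, 1000)

# Scenario B: consolidation cuts IT load to 800 kWh while overhead stays at 500 kWh.
consolidated = pue(800 + 500, 800)

print(baseline, cooling_optimized, consolidated)
```

Scenario A lowers PUE from 1.5 to 1.35. Scenario B uses less energy overall (1,300 kWh instead of 1,500 kWh) yet its PUE rises to 1.625, because the fixed overhead is now spread over a smaller IT load. PUE is therefore best read alongside total energy consumption rather than in isolation.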

Beyond simply reducing power usage, Cloud AI Optimization also allows for more efficient hardware utilization. By identifying and consolidating underutilized servers or migrating workloads to regions with lower energy costs (e.g., those leveraging renewable sources), cloud providers can minimize waste and maximize the environmental benefits of their operations. This proactive approach to resource management represents a crucial step towards building a more sustainable future for cloud computing.
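Consolidating underutilized servers is, at its simplest, a bin-packing problem. The Python sketch below uses the classic first-fit decreasing heuristic as a stand-in for whatever a production scheduler actually does; the VM demands and the 16-core server capacity are hypothetical.

```python
def consolidate(vm_demands, server_capacity):
    """Pack VM demands onto as few servers as the first-fit decreasing heuristic finds.

    Returns a list of servers, each represented as a list of the VM demands it hosts.
    """
    servers = []
    for demand in sorted(vm_demands, reverse=True):
        if demand > server_capacity:
            raise ValueError(f"VM demand {demand} exceeds server capacity")
        for hosted in servers:
            if sum(hosted) + demand <= server_capacity:
                hosted.append(demand)    # fits on an already powered-on server
                break
        else:
            servers.append([demand])     # no room anywhere: power on another server
    return servers

# CPU cores in use by eight VMs that each occupy their own 16-core server today.
vms = [6, 2, 5, 3, 4, 1, 7, 4]
packed = consolidate(vms, 16)
print(len(vms), "servers before,", len(packed), "after:", packed)
```

In this example eight lightly loaded servers collapse to two fully loaded ones, freeing six machines to be powered down or reassigned. A real scheduler would also weigh memory, network locality, and failure domains, which this sketch ignores.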

The Future of Cloud Management

The rise of Cloud AI Optimization isn’t merely about squeezing a few extra percentage points from existing infrastructure; it represents a fundamental shift in how we conceptualize and manage cloud resources. Historically, cloud management has relied on rule-based systems and human intervention – processes that are inherently reactive and often struggle to adapt to the dynamic nature of modern workloads. AI offers a proactive and adaptive approach, capable of analyzing vast datasets in real-time and making intelligent decisions about resource allocation, power consumption, and overall system performance. This move towards automated intelligence promises not just cost savings but also increased resilience and improved developer productivity.

Looking ahead, we can anticipate even more sophisticated applications of AI within cloud environments. Imagine a future where AI agents autonomously negotiate resource contracts with different cloud providers, dynamically shifting workloads to optimize for price and performance based on real-time market conditions. We’ll likely see AI powering predictive maintenance across entire data center ecosystems, anticipating hardware failures *before* they impact services. The integration of generative AI models could even lead to the automated design and optimization of custom cloud architectures tailored to specific business needs – a level of personalization previously unimaginable.

Beyond the VM placement work discussed earlier, the scope for AI’s influence is expanding rapidly. Auto-scaling will evolve from simple threshold-based adjustments to intelligent predictions based on complex behavioral patterns. Anomaly detection will become increasingly granular, identifying subtle deviations that indicate potential security threats or performance bottlenecks. Furthermore, AI can be leveraged to automate tedious operational tasks, freeing up human engineers to focus on innovation and strategic initiatives. This isn’t about replacing humans; it’s about augmenting their capabilities and creating a more efficient and responsive cloud ecosystem.

Ultimately, the future of cloud management is inextricably linked with advancements in artificial intelligence. While challenges remain – including data privacy concerns, algorithmic bias mitigation, and the need for explainable AI – the potential benefits are too significant to ignore. Cloud AI Optimization isn’t just a trend; it’s a necessary evolution that will define the next generation of scalable, resilient, and intelligent cloud infrastructure.

Beyond VM Placement: Expanding AI’s Role

While initial applications of AI in cloud management largely focused on virtual machine placement – dynamically assigning VMs to physical servers based on workload demands – the scope of Cloud AI Optimization is rapidly expanding. Modern cloud environments are incredibly complex, involving numerous interconnected services and intricate configurations. AI algorithms are now being deployed to optimize auto-scaling policies, learning from historical data and real-time metrics to predict resource needs more accurately than traditional rule-based approaches. This results in reduced costs by avoiding over-provisioning while simultaneously maintaining application performance and availability.

Beyond simple scaling, AI is proving invaluable for anomaly detection within cloud infrastructure. Machine learning models can be trained on vast datasets of operational logs and performance metrics to identify unusual patterns that might indicate underlying problems – from failing hardware components to security breaches. These proactive alerts allow DevOps teams to address issues before they escalate into major outages or data compromises, significantly improving overall system resilience and reducing mean time to resolution (MTTR). The ability to correlate events across diverse cloud services is a key advantage of AI-powered anomaly detection.
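Production anomaly detectors rely on trained models, but the underlying idea can be shown with a much simpler statistical stand-in. This Python sketch flags any metric sample that sits more than three standard deviations away from a trailing window; the window size, the threshold, and the latency values are illustrative assumptions.

```python
from collections import deque
from statistics import mean, pstdev

def detect_anomalies(values, window=10, threshold=3.0):
    """Return indices of samples that deviate sharply from the trailing window."""
    recent = deque(maxlen=window)
    anomalies = []
    for i, value in enumerate(values):
        if len(recent) == window:            # wait until the window is full
            mu, sigma = mean(recent), pstdev(recent)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                anomalies.append(i)
        recent.append(value)
    return anomalies

# Request latency in milliseconds, with one obvious spike.
latency_ms = [20, 21, 19, 20, 22, 21, 20, 19, 21, 20, 20, 95, 21, 20]
print(detect_anomalies(latency_ms))
```

Only the 95 ms sample at index 11 is flagged. Note that the spike then enters the window and inflates the standard deviation for the next few samples, which is one reason real systems use more robust statistics or learned baselines.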

Looking ahead, we can anticipate even more sophisticated applications of Cloud AI Optimization. Generative AI models may soon automate the creation and optimization of entire cloud architectures, tailoring them precisely to specific business needs. Furthermore, AI could play a crucial role in bolstering cloud security by proactively identifying and mitigating vulnerabilities, essentially acting as an ‘always-on’ security expert constantly learning and adapting to new threats. The integration of reinforcement learning techniques promises further automation and refinement of these processes, continuously improving cloud efficiency and reducing the burden on human operators.

The journey we’ve taken through this article clearly demonstrates that artificial intelligence isn’t just a futuristic concept; it’s actively reshaping the landscape of cloud computing today.

We’ve seen how AI-powered tools are streamlining resource allocation, predicting performance bottlenecks, and automating complex management tasks – all leading to significant cost savings and improved operational efficiency.

The power to proactively manage your cloud environment rather than reactively addressing issues is a game changer for businesses of all sizes.

A crucial element driving this evolution is Cloud AI Optimization, allowing organizations to fine-tune their cloud infrastructure for peak performance while minimizing waste and maximizing return on investment. This represents a significant shift from traditional management approaches, offering unprecedented levels of control and insight. The potential for increased agility and innovation through these advancements cannot be overstated; businesses that embrace these technologies will likely gain a considerable competitive advantage in the years to come. Ultimately, this is about empowering your teams with smarter tools and freeing them up to focus on strategic initiatives rather than tedious manual processes. Consider the possibilities – what could your organization achieve with optimized cloud resources and predictive insights? We encourage you to examine how these advancements might be applied within your own organizations and begin planning for a future where AI and cloud computing work in perfect synergy.
