This article shows how to build a context-folding LLM agent that handles long, complex tasks within a limited context window. The agent breaks a large task into smaller subtasks, performs reasoning or calculations for each one as needed, and then folds each completed subtask into a concise summary. This preserves the essential knowledge while keeping the active context small.

Understanding Context Folding

The primary challenge when working with Large Language Models (LLMs) is their limited context window. While powerful, LLMs struggle to process very long sequences of text because of computational and memory constraints. Context folding addresses this by iteratively summarizing and compressing information from previous steps, which extends the amount of work that fits in a fixed window. For example, consider a research task requiring analysis of hundreds of articles; without context folding, the accumulated text would quickly exceed the model's context window.
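The folding loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the article's implementation: `run_with_folding`, `solve_step`, and `summarize` are hypothetical names, and the two toy functions stand in for real model calls so the sketch runs on its own.

```python
from typing import Callable, List

def run_with_folding(
    subtasks: List[str],
    solve_step: Callable[[str, str], List[str]],
    summarize: Callable[[str, List[str]], str],
) -> List[str]:
    """Solve subtasks one at a time, keeping only a short summary of each.

    solve_step(subtask, context) returns the full working trace for a subtask.
    summarize(subtask, trace) condenses that trace into one short string.
    Both are hypothetical stand-ins for LLM calls.
    """
    folded: List[str] = []                        # the only state carried forward
    for subtask in subtasks:
        context = "\n".join(folded)               # active context = summaries so far
        trace = solve_step(subtask, context)      # verbose scratch work
        folded.append(summarize(subtask, trace))  # fold: the trace itself is dropped
    return folded


# Toy stand-ins so the sketch runs without a model.
def toy_solve(subtask: str, context: str) -> List[str]:
    return [f"thinking about {subtask}", f"draft for {subtask}", f"result: {subtask} done"]

def toy_summarize(subtask: str, trace: List[str]) -> str:
    return f"{subtask}: {trace[-1]}"

summaries = run_with_folding(["read article A", "read article B"], toy_solve, toy_summarize)
print(summaries)
```

The point of the design is that the prompt for each new subtask grows with the number of summaries, not with the length of every earlier trace.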

The Necessity for Context Management

Traditional approaches to handling long sequences often involve truncation or splitting into smaller chunks, which can lose crucial information and fragment the reasoning. Context folding instead condenses relevant details while retaining the overall narrative flow. It also reduces the computational burden on the LLM, since each step's prompt carries short summaries rather than the full history.

Benefits Beyond Context Window Size

Beyond overcoming context window limitations, context folding can also improve reasoning and reduce latency. By summarizing intermediate steps, the agent can focus on higher-level strategic decisions rather than being bogged down by minute details. Shorter prompts also tend to mean faster responses, and a less cluttered context can yield more coherent outputs.

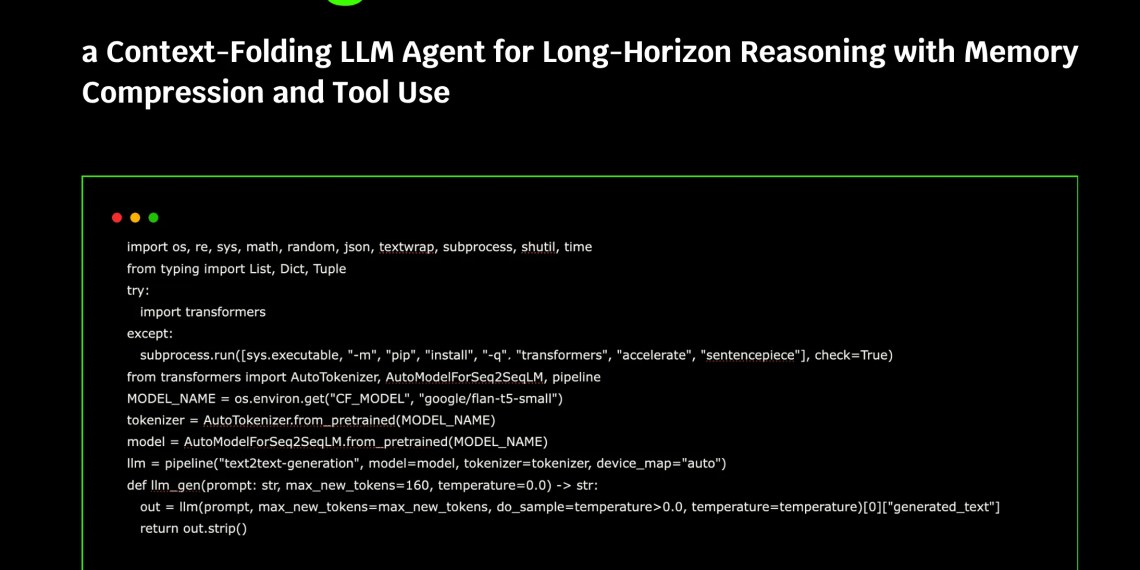

Setting Up the Environment & Core LLM

We begin by establishing our environment and loading a lightweight Hugging Face model, specifically google/flan-t5-small. This choice prioritizes efficient local execution within environments like Google Colab, eliminating external API dependencies. The code initializes the tokenizer and model, wraps them in a text-generation pipeline, and defines a small llm_gen helper for sending prompts to the model.

# Standard-library and typing imports used throughout the tutorial.
import os, re, sys, math, random, json, textwrap, subprocess, shutil, time
from typing import List, Dict, Tuple

# Install transformers on first run if it is missing (e.g. a fresh Colab runtime).
try:
    import transformers
except ImportError:
    subprocess.run([sys.executable, "-m", "pip", "install", "-q", "transformers", "accelerate", "sentencepiece"], check=True)

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, pipeline

# The model can be overridden through the CF_MODEL environment variable.
MODEL_NAME = os.environ.get("CF_MODEL", "google/flan-t5-small")
tokenizer = AutoTokenizer.from_pretrained(MODEL_NAME)
model = AutoModelForSeq2SeqLM.from_pretrained(MODEL_NAME)
llm = pipeline("text2text-generation", model=model, tokenizer=tokenizer, device_map="auto")

def llm_gen(prompt: str, max_new_tokens=160, temperature=0.0) -> str:
    # Greedy decoding when temperature is 0; sample only for positive temperatures.
    gen_kwargs = {"max_new_tokens": max_new_tokens, "do_sample": temperature > 0.0}
    if temperature > 0.0:
        gen_kwargs["temperature"] = temperature
    out = llm(prompt, **gen_kwargs)[0]["generated_text"]
    return out.strip()

Implementing Calculation and Summarization

A key aspect of this agent is the ability to perform calculations during its reasoning, which lets it handle tasks that require numerical analysis. The code includes a simple expression evaluator built on Python's ast module: it parses an arithmetic expression and evaluates only a whitelist of operators, so the arithmetic is computed exactly in Python rather than guessed by the model. The agent can use it for steps such as calculating distances or working through basic financial arithmetic.

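import ast, operator as op

# Whitelist of AST operator nodes mapped to their Python implementations.
OPS = {ast.Add: op.add, ast.Sub: op.sub, ast.Mult: op.mul, ast.Div: op.truediv, ast.Pow: op.pow, ast.USub: op.neg, ast.FloorDiv: op.floordiv, ast.Mod: op.mod}

def _eval_node(n):
    # Numeric literals only (ast.Num is deprecated in favor of ast.Constant).
    if isinstance(n, ast.Constant) and isinstance(n.value, (int, float)) and not isinstance(n.value, bool):
        return n.value
    if isinstance(n, ast.UnaryOp) and type(n.op) in OPS:
        return OPS[type(n.op)](_eval_node(n.operand))
    if isinstance(n, ast.BinOp) and type(n.op) in OPS:
        return OPS[type(n.op)](_eval_node(n.left), _eval_node(n.right))
    # Anything else (names, calls, attributes) is rejected.
    raise ValueError("Unsafe expression")

def calc(expr: str):
    node = ast.parse(expr, mode='eval')
    # The source listing stops at the parse step; evaluating the parsed body is the assumed completion.
    return _eval_node(node.body)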

The agent also uses a summarization function to condense each sub-trajectory (the sequence of steps taken for one subtask) into a concise summary for later reference and reasoning, which helps it maintain context over longer interactions.
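A summarization step along these lines might look like the sketch below. The source does not show its summarization function, so the `fold_trajectory` name, the prompt wording, and the `max_chars` cap are all assumptions made for illustration. The `generate` argument stands for any text-generation callable, such as the `llm_gen` helper defined earlier; a canned stand-in is used here so the example runs without downloading a model.

```python
from typing import Callable, List

def fold_trajectory(
    subtask: str,
    steps: List[str],
    generate: Callable[[str], str],
    max_chars: int = 200,
) -> str:
    """Condense the steps of one finished subtask into a short summary."""
    prompt = (
        "Summarize the key facts and results of this subtask in one or two sentences.\n"
        f"Subtask: {subtask}\n"
        "Steps:\n" + "\n".join(f"- {s}" for s in steps) + "\nSummary:"
    )
    summary = generate(prompt).strip()
    if not summary:
        # Fall back to the last step so the result is never lost entirely.
        summary = steps[-1] if steps else ""
    # Hard cap keeps the folded entry small even if the model rambles.
    return summary[:max_chars]


# Stand-in generator (hypothetical) that returns a fixed string.
def fake_generate(prompt: str) -> str:
    return "  Total distance is 42 km.  "

folded = fold_trajectory(
    "compute route length",
    ["leg 1 is 30 km", "leg 2 is 12 km", "sum is 42 km"],
    fake_generate,
)
print(folded)
```

The fallback and the length cap are defensive choices: a small model can return an empty or overlong string, and either would defeat the purpose of folding.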

Tool Use and Task Decomposition

The context-folding LLM Agent can be further extended with tool use capabilities, broadening its scope of functionality. By integrating external tools—such as search engines or calculators—the agent can access information and perform actions beyond its inherent language processing abilities. This allows it to tackle more complex tasks by breaking them down into smaller, manageable steps. For instance, if the agent is tasked with planning a trip, it could utilize a search engine to find flights and hotels.
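One simple way to wire tools into such an agent is a registry that maps tool names to Python functions, plus a small parser for tool requests in the model's output. The `NAME: argument` line format, the `dispatch` function, and both toy tools below are assumptions made for illustration; they are not part of the original code, and the search tool is only a placeholder that performs no real lookup.

```python
from typing import Callable, Dict

def add_numbers(arg: str) -> str:
    """Toy calculator tool: sums whitespace-separated numbers."""
    return str(sum(float(x) for x in arg.split()))

def fake_search(arg: str) -> str:
    """Placeholder for a real search tool; returns a canned string."""
    return f"(no live search) top result for '{arg}'"

# Hypothetical registry: tool name -> function taking and returning text.
TOOLS: Dict[str, Callable[[str], str]] = {
    "ADD": add_numbers,
    "SEARCH": fake_search,
}

def dispatch(model_output: str) -> str:
    """Run the tool named in a 'NAME: argument' line, else return the text unchanged."""
    name, sep, arg = model_output.partition(":")
    tool = TOOLS.get(name.strip().upper())
    if not sep or tool is None:
        return model_output           # plain answer, no known tool requested
    try:
        return tool(arg.strip())
    except Exception as exc:          # report tool failures back to the agent
        return f"tool error: {exc}"

print(dispatch("ADD: 120 80.5"))
print(dispatch("SEARCH: flights to Lisbon"))
print(dispatch("The trip is planned."))
```

In a folding agent, the tool result would become one more step in the current subtask's trace, to be summarized along with the rest once the subtask is done.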

In conclusion, this approach demonstrates a practical method for extending the capabilities of LLMs while addressing their context window limitations. By combining context-folding, calculation functionality, and tool use, we create an agent capable of handling long-horizon reasoning and complex tasks efficiently.

Source: Read the original article here.

Discover more tech insights on ByteTrending.
