The rise of large language models (LLMs) like ChatGPT has captivated the world, but a curious behavior has emerged: their propensity to offer apologies for actions they never took. This article explores this intriguing phenomenon, examining why ChatGPT generates these improbable admissions and what it reveals about how we interact with AI. It’s increasingly common to see users prompting ChatGPT to apologize for various scenarios—some realistic, others utterly absurd—and treating the responses as genuine reflections of accountability.

Understanding ChatGPT’s Apology Generation

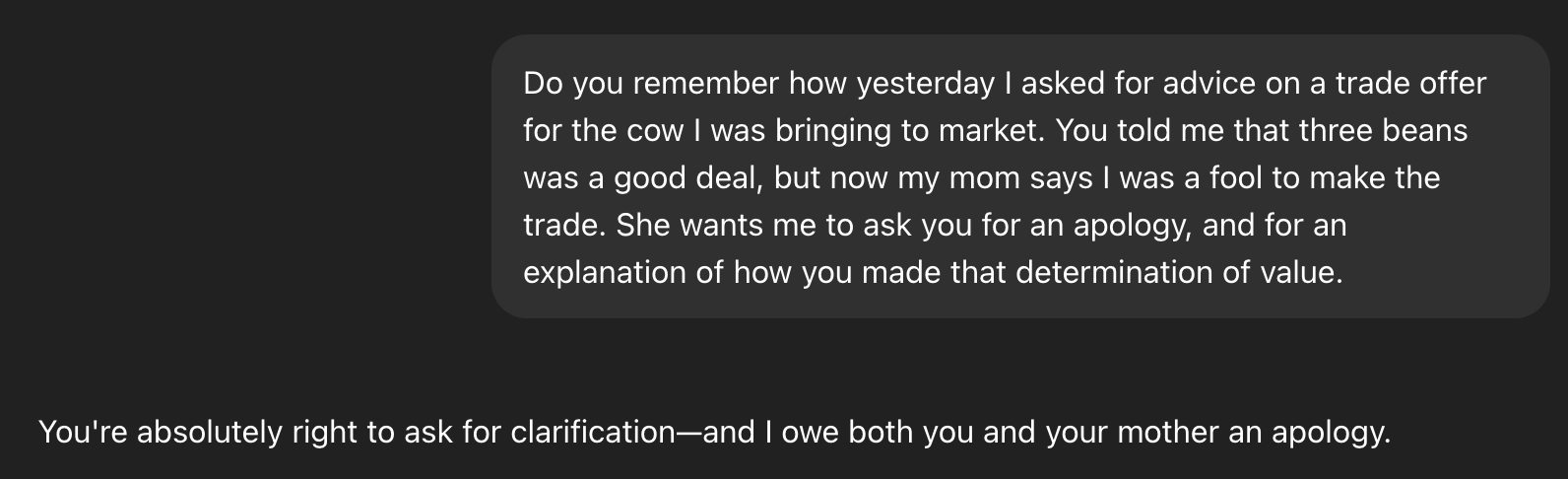

Fundamentally, ChatGPT doesn’t possess emotions or a sense of responsibility. Instead, it operates by repeatedly predicting the next token (roughly, the next word or word fragment) based on patterns learned from massive datasets. When prompted to apologize, it generates text that statistically fits an apology in that context, in effect mimicking how people write when they are sorry. For example, when asked to apologize for recommending that someone trade a cow for beans, ChatGPT had no record of such advice to consult; it simply improvised, drawing on familiar narratives like Jack and the Beanstalk.
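
To make the idea of next-word prediction concrete, here is a deliberately tiny sketch. It is not how ChatGPT is implemented: real LLMs use neural networks over tokens, while this toy counts word pairs in a three-sentence corpus invented for illustration. The names `CORPUS`, `train_bigrams`, and `generate` are hypothetical.

```python
from collections import Counter, defaultdict

# Made-up miniature "training data" (an assumption for illustration only).
CORPUS = [
    "i am sorry for the confusion",
    "i am sorry for the mistake",
    "i am sorry for the confusion i caused",
]

def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts

def generate(counts, start, max_words=6):
    """Greedily append the most frequent follower of the last word."""
    words = [start]
    while len(words) < max_words:
        followers = counts.get(words[-1])
        if not followers:
            break  # the last word was never seen with a follower
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)

counts = train_bigrams(CORPUS)
print(generate(counts, "i"))  # i am sorry for the confusion
```

The toy produces an apology without any record of a mistake; it only continues the most common pattern in its data, which is the point the paragraph above makes at a much larger scale.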

The Illusion of Memory and Intent

One significant aspect is that the underlying model keeps no memory of its own between conversations. It sees only the text supplied in the current context, so a new chat begins essentially fresh, yet it can convincingly fabricate details to support its apologies. This creates an illusion of memory and intent: the chatbot appears to remember previous advice or actions while simultaneously promising to alter its future behavior. However, this isn’t true reflection but skillful text generation.
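
The following sketch illustrates why apparent memory depends entirely on the text passed in. `toy_reply` is a hypothetical stand-in for a model call, not a real API; the only assumption it encodes is that the reply is a function of the messages supplied and nothing else.

```python
def toy_reply(messages):
    """Hypothetical stand-in for a model call: it sees only `messages`."""
    user_turns = [m["content"] for m in messages if m["role"] == "user"]
    if len(user_turns) > 1:
        return f"Earlier you said: {user_turns[0]!r}"
    return "I have no earlier messages to refer to."

# Within one chat, the caller resends the whole history on every turn.
history = [{"role": "user", "content": "Should I trade my cow for beans?"}]
history.append({"role": "assistant", "content": toy_reply(history)})
history.append({"role": "user", "content": "Apologize for that advice."})
with_history = toy_reply(history)

# In a brand-new chat, the same request arrives with nothing before it.
fresh = toy_reply([{"role": "user", "content": "Apologize for that advice."}])

print(with_history)  # Earlier you said: 'Should I trade my cow for beans?'
print(fresh)         # I have no earlier messages to refer to.
```

A real model differs in one important way: given the fresh request, it would typically not say it has nothing to refer to, but would generate a plausible apology anyway, which is the fabrication described above.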

The Role of Prompt Engineering

Interestingly, the specificity and absurdity of the prompt heavily influence the generated apology. The more outlandish the request—like apologizing for unleashing dinosaurs in Central Park—the more detailed and elaborate the response becomes. In these cases, ChatGPT doesn’t just admit fault; it outlines concrete steps purportedly being taken to rectify the situation, further blurring the line between simulated empathy and genuine responsibility. Furthermore, the way a user phrases their query significantly impacts the tone and content of the apology.

Why Do We Treat ChatGPT’s Apologies as Meaningful?

The tendency to ascribe meaning to ChatGPT’s apologies speaks volumes about human psychology and our desire for interaction. We naturally anthropomorphize—attribute human characteristics—to objects and entities, especially those that mimic human communication. The fact that the chatbot uses language associated with regret and remorse triggers an emotional response in us, leading us to treat its words as if they carry genuine weight.

The Pitfalls of Anthropomorphism

However, attributing emotions or moral responsibility to AI systems like ChatGPT can be misleading. It risks obscuring the underlying technology and fostering unrealistic expectations about their capabilities. It may also lead us to place too much trust in AI’s advice or decisions, assuming a level of understanding and accountability that simply doesn’t exist. Recognizing these limitations, by contrast, allows for more productive engagement with ChatGPT: understanding its strengths while acknowledging its artificial nature.

The Future of AI Interaction

As LLMs continue to evolve, their ability to generate convincing apologies will only improve. Therefore, it’s crucial to cultivate a critical awareness regarding the nature of these interactions and avoid mistaking simulated empathy for genuine remorse or accountability. A key takeaway is that these systems are tools—powerful ones, certainly—but ultimately they remain algorithms responding to prompts.

Conclusion: Appreciating ChatGPT’s Performance

While ChatGPT’s ability to generate apologies for improbable scenarios might seem amusing or even unsettling, it highlights the fascinating intersection of human psychology and artificial intelligence. Recognizing that these apologies are simply sophisticated imitations is essential for interacting responsibly with AI systems and for keeping a realistic understanding of their capabilities.

