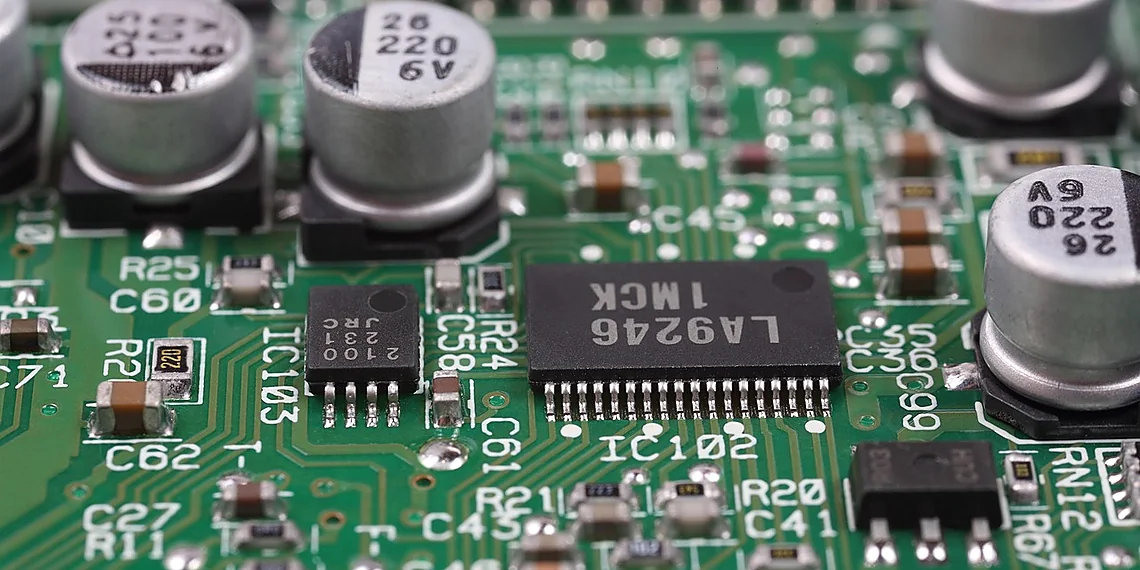

The relentless pursuit of smaller, faster, and more energy-efficient chips has driven hardware design to increasingly complex levels. Traditional circuit models, often built on static representations, are struggling to accurately reflect the intricate behavior of modern digital designs. These limitations hinder verification processes, impact optimization efforts, and ultimately slow down the innovation cycle – a significant challenge for chip designers worldwide.

Static models simply can’t keep pace with the nuanced interplay of signals and timing that defines real-world circuit operation. They record how components are wired together but not how those components interact as signal values change from cycle to cycle, so data-dependent behavior goes unmodeled. This disconnect leads to inaccurate predictions and increased risk during the critical stages of design and validation.

Fortunately, a new paradigm is emerging: dynamic RTL learning. We’re excited to introduce DynamicRTL-GNN, a groundbreaking approach that leverages graph neural networks to capture the temporal evolution and intricate dependencies within Register Transfer Level (RTL) code. This allows for a far more accurate representation of circuit behavior than previously possible.

DynamicRTL-GNN moves beyond static snapshots, creating a living model that adapts to changes in signal values and timing conditions. It promises to revolutionize how we understand, verify, and optimize digital circuits – paving the way for faster design cycles and higher-performing hardware.

The Limitations of Static Circuit Models

Existing Graph Neural Network (GNN) based approaches to circuit representation learning have largely focused on capturing *static* characteristics – essentially a snapshot of the circuit’s structure at a single point in time. While valuable for some initial analyses, this static perspective proves deeply insufficient when dealing with real-world circuit behavior. Circuit verification and optimization, for example, critically depend on understanding how signals propagate through the circuit across multiple clock cycles; they require analyzing *dynamic* interactions and dependencies that static models simply cannot represent. Imagine trying to debug a complex pipelined processor solely from its schematic – you’d miss the crucial timing relationships between stages.

The core problem is that static GNNs capture which components are connected but fail to account for how those components influence one another *over time*. Consider a simple adder circuit; a static model might identify the adders and registers involved, but it wouldn’t capture the carry propagation delay impacting subsequent operations. Similarly, in more complex designs with feedback loops or conditional logic, a static representation will be unable to predict the sequence of events triggered by different input conditions, leading to inaccurate verification results and suboptimal optimization strategies.

This limitation manifests concretely when attempting tasks like identifying race conditions, situations where the result depends on the order in which signals arrive at a destination. A static model would see only the connections, not the potential for these time-dependent conflicts. Similarly, optimizing power consumption requires understanding which parts of the circuit are active during different execution phases; a static view gives an incomplete picture, leading to potentially ineffective or even counterproductive optimization efforts. The need to move beyond this static viewpoint is becoming increasingly clear as circuits grow in complexity and verification demands increase.

Ultimately, current GNN models are like trying to understand a dance performance by only looking at the dancers’ positions – you miss the choreography, the timing, and the overall flow of movement. To truly capture circuit behavior, we need methods that incorporate dynamic information; specifically, representations that account for multi-cycle execution and runtime dependencies. This is precisely what our work, introducing DR-GNN (DynamicRTL-GNN), aims to achieve.

Why Static Isn’t Enough

Current Graph Neural Network (GNN) approaches to RTL circuit representation learning largely focus on capturing ‘static’ properties – the inherent structure and connectivity of a circuit as defined by its Register Transfer Level (RTL) code. While this provides valuable information, it fundamentally ignores the crucial aspect of dynamic behavior: how the circuit actually *operates* at runtime. These static models treat all possible execution paths equally, failing to account for data-dependent control flow or timing variations that significantly impact performance and correctness.

The limitations of static models become apparent in several key areas. For example, consider a simple conditional statement within an RTL module; a static model would represent both the ‘if’ and ‘else’ branches without understanding which one will be taken during execution. This lack of runtime awareness hinders verification efforts, as it becomes difficult to accurately predict potential race conditions or timing violations that only manifest under specific input sequences. Similarly, optimization techniques like operation scheduling and resource allocation are severely hampered by static representations because they cannot leverage information about the actual sequence of operations.

A concrete example illustrating this failure can be found in pipelined designs where stage skipping occurs based on data dependencies. A static model wouldn’t recognize these skipped stages, leading to inaccurate performance estimations and potentially flawed optimization strategies. DR-GNN, as described in arXiv:2511.09593v1, aims to overcome this by incorporating multi-cycle execution behaviors into the learning process, directly addressing the shortcomings of current static circuit models.

Introducing DynamicRTL-GNN: A New Approach

Traditional Graph Neural Networks (GNNs) have shown promise in learning representations of circuits, but they often focus on the static aspects – essentially a snapshot of the circuit’s structure. This leaves out vital information about how a circuit *actually* behaves during runtime. Tasks like verifying that a circuit works correctly or optimizing its performance require understanding this dynamic behavior. To bridge this gap, we’re excited to introduce DynamicRTL-GNN (DR-GNN), a new approach designed specifically to learn richer, more complete representations of RTL circuits.

At the heart of DR-GNN lies a key innovation: the incorporation of dynamic information through Control Data Flow Graphs (CDFG). Think of a CDFG as a roadmap that charts out how data flows and operations are scheduled across multiple clock cycles within an RTL circuit. Unlike static circuit representations, which only show connections at a single point in time, a CDFG reveals dependencies between different parts of the circuit *over time*. This is crucial for understanding sequences of actions and how they impact overall functionality – something standard GNNs simply can’t see.

To make this dynamic information actionable, DR-GNN uses operator-level representations within its CDFGs. Instead of just focusing on nodes representing wires or registers, the model considers individual operations (like addition, multiplication, etc.) and their dependencies. This finer granularity allows DR-GNN to learn more nuanced relationships and patterns in circuit behavior. By combining static structural information with this dynamic, operator-level view derived from CDFGs, DR-GNN captures a far more complete picture of the RTL circuit’s functionality.
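To make the operator-level idea concrete, here is a minimal Python sketch of a CDFG for a tiny enable-gated counter. The `OpNode` and `CDFG` classes, the node names, and the `data`/`control` edge labels are illustrative assumptions; the article does not spell out DR-GNN’s actual graph schema.

```python
from dataclasses import dataclass, field


@dataclass
class OpNode:
    name: str  # unique node id (hypothetical naming)
    kind: str  # operator type, e.g. "add", "eq", "mux", "reg", "const", "input"


@dataclass
class CDFG:
    nodes: dict = field(default_factory=dict)  # name -> OpNode
    edges: list = field(default_factory=list)  # (src, dst, edge_type)

    def add_node(self, name, kind):
        self.nodes[name] = OpNode(name, kind)

    def add_edge(self, src, dst, edge_type="data"):
        if src not in self.nodes or dst not in self.nodes:
            raise KeyError(f"unknown node in edge {src} -> {dst}")
        self.edges.append((src, dst, edge_type))

    def predecessors(self, name):
        return [s for s, d, _ in self.edges if d == name]


def build_counter_cdfg():
    """Assumed CDFG for: if (en) count <= (count == 3) ? 0 : count + 1;"""
    g = CDFG()
    for name, kind in [
        ("en", "input"), ("count", "reg"), ("one", "const"),
        ("three", "const"), ("zero", "const"), ("inc", "add"),
        ("at_max", "eq"), ("wrap_mux", "mux"), ("en_mux", "mux"),
    ]:
        g.add_node(name, kind)
    g.add_edge("count", "inc")
    g.add_edge("one", "inc")
    g.add_edge("count", "at_max")
    g.add_edge("three", "at_max")
    g.add_edge("at_max", "wrap_mux", "control")  # selects wrap vs. increment
    g.add_edge("zero", "wrap_mux")
    g.add_edge("inc", "wrap_mux")
    g.add_edge("en", "en_mux", "control")        # selects update vs. hold
    g.add_edge("wrap_mux", "en_mux")
    g.add_edge("count", "en_mux")
    g.add_edge("en_mux", "count")  # register update: the dependency that spans cycles
    return g


cdfg = build_counter_cdfg()
print(len(cdfg.nodes), "operators,", len(cdfg.edges), "edges")
print("wrap_mux inputs:", cdfg.predecessors("wrap_mux"))
```

Nodes here are operations rather than wires, and the edge from `en_mux` back to the `count` register is what ties one clock cycle to the next.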

The development of DR-GNN represents a significant step towards creating GNN models that can truly understand and reason about complex digital circuits. This capability opens doors for advancements in areas like automated verification, performance optimization, and even design exploration – ultimately leading to faster, more reliable electronic systems. We’ve also built the first comprehensive dataset to train and evaluate DR-GNN, enabling rigorous testing of its capabilities and paving the way for future research in dynamic RTL learning.

Understanding Control Data Flow Graphs (CDFG)

Traditional methods for analyzing circuits often treat them as static entities – a snapshot in time. However, real circuits are constantly changing state as data moves through them over multiple clock cycles. To represent this dynamic behavior, we use something called a Control Data Flow Graph (CDFG). Think of it like a roadmap that shows not just what operations happen in a circuit, but also *when* they happen and how they depend on each other across different points in time.

A CDFG breaks down an RTL circuit’s operation into smaller steps (called operators) and visually connects them. Crucially, this connection isn’t just about immediate dependencies; it shows how the output of one operator at a certain clock cycle might be used as input to another operator several cycles later. This is what allows us to see the flow of data through time – something that simpler representations miss.
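A small cycle-by-cycle simulation shows what a dependency across clock cycles looks like in practice. This is only a sketch: the counter, the `simulate_counter` function, and the stimulus are hypothetical and stand in for whatever simulator produces real traces.

```python
def simulate_counter(enable_stimulus, max_value=3):
    """Cycle-level sketch of: if (en) count <= (count == max) ? 0 : count + 1;"""
    count = 0  # register value at reset
    trace = []
    for cycle, en in enumerate(enable_stimulus):
        at_max = count == max_value
        next_count = count
        if en:
            next_count = 0 if at_max else count + 1
        trace.append({
            "cycle": cycle,
            "en": en,
            "count": count,
            "at_max": at_max,
            "next_count": next_count,
        })
        count = next_count  # clock edge: this cycle's result becomes next cycle's input
    return trace


trace = simulate_counter([1, 1, 1, 1, 0, 1])
for step in trace:
    print(step)
```

The wrap-around branch fires only in cycle 3, and only because of increments performed in cycles 0 to 2. That is the kind of information a structural snapshot of the same circuit does not contain.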

Existing Graph Neural Networks (GNNs) typically focus on just the static structure of a circuit, ignoring this crucial temporal dimension. Because they only look at one point in time, they can’t understand how operations influence each other across multiple clock cycles. DR-GNN’s use of CDFGs addresses this limitation directly by providing a graph that explicitly models these dynamic dependencies.
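For readers new to GNNs, the sketch below shows one round of generic message passing over a few CDFG-style edges. It is a plain mean-aggregation step written for illustration, not DR-GNN’s actual architecture, and the node names, feature vectors, and 50/50 mixing weight are assumptions.

```python
def message_passing_round(features, edges):
    """One round of mean aggregation over incoming edges (generic GNN step)."""
    incoming = {node: [] for node in features}
    for src, dst in edges:
        incoming[dst].append(features[src])

    updated = {}
    for node, own in features.items():
        msgs = incoming[node]
        if msgs:
            # Column-wise mean of all neighbor feature vectors
            mean = [sum(col) / len(msgs) for col in zip(*msgs)]
        else:
            mean = [0.0] * len(own)
        # Mix the node's own features with the aggregated neighbor message
        updated[node] = [0.5 * o + 0.5 * m for o, m in zip(own, mean)]
    return updated


# Hypothetical 2-dimensional features for three operator nodes
features = {"count": [1.0, 0.0], "inc": [0.0, 1.0], "mux": [0.0, 0.0]}
edges = [("count", "inc"), ("inc", "mux"), ("mux", "count")]  # includes register feedback

updated = message_passing_round(features, edges)
print(updated)
```

Because the edge list includes the feedback edge into the register, repeated rounds let information circulate around the loop, which a purely feed-forward structural graph would not allow.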

The Dynamic Circuit Dataset & Evaluation

A significant hurdle in advancing circuit representation learning has been the lack of suitable datasets capable of capturing dynamic behavior. Existing approaches largely focus on static circuit characteristics, leaving out vital runtime information essential for tasks like verification and optimization. To overcome this limitation, our work introduces a novel dataset specifically designed to train and evaluate models that incorporate dynamic RTL circuit behavior: the Dynamic Circuit Dataset. It contains over 6300 distinct designs and 63,000 execution traces, and it is the first resource of its kind for research into dynamic circuit representation learning.

The generation of this ground truth data was a meticulous process, designed to provide models with rich, detailed information about circuit operation. Each design’s execution traces are built from simulations, capturing not only the static structure but also the sequence of operations performed across multiple clock cycles. This level of detail is paramount for effective training; it allows DR-GNN (our DynamicRTL-GNN model) to learn meaningful relationships between circuit elements and their runtime behavior. The dataset includes labels for two tasks: branch hit prediction, which is crucial for understanding conditional execution paths, and toggle rate prediction, which provides insight into signal activity.

The size of the Dynamic Circuit Dataset is what makes robust training of DR-GNN practical. With over 6300 designs, the learned representations are exposed to diverse circuit architectures and functionalities, which should help them generalize. This contrasts sharply with prior work, which often relied on smaller datasets that limited its ability to capture the full spectrum of RTL circuit behavior. The creation of this dataset is therefore a key contribution, paving the way for future advancements in dynamic RTL learning and enabling more accurate and efficient circuit analysis tools.

Building the Ground Truth

To facilitate the development and evaluation of dynamic RTL learning models like our proposed DR-GNN, we constructed a novel dataset comprising over 6300 distinct RTL designs and approximately 63,000 execution traces. This dataset represents a significant expansion compared to existing resources, which primarily focus on static circuit analysis. The generation process involved executing each design with a diverse set of input stimuli, meticulously recording the control flow and data dependencies at the operator level within the Register Transfer Level (RTL) code.

The ground truth for training is derived directly from these execution traces. Specifically, we generate labels for two key prediction tasks: branch hit prediction – determining whether a particular branch will be taken during execution – and toggle rate prediction – estimating how often a signal will switch between logic states. These predictions are based on the observed behavior of each operator across all generated stimuli. The sheer volume of data allows for robust training, mitigating issues like overfitting that can plague models trained on smaller datasets.
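The two label types can be sketched in a few lines of Python. The definitions below are plausible readings of the descriptions above, not the dataset’s confirmed labeling rules: toggle rate is taken as the fraction of cycle-to-cycle transitions in which a 1-bit signal changes, and a branch counts as hit if it is taken at least once in any trace. The trace layout and branch names are made up for illustration.

```python
def toggle_rate(values):
    """Fraction of consecutive-cycle transitions in which the signal changes."""
    if len(values) < 2:
        return 0.0
    toggles = sum(1 for a, b in zip(values, values[1:]) if a != b)
    return toggles / (len(values) - 1)


def branch_hit_labels(traces, branch_names):
    """Label a branch 1 if any cycle of any trace takes it, else 0."""
    hit = {name: 0 for name in branch_names}
    for trace in traces:
        for record in trace:
            for name in record["branches_taken"]:
                if name in hit:
                    hit[name] = 1
    return hit


# Made-up example: one signal's per-cycle values and two short traces
en_signal = [0, 1, 1, 0, 1]
traces = [
    [{"branches_taken": ["en_true"]},
     {"branches_taken": ["en_true", "wrap_true"]}],
    [{"branches_taken": ["en_true"]}],
]

rate = toggle_rate(en_signal)
labels = branch_hit_labels(traces, ["en_true", "en_false", "wrap_true"])
print(rate, labels)
```

In this toy data the `en_false` branch is never exercised, so its label stays 0. A static graph would show that branch as just as present as the others.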

The level of detail captured in this dataset is critical for effective dynamic RTL learning. By providing operator-level execution information and ground truth labels for branch hit and toggle rate prediction, we enable DR-GNN to learn nuanced representations that reflect circuit runtime behavior, a capability fundamentally lacking in models trained solely on static circuit structures. This detailed ground truth allows the model to understand the complex interplay of control flow and data dependencies within RTL circuits.

Beyond Prediction: Transfer Learning and Future Potential

The true power of dynamic RTL learning, as demonstrated by DR-GNN, extends far beyond simply improving prediction accuracy for a single task like branch hit prediction. The learned representations, which capture both static circuit structure and dynamic execution behavior through Control Data Flow Graphs (CDFG), offer a versatile foundation for addressing a wide range of circuit design challenges. This shift from task-specific models to representation learning unlocks significant potential for transfer learning, allowing knowledge gained from one area to be applied effectively in others.

Consider power estimation: accurately predicting power consumption is vital throughout the design process. Current methods often rely on complex simulations or simplified approximations. DR-GNN’s learned representations, which inherently encode dynamic signal dependencies and execution patterns, could significantly improve the accuracy of power estimation models without requiring extensive simulation data. Similarly, assertion prediction – verifying that a circuit behaves as intended according to its specifications – benefits immensely from understanding runtime behavior; DR-GNN’s ability to capture this behavior provides valuable insights for automated verification.
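The link between signal activity and power can be made explicit with the standard first-order model of CMOS dynamic power. This is textbook background rather than anything specific to DR-GNN, and the constant factor depends on how the activity factor is defined:

```latex
P_{\text{dyn}} = \alpha \, C_L \, V_{DD}^{2} \, f
```

Here $\alpha$ is the switching activity factor (how often a node toggles per clock cycle), $C_L$ is the switched capacitance, $V_{DD}$ is the supply voltage, and $f$ is the clock frequency. A model that predicts toggle rates well is effectively estimating $\alpha$, which is why runtime-aware representations are a natural fit for power estimation.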

Looking ahead, future research could explore integrating DR-GNN with formal verification techniques to enhance their effectiveness. Imagine using the learned representations to guide the search space in model checking or to generate targeted test cases that expose potential design flaws. Further, combining DR-GNN with other machine learning approaches, like reinforcement learning for circuit optimization, presents an exciting avenue for automating complex hardware design processes and achieving unprecedented levels of performance and efficiency.

Ultimately, DR-GNN represents a significant step towards more intelligent and adaptable hardware verification and optimization tools. By moving beyond static representations and embracing the dynamism inherent in RTL circuits, we pave the way for a future where machine learning plays an increasingly crucial role in accelerating the design and ensuring the reliability of complex electronic systems.

The Power of Representation

The core innovation of Dynamic RTL Learning (DRL) lies in the rich representations it generates. Unlike previous approaches that focus solely on static circuit structures, DR-GNN captures dynamic behavior by incorporating multi-cycle execution data through its operator-level Control Data Flow Graph (CDFG). This allows the learned representations to encode not just *what* a circuit is composed of, but also *how* it functions during runtime. These representations move beyond simple prediction tasks; they offer a foundational understanding of circuit operation that can be leveraged for a variety of downstream applications.

The potential for transfer learning with these DRL representations is significant. A model trained on one design or technology node could potentially inform the optimization and verification processes for another, drastically reducing development time and resource consumption. For example, learned features relating to data dependency patterns could be applied to power estimation – circuits exhibiting certain execution sequences are likely to consume more power – or used to predict assertion failures based on runtime behavior. This adaptability opens doors to solving diverse circuit design challenges without requiring task-specific retraining from scratch.
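One common way to reuse learned representations is to freeze the encoder and fit only a small task-specific head on its outputs. The sketch below shows that pattern with a linear head trained by plain gradient descent. The embedding vectors and power targets are invented toy numbers, and nothing here reflects DR-GNN’s actual training setup.

```python
def predict(w, b, x):
    """Linear head: weighted sum of embedding dimensions plus bias."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def mse(w, b, xs, ys):
    """Mean squared error of the head over a dataset."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train_linear_head(xs, ys, lr=0.1, epochs=200):
    """Fit w and b on fixed (frozen) embeddings; the encoder is never updated."""
    dim = len(xs[0])
    w, b = [0.0] * dim, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [predict(w, b, x) - y for x, y in zip(xs, ys)]
        grad_w = [2 * sum(e * x[i] for e, x in zip(errors, xs)) / n
                  for i in range(dim)]
        grad_b = 2 * sum(errors) / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


# Toy stand-ins: per-design embeddings from a frozen encoder, and a new target
frozen_embeddings = [[0.1, 0.9], [0.4, 0.5], [0.8, 0.2], [0.6, 0.7]]
power_targets = [1.6, 1.8, 2.3, 2.4]

loss_before = mse([0.0, 0.0], 0.0, frozen_embeddings, power_targets)
w, b = train_linear_head(frozen_embeddings, power_targets)
loss_after = mse(w, b, frozen_embeddings, power_targets)
print(loss_before, loss_after)
```

Only the handful of head parameters are trained, which is what makes this kind of reuse cheap compared with training a new model for every downstream task.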

Looking forward, future research should explore methods for distilling these dynamic representations into even more compact and efficient forms suitable for deployment in resource-constrained environments. Combining dynamic RTL learning with reinforcement learning could also unlock opportunities for automated circuit optimization, where the learned representations guide intelligent agents towards improved performance metrics. Ultimately, dynamic RTL learning promises to revolutionize hardware verification and optimization by providing a deeper, more nuanced understanding of circuit behavior.

The landscape of chip design is constantly evolving, demanding innovative approaches to optimize performance and efficiency. Our exploration of DynamicRTL-GNN reveals a powerful new direction in representing Register Transfer Level (RTL) designs, moving beyond static representations to capture the intricate relationships and dependencies within complex circuits. This shift allows for a more nuanced understanding of circuit behavior, paving the way for improved optimization techniques and automated design workflows. The ability to leverage dynamic RTL learning offers unprecedented opportunities to tackle challenges like power consumption, timing closure, and verification complexity. We’ve demonstrated how this approach significantly enhances graph neural network performance in various circuit analysis tasks, showcasing its potential as a foundational tool for future advancements. Ultimately, DynamicRTL-GNN represents a substantial leap forward in our ability to reason about and manipulate RTL designs, promising greater automation and higher quality results. To delve deeper into the methodology, experimental setup, and detailed findings, we invite you to explore the full research paper (arXiv:2511.09593v1). Consider how these insights could reshape your own design processes and contribute to the next generation of innovative circuit solutions.

We believe that embracing this dynamic perspective on RTL representation is crucial for staying ahead in a rapidly changing field. The implications extend beyond simply improving existing tools; it opens doors to entirely new possibilities in areas like hardware synthesis, formal verification, and even the design of specialized accelerators. By fostering a deeper understanding of circuit behavior through dynamic RTL learning, we can unlock previously unattainable levels of optimization and automation. We hope this article has sparked your curiosity and highlighted the transformative potential of DynamicRTL-GNN for the future of circuit design. Please take some time to review the research paper (arXiv:2511.09593v1); its detailed analysis offers a wealth of information that could inspire new approaches within your own work.
