The Problem with Single-Shot Fine-Tuning

Traditional approaches to fine-tuning generative AI models, often referred to as ‘single-shot’ or ‘one-and-done’ methods, present significant challenges for organizations striving for optimal performance. The process typically involves a large upfront investment: meticulously selecting training data, painstakingly configuring hyperparameters like learning rate and batch size, and then crossing your fingers that the resulting model aligns with your intended use case. This inherently risky approach treats fine-tuning as a singular event – a gamble where success relies heavily on initial guesses and often overlooks the power of incremental adjustments.

The core issue lies in the ‘black box’ nature of single-shot fine-tuning. It’s difficult to pinpoint *why* a model performs the way it does after a single training run. Without iterative feedback loops, understanding which data points contribute to errors or how specific hyperparameters influence behavior remains elusive. This lack of transparency makes debugging and optimization incredibly inefficient; you’re essentially flying blind, hoping to stumble upon the right combination through trial and error.

The consequences of this inefficiency are substantial. When a single-shot fine-tuning attempt fails – and it frequently does – organizations face the costly prospect of restarting the entire process from scratch. This means re-evaluating training data, reconfiguring hyperparameters, and reinvesting valuable time and resources into another potentially fruitless endeavor. The cumulative impact of these restarts can significantly delay project timelines and drain budgets, especially when dealing with large datasets or complex tasks.

Beyond the direct financial cost, consider the opportunity cost: the potential for more impactful projects sidelined while teams struggle to coax acceptable results from a stubbornly underperforming model. A shift towards iterative fine-tuning – allowing for continuous refinement based on feedback and performance metrics – offers a far more pragmatic and ultimately successful path to leveraging the power of models like those available through Amazon Bedrock.

Why Single-Shot Fails

Additional details forthcoming.

The Cost of Re-Starts

Additional details forthcoming.

Introducing Iterative Fine-Tuning on Bedrock

For many organizations venturing into generative AI, the promise of customized models runs into the limits of single-shot fine-tuning. You curate training data, configure hyperparameters, and launch one training run; if the result misses the mark, there is no way to make a targeted adjustment, so the entire training process starts over. That costs time and resources and often still yields a suboptimal model. Iterative fine-tuning offers an alternative.

Iterative fine-tuning replaces that single run with a cycle of incremental adjustments driven by evaluation. You begin with an initial fine-tuned model, assess its performance against your specific use case, identify areas for improvement, then make small, targeted changes to the training data or hyperparameters and train again. The cycle repeats, progressively refining the model’s behavior with a precision that a one-and-done run cannot match. It’s about guided evolution rather than hoping for a perfect outcome on the first try.
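The cycle can be sketched as a small loop. This is a minimal illustration rather than anything Bedrock-specific: `train_round` is a hypothetical callable standing in for "train one more round and return its evaluation score", and the scores below are made-up stand-ins so the sketch runs without a training backend.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Round:
    index: int
    score: float
    kept: bool  # True if this round beat the best score seen so far


def iterate(
    train_round: Callable[[int], float],
    target: float,
    max_rounds: int = 5,
) -> List[Round]:
    """Run train-and-evaluate rounds until the target score or round budget is hit.

    train_round(i) is a hypothetical hook: it trains round i and returns an
    evaluation score where higher is better.
    """
    history: List[Round] = []
    best = float("-inf")
    for i in range(1, max_rounds + 1):
        score = train_round(i)
        kept = score > best
        if kept:
            best = score  # the next round would build on this model
        history.append(Round(i, score, kept))
        if best >= target:
            break  # good enough: stop spending on further rounds
    return history


# Stand-in scores (assumed values, not measurements).
fake_scores = {1: 0.71, 2: 0.78, 3: 0.76, 4: 0.84, 5: 0.85}
history = iterate(lambda i: fake_scores[i], target=0.80)
for r in history:
    print(r.index, r.score, "kept" if r.kept else "discarded")
```

A round that scores worse than the current best is recorded but not built upon, which is the difference from a single-shot run: a bad round costs one increment, not the whole effort.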

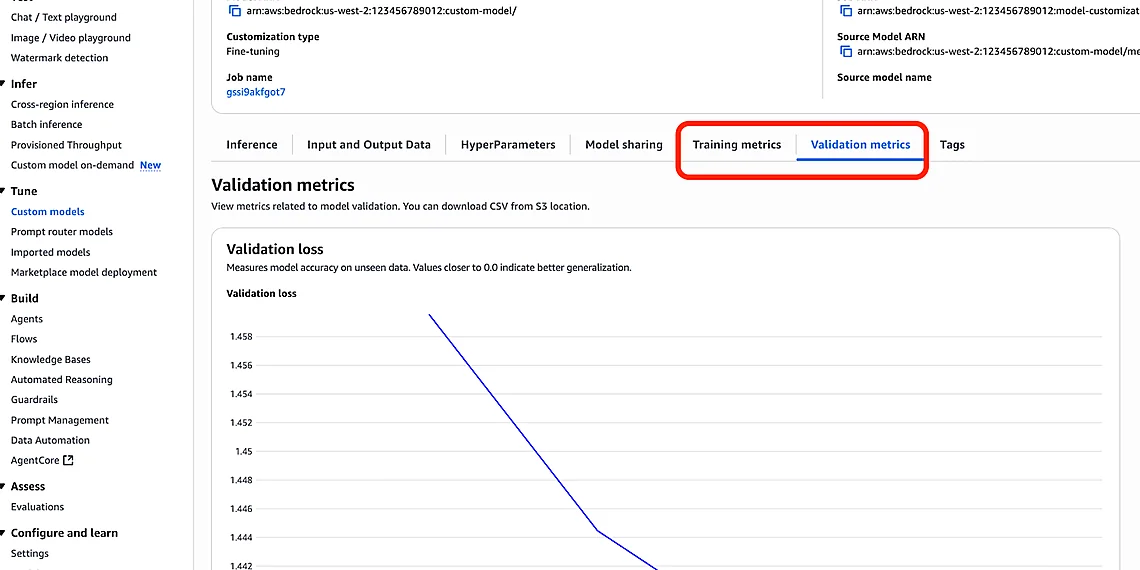

Amazon Bedrock simplifies this technique. As a managed service, it removes much of the operational complexity usually associated with iterative fine-tuning: Bedrock provisions the training infrastructure and keeps each customization job, and the custom model it produces, as a separately named resource, so data scientists can focus on refining model behavior through controlled adjustments. The result is faster experimentation cycles and reduced risk, without needing deep expertise in distributed training or deployment pipelines.
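As a rough sketch of how successive rounds might be chained, the helper below builds the request for a Bedrock model customization job. The field names follow the boto3 `create_model_customization_job` call. Everything else is an assumption for illustration: the `build_job_request` helper, the job naming scheme, the placeholder ARNs and S3 paths, and the `epochCount` value (hyperparameter names and allowed values are model-specific). Whether a particular model accepts a previously customized model as its base should be checked in the Bedrock documentation.

```python
from typing import Dict, Optional


def build_job_request(
    round_index: int,
    base_model: str,
    train_s3_uri: str,
    output_s3_uri: str,
    role_arn: str,
    hyper_parameters: Optional[Dict[str, str]] = None,
    name_prefix: str = "support-bot",  # hypothetical naming scheme
) -> dict:
    """Build keyword arguments for one fine-tuning round (no AWS call is made)."""
    name = f"{name_prefix}-round-{round_index}"
    return {
        "jobName": name,
        "customModelName": name,
        "roleArn": role_arn,
        "baseModelIdentifier": base_model,
        "customizationType": "FINE_TUNING",
        "trainingDataConfig": {"s3Uri": train_s3_uri},
        "outputDataConfig": {"s3Uri": output_s3_uri},
        "hyperParameters": hyper_parameters or {},
    }


# Placeholders: substitute real values from your own account.
ROLE_ARN = "<iam-role-arn>"
ROUND1_CUSTOM_MODEL_ARN = "<custom-model-arn-from-round-1>"

round1 = build_job_request(
    1, "<base-model-id>",
    "s3://<bucket>/round-1/train.jsonl", "s3://<bucket>/round-1/output/",
    ROLE_ARN, {"epochCount": "2"},
)
# Round 2 starts from the model produced by round 1, not from the original base.
round2 = build_job_request(
    2, ROUND1_CUSTOM_MODEL_ARN,
    "s3://<bucket>/round-2/train.jsonl", "s3://<bucket>/round-2/output/",
    ROLE_ARN, {"epochCount": "1"},
)

# To submit (requires boto3 and AWS credentials):
#   import boto3
#   boto3.client("bedrock").create_model_customization_job(**round1)
print(round2["jobName"], "<-", round2["baseModelIdentifier"])
```

Keeping the request construction separate from the API call makes each round's inputs easy to review and store before anything is submitted.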

In essence, iterative fine-tuning on Bedrock gives you more flexibility and control over your customized models. It’s not just about achieving better results; it’s about giving your team a more robust, adaptable, and sustainable approach to generative AI development.

What is Iterative Fine-Tuning?

Additional details forthcoming.

Bedrock’s Role in Simplification

Additional details forthcoming.

Key Benefits & Strategic Advantages

Generative AI deployments often stumble when they rely on single-shot fine-tuning: you select data, configure parameters, and hope for acceptable results. This one-pass approach frequently produces models that underperform expectations, and any adjustment means restarting the entire training cycle from square one. Iterative fine-tuning with Amazon Bedrock replaces it with a cycle of evaluation, adjustment, and retraining, so improvement is measured at each round rather than guessed at.

The most significant advantage of iterative fine-tuning on Bedrock is better model accuracy and relevance to specific business needs. Instead of hoping a one-time training run captures all nuances of your data, each iteration lets you pinpoint where the model struggles and target those areas with tailored adjustments. This granular control leads to outputs that are more accurate, contextually appropriate, and aligned with your desired outcomes, whether the task is generating marketing copy, summarizing legal documents, or powering a customer service chatbot.
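One simple way to find where a model struggles is to break evaluation results down by category. The sketch below assumes each evaluation example has already been labeled with a category and a pass/fail judgment; the category names, sample results, and the 0.2 threshold are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def error_rate_by_category(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the fraction of failed examples per category.

    Each result is (category, correct), where correct is True on a pass.
    """
    totals: Dict[str, int] = defaultdict(int)
    errors: Dict[str, int] = defaultdict(int)
    for category, correct in results:
        totals[category] += 1
        if not correct:
            errors[category] += 1
    return {c: errors[c] / totals[c] for c in totals}


def weakest_categories(rates: Dict[str, float], threshold: float) -> List[str]:
    """Categories whose error rate exceeds the threshold, worst first."""
    over = [c for c, r in rates.items() if r > threshold]
    return sorted(over, key=lambda c: rates[c], reverse=True)


# Made-up evaluation results for a customer service use case.
results = [
    ("billing", True), ("billing", False), ("billing", False), ("billing", True),
    ("returns", True), ("returns", True), ("returns", True), ("returns", False),
    ("shipping", True), ("shipping", True),
]
rates = error_rate_by_category(results)
targets = weakest_categories(rates, threshold=0.2)
print(rates)
print("add training examples for:", targets)
```

The categories returned are candidates for additional or corrected training examples in the next round.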

Beyond improved accuracy, iterative fine-tuning can shorten overall development time. Evaluating the model after each iteration and making targeted changes tightens the feedback loop. Rather than discarding entire training runs that prove unsatisfactory, developers can identify specific areas for improvement and focus their efforts there. This minimizes wasted resources and lets teams deploy high-performing models sooner.

Finally, iterative fine-tuning on Bedrock offers a level of control and transparency often lacking in single-shot methods. Because each iteration changes only a small, known set of inputs, a shift in evaluation results can be traced back to a specific data or hyperparameter change, which helps developers understand the model’s behavior and steer it deliberately. This visibility builds confidence in your AI solutions and makes debugging and ongoing optimization easier, helping your models remain effective and aligned with evolving business requirements.
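One way to keep that visibility is to record what changed in each round next to the metrics it produced, then compare consecutive rounds. The record structure, metric names, and values below are illustrative assumptions, not output from Bedrock.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RoundRecord:
    name: str
    changes: List[str]         # what was altered relative to the previous round
    metrics: Dict[str, float]  # evaluation metrics measured after training


def metric_deltas(prev: RoundRecord, curr: RoundRecord) -> Dict[str, float]:
    """Change in each metric shared by two consecutive rounds (curr minus prev)."""
    return {
        k: round(curr.metrics[k] - prev.metrics[k], 4)
        for k in curr.metrics
        if k in prev.metrics
    }


# Made-up records for two rounds.
round1 = RoundRecord(
    "round-1",
    ["initial training set"],
    {"accuracy": 0.78, "refusal_rate": 0.10},
)
round2 = RoundRecord(
    "round-2",
    ["added 200 billing examples"],
    {"accuracy": 0.83, "refusal_rate": 0.07},
)

deltas = metric_deltas(round1, round2)
print(f"{round2.name}: {', '.join(round2.changes)} -> {deltas}")
```

When a round changes only one thing, the delta can reasonably be attributed to that change; when several things change at once, the attribution becomes ambiguous, which is an argument for keeping rounds small.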

Improved Model Accuracy

Additional details forthcoming.

Reduced Development Time

Additional details forthcoming.

Enhanced Control & Transparency

Additional details forthcoming.

Getting Started with Iterative Fine-Tuning

Content forthcoming.

Data Preparation Best Practices

Additional details forthcoming.

Leveraging Bedrock’s Evaluation Tools

Additional details forthcoming.

