Analyzing complex data such as software vulnerabilities often means drawing on several kinds of information at once, an approach known as multimodal data analysis. Designing multimodal graph neural networks (MGNNs) that handle this complexity is difficult, however. Research presented in arXiv:2510.07325 introduces MACC-MGNAS, a framework that automates the design process and reports gains in both accuracy and search efficiency. The approach aims to improve vulnerability analysis by optimizing how multimodal data is integrated.

Understanding the Challenges of Multimodal Data Integration

Many real-world problems involve integrating data from different sources, such as text, images, numerical values, and structured reports. Each of these ‘modalities’ provides unique insights that contribute to a more complete understanding. For example, when analyzing software vulnerabilities, code snippets represent textual data, network traffic logs offer numerical information, and security reports provide structured details. Multimodal graph neural networks are designed specifically to combine these diverse signals effectively.

The Difficulty of Manual MGNN Design

Designing an MGNN architecture by hand that integrates these modalities well is difficult. The process demands careful coordination of specialized components for each modality at every layer of the network, so it is error-prone and requires significant expertise. On top of that, the interactions between modalities must themselves be tuned to achieve high performance.

Introducing MACC-MGNAS: A Cooperative Co-Evolution Framework

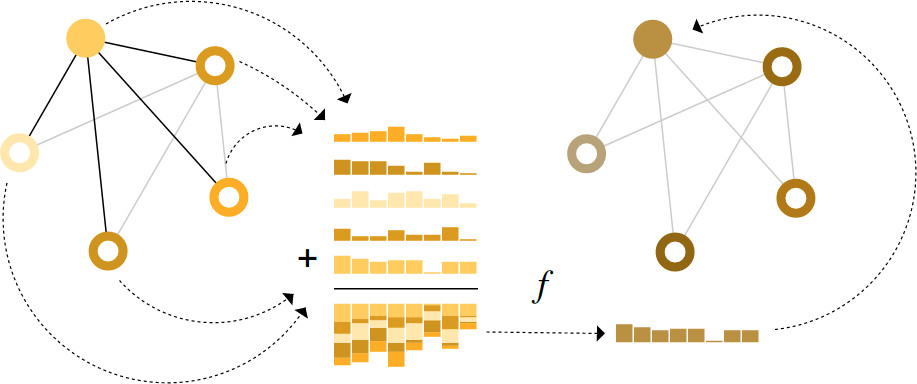

The new research introduces MACC-MGNAS, a graph neural architecture search (GNAS) method based on genetic algorithms, to address this challenge. Existing GNAS methods often struggle here because they focus primarily on a single modality and do not account for the interplay between different data types. The core innovation of MACC-MGNAS is a ‘modality-aware cooperative co-evolution’ (MACC) framework.

How MACC Works: A Divide-and-Conquer Strategy

The MACC framework employs a ‘divide and conquer’ strategy, partitioning the search process into modality-specific groups. This allows for independent evolution of components tailored to each individual data type. Subsequently, a central ‘coordinator’ reassembles these components for joint evaluation, ensuring that the overall architecture is optimized for multimodal integration.

- Modality Partitioning: Enables parallel optimization of components specific to each data modality.

- Coordinated Evaluation: Ensures holistic performance across all modalities within the integrated network.
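The divide-and-conquer loop described above can be sketched in a few lines of Python. This is a minimal illustration of generic cooperative co-evolution, not the paper's implementation: the modality names, the operator lists in `SEARCH_SPACE`, and `toy_fitness` (a stand-in for training and validating a real network) are all assumptions made for the example.

```python
import random

# Hypothetical search space: candidate operators per modality (assumed names).
SEARCH_SPACE = {
    "text": ["gcn", "gat", "sage"],
    "numeric": ["mlp", "gcn", "gin"],
    "report": ["gat", "gin", "sage"],
}


def toy_fitness(arch):
    """Stand-in for training and validating the assembled network.

    Rewards one arbitrary target operator per modality, plus a bonus that
    depends on two modalities at once, so joint evaluation matters.
    """
    target = {"text": "gat", "numeric": "gin", "report": "sage"}
    score = sum(arch[m] == target[m] for m in arch)
    if arch["text"] == "gat" and arch["numeric"] == "gin":
        score += 0.5  # cross-modality interaction term
    return score


def cooperative_coevolution(generations=20, pop_size=6, seed=0):
    rng = random.Random(seed)
    # Divide: one sub-population of components per modality.
    pops = {m: [rng.choice(ops) for _ in range(pop_size)]
            for m, ops in SEARCH_SPACE.items()}
    # Best known component per modality, used as collaborators.
    best = {m: pops[m][0] for m in pops}
    best_score = toy_fitness(best)

    for _ in range(generations):
        for m, ops in SEARCH_SPACE.items():
            # Evolve this modality's group independently (simple resampling).
            pops[m] = [rng.choice(ops) if rng.random() < 0.3 else c
                       for c in pops[m]]
            for candidate in pops[m]:
                # Coordinator: reassemble a full architecture from this
                # candidate and the best components of the other modalities,
                # then score the combination jointly.
                arch = dict(best, **{m: candidate})
                score = toy_fitness(arch)
                if score > best_score:
                    best, best_score = arch, score
    return best, best_score


best_arch, best_score = cooperative_coevolution()
```

The key design point is that a component is never scored in isolation: each candidate is judged only as part of a complete architecture, which is what lets the search pick up interactions between modalities.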

Efficiency Enhancements: Surrogate Models and Diversity Maintenance

To accelerate the search, MACC-MGNAS adds two further components: a surrogate model that cuts the number of full evaluations, and a diversity indicator that steers the search. Both aim to reduce computational cost while maintaining accuracy in multimodal graph network design.

Leveraging Surrogate Models for Faster Evaluation

The framework utilizes a ‘Modality-Aware Dual-Track Surrogate’ (MADTS) technique. This employs a surrogate model to estimate the performance of candidate architectures, substantially reducing the need for expensive full evaluations. As a result, computational resources are conserved, and the overall search time is significantly reduced.
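To make the idea of surrogate-assisted evaluation concrete, here is a minimal sketch of the general technique. It is not MADTS itself, whose dual-track design is not described here. It assumes architectures are encoded as fixed-length float vectors, uses a simple nearest-neighbor average as the surrogate, and treats `expensive_eval` as a placeholder for a full training run; all names are hypothetical.

```python
import random


def expensive_eval(arch):
    """Stand-in for a full train-and-validate run (assumed to be costly)."""
    return -sum((x - 0.7) ** 2 for x in arch)


def surrogate_predict(arch, archive, k=3):
    """Predict fitness as the mean score of the k nearest evaluated archs."""
    def dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    nearest = sorted(archive, key=lambda item: dist(arch, item[0]))[:k]
    return sum(score for _, score in nearest) / len(nearest)


def surrogate_assisted_step(candidates, archive, budget):
    """Rank candidates with the surrogate; fully evaluate only the top few."""
    ranked = sorted(candidates,
                    key=lambda a: surrogate_predict(a, archive),
                    reverse=True)
    for arch in ranked[:budget]:
        # Only these candidates pay the cost of a true evaluation.
        archive.append((arch, expensive_eval(arch)))
    return archive


rng = random.Random(0)
# Seed the archive with a handful of true evaluations.
seed_archs = [[rng.random() for _ in range(4)] for _ in range(5)]
archive = [(a, expensive_eval(a)) for a in seed_archs]

# 20 candidates are proposed, but only 4 are evaluated for real.
candidates = [[rng.random() for _ in range(4)] for _ in range(20)]
archive = surrogate_assisted_step(candidates, archive, budget=4)
```

In this toy run, 20 candidates cost only 4 full evaluations. The saving grows with the gap between the cost of a real training run and the cost of a surrogate prediction.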

Maintaining Diversity for Robust Solutions

Furthermore, MACC-MGNAS incorporates a ‘Similarity-Based Population Diversity Indicator’ (SPDI). This strategy dynamically balances exploration (trying new configurations) and exploitation (refining existing solutions), preventing premature convergence on suboptimal architectures. Consequently, the framework explores a wider range of potential designs to identify truly effective multimodal solutions.
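One way to picture a similarity-based diversity signal is the sketch below. It is an assumption-laden illustration rather than the paper's SPDI definition: architectures are encoded as lists of operator names, similarity is the fraction of matching positions, and the mutation-rate bounds `low` and `high` are arbitrary values chosen for the example.

```python
from itertools import combinations


def similarity(a, b):
    """Fraction of positions where two encoded architectures agree."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def population_diversity(population):
    """One minus the mean pairwise similarity (0 = identical, 1 = disjoint)."""
    pairs = list(combinations(population, 2))
    mean_sim = sum(similarity(a, b) for a, b in pairs) / len(pairs)
    return 1.0 - mean_sim


def adaptive_mutation_rate(diversity, low=0.05, high=0.5):
    """Mutate more when the population has collapsed, less when it is spread."""
    return high - (high - low) * diversity


# A population that has converged on a single design.
converged = [["gat", "gin", "sage"]] * 4
# A population whose members disagree at every position.
spread = [["gat", "gin", "sage"], ["gcn", "mlp", "gat"],
          ["sage", "gcn", "gin"], ["gin", "sage", "mlp"]]

rate_when_converged = adaptive_mutation_rate(population_diversity(converged))
rate_when_spread = adaptive_mutation_rate(population_diversity(spread))
```

A converged population yields the highest mutation rate, which pushes the search back toward exploration. A well-spread population yields the lowest, which favors refining what has already been found.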

Results and Impact: Demonstrating Superior Performance

In tests on the VulCE vulnerability dataset, MACC-MGNAS achieved an F1-score of 81.67%, a reported improvement of 8.7% over existing state-of-the-art methods. It reached this result in 3 GPU-hours while reducing computation costs by 27%. These results suggest the framework could streamline vulnerability analysis and, more broadly, serve as a practical tool for handling complex multimodal data.

