Neural networks are reshaping fields from image recognition to natural language processing. But how can we improve their performance and efficiency further? A research article published on Distill describes a surprising phenomenon: when a neural network layer is divided into multiple branches, the neurons tend to self-organize into coherent groupings. This article explores that phenomenon, known as branch specialization, and its implications for future network design.

Understanding Branch Specialization

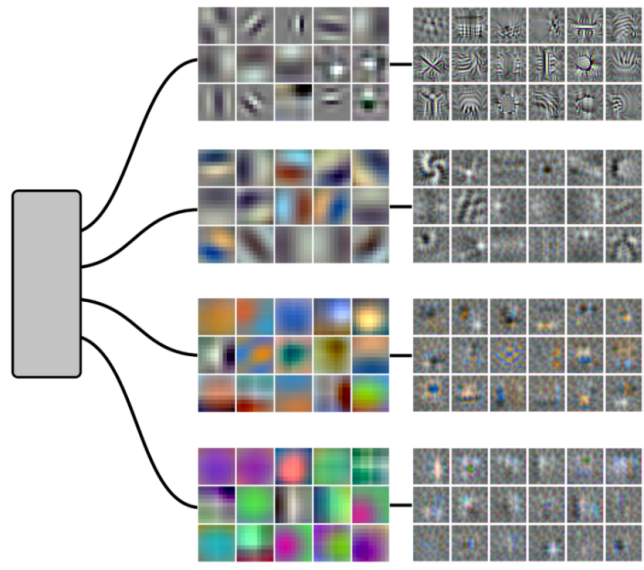

The core idea behind branch specialization is relatively simple: instead of a single layer processing all input data, the layer is split into multiple branches. Each branch receives a portion of the input and processes it independently. At initialization, the weights in every branch are random, so no branch has a predefined role. During training, however, a pattern emerges: neurons within each branch begin to specialize in responding to particular features or patterns in the data.

This specialization isn’t explicitly programmed; it arises as the network learns. Think of it like different departments within a company, each handling a particular area of expertise. The branches effectively form smaller networks within the larger one, allowing for more modular and potentially more efficient processing. This also gives network designers a new lever: the branching structure itself.
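The split described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the architecture studied in the Distill article: the function names (`make_branch`, `branched_layer`), the layer sizes, the ReLU activation, and the choice to give each branch an equal contiguous slice of the input are all assumptions made for the example.

```python
import random

def make_branch(n_in, n_out, rng):
    """Random weight matrix (n_out rows, n_in columns) for one branch."""
    return [[rng.gauss(0.0, 0.1) for _ in range(n_in)] for _ in range(n_out)]

def branched_layer(x, branches):
    """Apply each branch to its own slice of x and concatenate the outputs."""
    n_in = len(x) // len(branches)  # assumed: equal-sized input slices
    out = []
    for b, weights in enumerate(branches):
        chunk = x[b * n_in:(b + 1) * n_in]  # this branch's portion of the input
        for row in weights:
            pre = sum(w * v for w, v in zip(row, chunk))
            out.append(max(0.0, pre))  # ReLU (assumed activation)
    return out

rng = random.Random(0)
# Two branches, each mapping 4 inputs to 3 outputs (hypothetical sizes).
branches = [make_branch(4, 3, rng) for _ in range(2)]
x = [rng.random() for _ in range(8)]
y = branched_layer(x, branches)
```

Because no weight connects one branch's inputs to another branch's outputs, changing the second half of `x` leaves the first three entries of `y` untouched. That independence is what gives each branch room to develop its own role during training.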

How Branches Lead to Specialization

One likely factor driving this specialization is the interplay between competition and cooperation among neurons. Neurons within a branch compete to account for the features that branch receives, while the branches together cooperate to achieve the network's overall goal. Under this view, the neurons best suited to particular features end up responding to them, and related features tend to collect in the same branch.

Visualizing the Process

The Distill article provides compelling visualizations of this self-organization. The authors use techniques like dimensionality reduction (t-SNE) to project high-dimensional neuron activations into 2D space. These visual representations show clusters of neurons within each branch, indicating a high degree of coherence in their responses and supporting the claim that branches specialize.
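Coherence within a branch can also be checked numerically. The sketch below is a simpler stand-in for the visual approach described above, not t-SNE and not the Distill authors' method: it compares the mean absolute Pearson correlation of neuron pairs in the same branch against pairs in different branches. The activation values and the function names `pearson` and `coherence` are made up for illustration.

```python
import math
from itertools import combinations

def pearson(a, b):
    """Pearson correlation between two equal-length activation vectors."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)  # assumes neither vector is constant

def coherence(activations, branch_of):
    """Mean |correlation| for same-branch pairs and for cross-branch pairs."""
    within, across = [], []
    for i, j in combinations(range(len(activations)), 2):
        r = abs(pearson(activations[i], activations[j]))
        (within if branch_of[i] == branch_of[j] else across).append(r)
    return sum(within) / len(within), sum(across) / len(across)

# Toy data: rows are neurons, columns are inputs (made-up numbers).
activations = [
    [0.9, 0.1, 0.8, 0.2],  # branch 0
    [0.8, 0.2, 0.9, 0.1],  # branch 0
    [0.1, 0.1, 0.9, 0.9],  # branch 1
    [0.2, 0.0, 0.8, 1.0],  # branch 1
]
branch_of = [0, 0, 1, 1]
within, across = coherence(activations, branch_of)
```

On this toy data the within-branch value is much higher than the cross-branch value, which is the pattern one would expect if branches have specialized. On a real network, the activations would come from running many inputs through the trained model.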

The Benefits of Branching Out

So why is this self-organization beneficial? Several potential advantages stand out, and exploring them could open up new possibilities in deep learning research.

Efficiency Gains with Specialized Branches

Increased efficiency is a major potential benefit. Specialization reduces redundancy: neurons in different branches don’t need to learn the same things, which makes better use of parameters and computational resources. In turn, this may reduce training time and energy consumption.
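Part of the parameter saving is simple arithmetic. The sketch below assumes a fully connected layer whose inputs and outputs are divided evenly among the branches, with no connections between branches; the function names and the 256-unit sizes are hypothetical, and bias terms are ignored.

```python
def dense_params(n_in, n_out):
    """Weights in an ordinary fully connected layer (biases ignored)."""
    return n_in * n_out

def branched_params(n_in, n_out, n_branches):
    """Weights when inputs and outputs are split evenly across branches."""
    assert n_in % n_branches == 0 and n_out % n_branches == 0
    return n_branches * (n_in // n_branches) * (n_out // n_branches)

dense = dense_params(256, 256)           # 256 * 256 = 65536
branched = branched_params(256, 256, 2)  # 2 * 128 * 128 = 32768
```

Under these assumptions, splitting into G branches divides the weight count by G. Note that this saving comes from removing cross-branch connections; specialization is what may let the network keep its accuracy despite having fewer weights.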

Improving Interpretability Through Modular Design

The distinct roles of each branch can also make it easier to understand what the network is doing. Identifying which features trigger activity in a particular branch provides insight into that branch's function, which improves interpretability and allows for more targeted debugging. This makes branch specialization relevant to Explainable AI (XAI) initiatives.
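One simple way to ask which branch responds to a given input is to summarize activity per branch. This is a hedged sketch with made-up activation values; `branch_activity` is a hypothetical helper, and it assumes the layer's outputs are stored branch by branch in equal-sized blocks.

```python
def branch_activity(outputs, n_branches):
    """Mean activation per branch, assuming equal-sized contiguous blocks."""
    size = len(outputs) // n_branches
    return [sum(outputs[b * size:(b + 1) * size]) / size
            for b in range(n_branches)]

# Made-up activations for one input: units 0-2 are branch 0, units 3-5 are branch 1.
outputs = [0.0, 0.1, 0.0, 0.7, 0.9, 0.5]
means = branch_activity(outputs, 2)
most_active = max(range(len(means)), key=means.__getitem__)
```

Here `most_active` is `1`, meaning the second branch carries most of the response. Repeating this over many inputs and grouping them by the most active branch gives a rough picture of what each branch has specialized in.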

Robustness and Sparsity

If one branch fails or encounters noisy data, other branches may be able to compensate, making the network more robust. Additionally, branch specialization encourages sparsity: many neurons may remain inactive for a given input, which can reduce computational cost if inactive branches are skipped at inference time.
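Both ideas can be probed with small helpers. The sketch below uses made-up activations and hypothetical function names: `sparsity` measures the fraction of near-zero units for one input, and `ablate_branch` zeroes a whole branch so that the effect on downstream results can be measured. It again assumes outputs are laid out in equal-sized blocks per branch.

```python
def sparsity(outputs, eps=1e-6):
    """Fraction of units whose activation is (near) zero for this input."""
    return sum(1 for v in outputs if abs(v) <= eps) / len(outputs)

def ablate_branch(outputs, n_branches, branch):
    """Copy of outputs with every unit of one branch set to zero."""
    size = len(outputs) // n_branches
    return [0.0 if i // size == branch else v
            for i, v in enumerate(outputs)]

# Made-up activations: units 0-2 are branch 0, units 3-5 are branch 1.
outputs = [0.0, 0.1, 0.0, 0.7, 0.9, 0.5]
base = sparsity(outputs)
without_branch_0 = ablate_branch(outputs, 2, 0)
```

Feeding the ablated activations to the rest of a trained network and comparing accuracy is one way to test whether other branches really do compensate. The sketch itself makes no claim about the outcome.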

Future Directions & Applications

The findings on branch specialization open up exciting avenues for future research and may offer advantages over unbranched network architectures. Researchers are exploring how to actively guide this self-organization process, potentially leading to further performance gains. Some potential applications include:

Automated Network Design

Branching could be incorporated into automated design algorithms to create more efficient and specialized networks, which could streamline development and improve network performance.

Modular Deep Learning Architectures

Neural networks could be designed as collections of interacting branches, loosely analogous to how biological brains are organized. This modular approach may improve scalability and maintainability.

Explainable AI (XAI) Advancements

Branch specialization could be leveraged to improve the interpretability of complex models. Understanding which parts of the network activate for a given input allows for easier debugging and explanation, fostering trust and transparency in AI systems.

Conclusion

Branch specialization represents a fascinating and potentially transformative development in neural network design. By encouraging neurons to self-organize into coherent groupings, this technique offers the promise of improved efficiency, interpretability, and robustness. As research continues to explore its implications, we can expect to see exciting new applications emerge, pushing the boundaries of what’s possible with deep learning.

Source: Read the original article here.
