Online AI tools are getting ridiculously good, but as soon as you start using them, you’ll realize that their subscription costs quickly add up. The free tiers are often usage-limited and frequently restricted to less capable models. Fortunately, local AI models offer a compelling alternative.

The Rise of Local AI Models

For a while, cloud-based AI services like OpenAI’s ChatGPT were essentially the only viable option for most users. However, the landscape has shifted dramatically with the advent of local AI models: models that run directly on your computer, eliminating the need to connect to external servers.

What Are Local AI Models?

Local AI models are essentially downloadable files containing the pre-trained weights of a machine learning model. The versions meant for local use are typically smaller than their cloud-based counterparts and are designed to run on consumer hardware. Popular examples include Llama 2 and Mistral, available through tools like Ollama and LM Studio. Because everything runs on your own machine, you are not dependent on any single provider’s servers.
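To get a rough feel for whether a model will fit on consumer hardware, the size of its weights can be estimated from the parameter count and the number of bits stored per parameter. The sketch below is a back-of-the-envelope approximation only: the function name and the example figures (a 7-billion-parameter model stored at 16 bits and at 4 bits per weight) are illustrative assumptions, and real model files carry extra metadata and need additional memory at run time.

```python
def estimate_weights_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_param / 8  # 8 bits per byte
    return bytes_total / 1e9


# Hypothetical example: a 7-billion-parameter model.
full_precision = estimate_weights_gb(7e9, 16)  # weights stored at 16 bits each
reduced = estimate_weights_gb(7e9, 4)          # weights stored at 4 bits each

print(f"16-bit: ~{full_precision:.1f} GB")
print(f"4-bit:  ~{reduced:.1f} GB")
```

Under these assumptions, storing weights at lower precision shrinks the file roughly in proportion to the bit width, which is one reason downloadable models can fit on an ordinary laptop.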

Why Choose Local?

- Cost Savings: No subscription fees! Once you download a model, you can keep using it for as long as you like under its license, which can add up to substantial savings over time.

- Privacy: Your data stays on your device. This is a huge advantage when working with sensitive information, or for anyone concerned about data security.

- Offline Access: Work with AI even without an internet connection, which is invaluable when traveling or in areas with unreliable connectivity.

- Customization: Some models allow fine-tuning, letting you adapt them to specific tasks, so local AI offers greater flexibility.

- Speed: Performance depends on your hardware, but local models can often feel faster because there is no round trip to a remote server.

Getting Started with Local AI

Setting up a local AI model is surprisingly straightforward now. Several user-friendly tools have emerged to simplify the process.

Recommended Tools

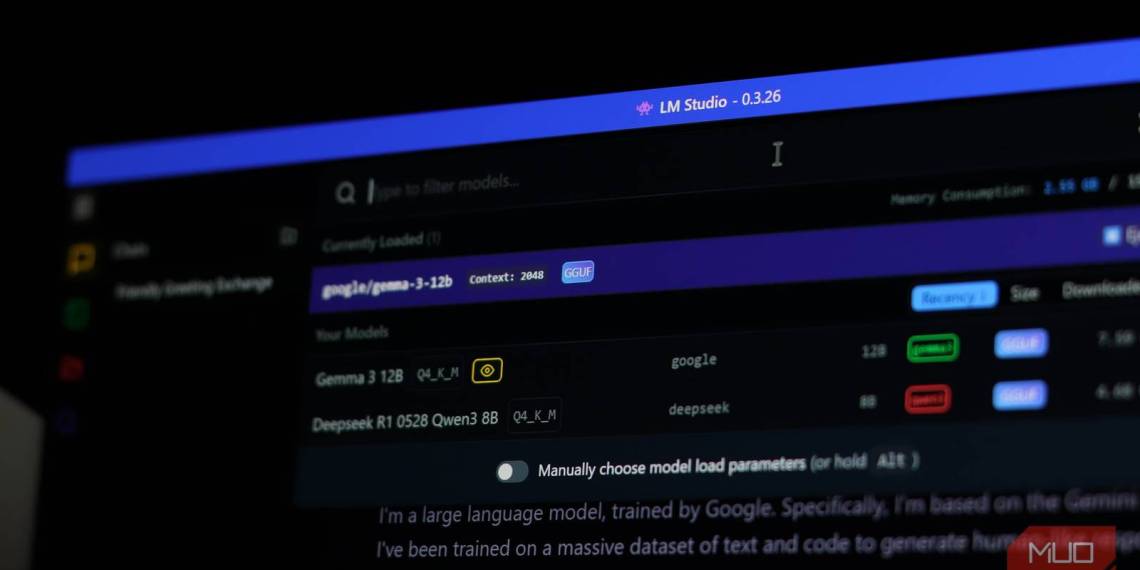

- Ollama: A simple command-line tool for downloading and running various models.

- LM Studio: Offers a graphical interface, making it accessible even for non-technical users. It handles model downloads, configuration, and execution, so it’s an excellent choice for beginners.

- GPT4All: Another easy-to-use option with a focus on privacy and offline capabilities.

The process generally involves downloading the tool of your choice, selecting a model from its library (often categorized by size and capability), and then running it. It’s a relatively simple process that democratizes access to AI.
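Once a tool is running, a model can also be queried from a script. The sketch below assumes Ollama is installed, its local server is listening on its default address (`http://localhost:11434`), and a model has already been downloaded. The model name `mistral` and the helper names `build_request` and `ask_local_model` are placeholders chosen for illustration. It uses only Python’s standard library to call Ollama’s `/api/generate` endpoint.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed unchanged).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

With the server running, calling `ask_local_model("mistral", "Explain local AI models in one sentence.")` should return the model’s reply as a string. The request goes to `localhost`, so the prompt never leaves your machine.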

Comparing Cloud vs. Local AI

While cloud-based AI still holds advantages in certain areas (access to cutting-edge models, massive computational power for training), the gap is rapidly closing. Here’s a quick comparison:

| Feature | Cloud AI | Local AI |
|---|---|---|
| Cost | Subscription fees | Free (after initial download) |
| Privacy | Data sent to external servers | Data stays on your device |
| Internet Required | Yes | No |
| Model Access | Access to the latest models (potentially) | Limited to available downloads, but constantly expanding |

The Future is Local

The rise of local AI marks a significant shift in how we interact with artificial intelligence. The combination of improved hardware and increasingly accessible models means that powerful AI capabilities are now within reach for anyone, without the recurring costs or privacy concerns associated with cloud-based services. I’ve personally stopped paying for online AI tools because the quality and accessibility of local solutions have become too good to ignore.

Source: Read the original article here.

Discover more tech insights on ByteTrending.
