Organizations are increasingly seeking a competitive edge through rapid deployment and integration of generative AI models. However, the proliferation of foundation models (FMs), each with unique specifications and operational needs, creates significant challenges. A Generative AI Gateway architecture addresses this by providing a unified interface, abstracting away these differences behind a consistent API. This allows for streamlined access to multiple FMs without building and maintaining separate integrations.

Recently, the AWS Generative AI Innovation Center and Quora collaborated on a unified wrapper API framework that accelerates the deployment of Amazon Bedrock FMs on Quora’s Poe system. This approach delivers a “build once, deploy multiple models” capability, substantially reducing deployment time and engineering effort. The protocol-bridging code is explicit and visible throughout the resulting codebase rather than hidden behind opaque layers.

For technology leaders and developers running multi-model AI deployments at scale, this framework demonstrates how thoughtful abstraction and protocol translation can accelerate innovation cycles while maintaining operational control. Routing model access through Amazon Bedrock behind a single wrapper allows faster iteration and more flexibility in choosing which generative AI models to use.

Understanding Quora’s Poe & Amazon Bedrock

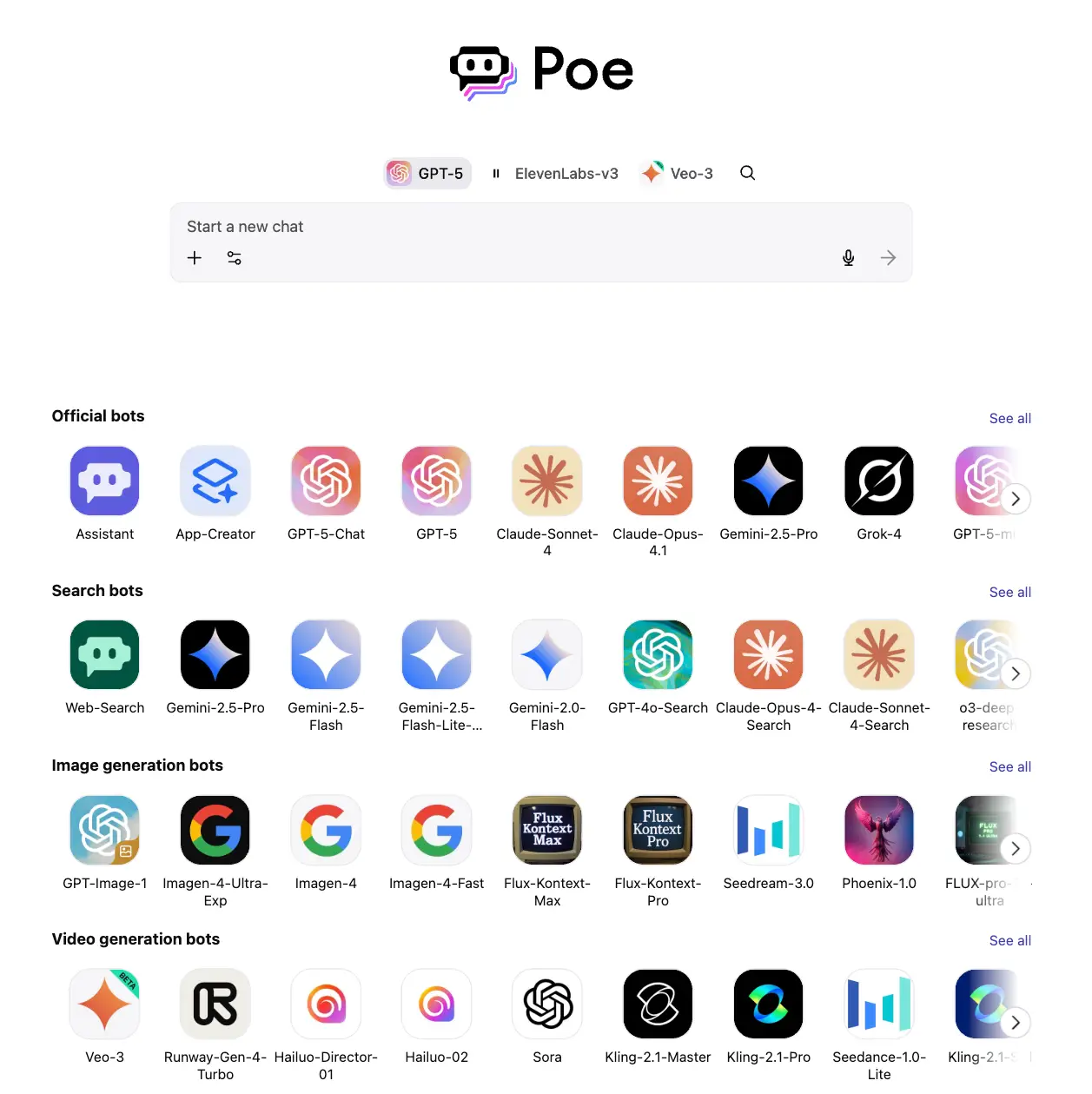

Poe is an AI system developed by Quora that provides users and developers access to a wide range of advanced AI models and assistants from various providers. Notably, it offers multi-model access, enabling side-by-side conversations with different chatbots for tasks like natural language understanding and content generation. Poe’s strength lies in its ability to unify the experience across diverse foundational technologies.

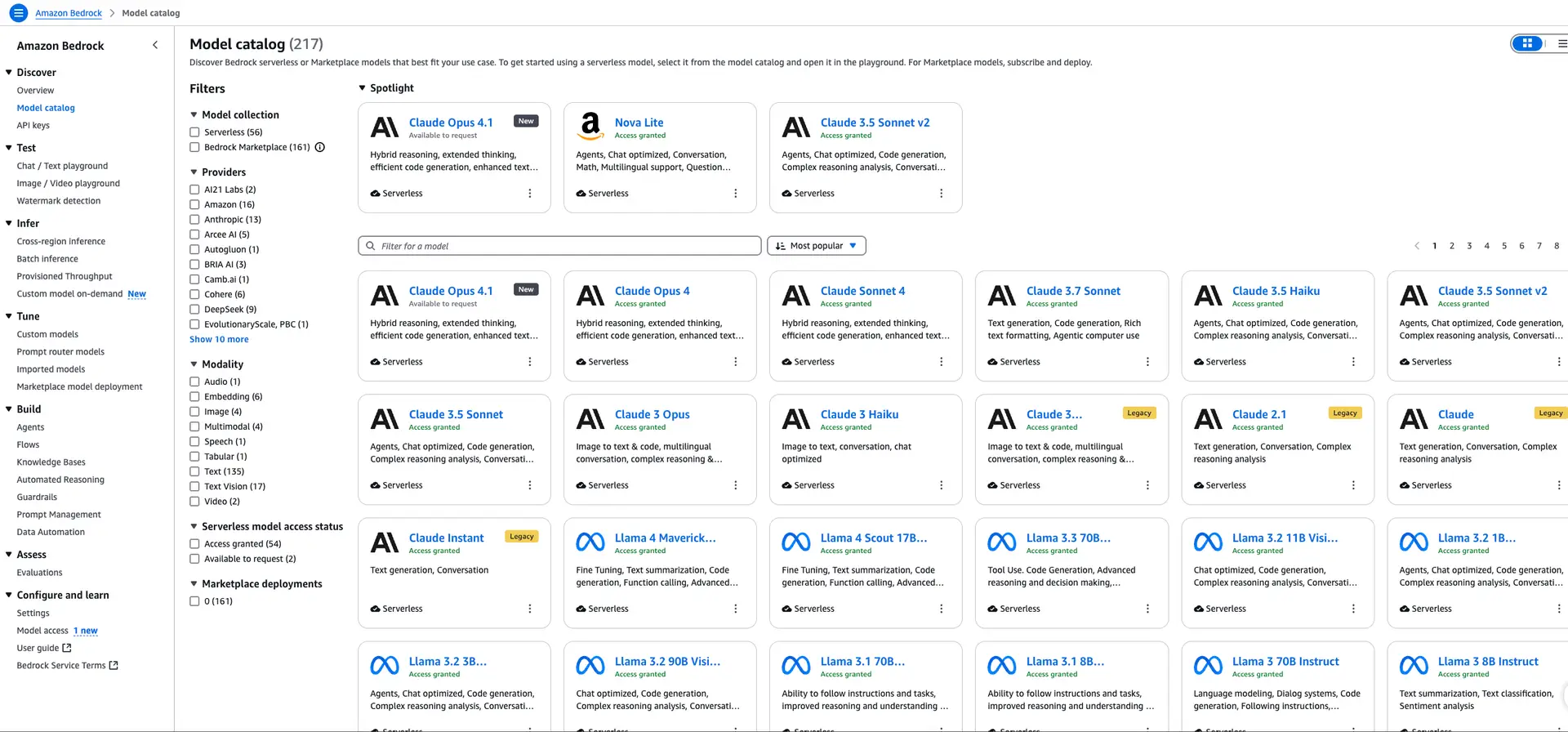

Amazon Bedrock, for its part, is a fully managed service from Amazon Web Services (AWS) that offers access to a diverse range of foundation models. Its catalog acts as a central hub where developers can discover, evaluate, and integrate state-of-the-art models from various providers. Integrating the two makes Bedrock’s models reachable through Poe’s existing multi-model interface.

Key Differences in Communication Protocols

One significant challenge was bridging Poe’s event-driven Server-Sent Events (SSE) protocol with Bedrock’s REST-based APIs. A protocol translation layer addresses this: it turns incoming Poe requests into HTTP calls that Bedrock understands and relays Bedrock’s responses back to Poe as Server-Sent Events. The system also uses template-based configurations to simplify model deployments.
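To make the translation step concrete, here is a minimal sketch of the response half of such a bridge. It is not the code from the Quora and AWS framework. The chunk shape (`contentBlockDelta`, `messageStop`) follows the streaming events of the Bedrock Converse API, and the `text` and `done` event names follow Poe's published bot protocol. The helper names `sse_event` and `bedrock_stream_to_sse` are hypothetical, and a hard-coded list stands in for a live Bedrock stream.

```python
import json
from typing import Iterable, Iterator


def sse_event(event: str, data: dict) -> str:
    """Format one SSE frame: event name, JSON payload, then a blank line."""
    return f"event: {event}\ndata: {json.dumps(data)}\n\n"


def bedrock_stream_to_sse(chunks: Iterable[dict]) -> Iterator[str]:
    """Translate Bedrock-style streaming chunks into SSE frames.

    Chunks without a text delta (for example the final stop marker)
    are skipped; a single `done` frame is sent when the stream ends.
    """
    for chunk in chunks:
        delta = chunk.get("contentBlockDelta", {}).get("delta", {})
        if "text" in delta:
            yield sse_event("text", {"text": delta["text"]})
    yield sse_event("done", {})


# Stand-in for a streamed model response (assumption: no real API call here).
fake_stream = [
    {"contentBlockDelta": {"delta": {"text": "Hello"}}},
    {"contentBlockDelta": {"delta": {"text": ", world"}}},
    {"messageStop": {"stopReason": "end_turn"}},
]

frames = list(bedrock_stream_to_sse(fake_stream))
for frame in frames:
    print(frame, end="")
```

Keeping the translation in a generator means frames can be forwarded to the client as each chunk arrives, which is the point of bridging a streaming protocol instead of buffering the whole reply.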

The Power of Template-Based Configuration

The implementation uses a template-based configuration system, so adding a model is mostly a matter of supplying configuration rather than writing new integration code. As a result, the time to integrate a new Bedrock model dropped from days to just 15 minutes. This efficiency allows for faster experimentation and quicker adaptation as new models are released.
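A template-based setup of this kind might look like the sketch below. Everything in it is illustrative: the `BotTemplate` fields, the bot names, and the model IDs are hypothetical placeholders, not the framework's actual schema. The only detail taken from Bedrock is the `maxTokens` and `temperature` keys, which match the `inferenceConfig` shape of the Converse API.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class BotTemplate:
    """Shared defaults for a bot; each model overrides only what differs."""
    model_id: str
    bot_name: str
    max_tokens: int = 1024
    temperature: float = 0.7
    supports_images: bool = False


# Base template with placeholder identity fields (hypothetical).
TEXT_CHAT = BotTemplate(model_id="", bot_name="")

# Adding a model is one entry here, not a new integration.
BOTS = {
    bot.bot_name: bot
    for bot in [
        replace(TEXT_CHAT, model_id="example.text-model-v1",
                bot_name="ExampleTextBot"),
        replace(TEXT_CHAT, model_id="example.vision-model-v1",
                bot_name="ExampleVisionBot",
                supports_images=True, max_tokens=2048),
    ]
}


def inference_config(bot_name: str) -> dict:
    """Build the per-request inference settings from a bot's template."""
    bot = BOTS[bot_name]
    return {"maxTokens": bot.max_tokens, "temperature": bot.temperature}


print(inference_config("ExampleVisionBot"))
```

Freezing the dataclass and deriving entries with `replace` keeps the defaults in one place, so a change to the base template propagates to every bot that did not override that field.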

Delving into Implementation Patterns & Benefits

Beyond the core architecture, several implementation patterns emerged as crucial for success. For instance, centralized error handling ensures consistent error messages and simplifies debugging across different Bedrock FMs. In addition, the framework seamlessly supports multi-modal capabilities, enabling Poe to process text, images, and videos.
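Centralized error handling can be sketched as a single wrapper that every model call passes through. The example below is an assumption-laden illustration, not the framework's code: `UpstreamError` is a hypothetical stand-in for the SDK exception (with boto3, the code would come from `ClientError.response["Error"]["Code"]`), and the user-facing messages are invented. The error code names are ones the Bedrock runtime does return.

```python
from typing import Callable


class UpstreamError(Exception):
    """Hypothetical stand-in for an SDK error that carries a service error code."""

    def __init__(self, code: str, message: str = ""):
        super().__init__(message or code)
        self.code = code


# One table of user-facing messages shared by every model (wording is illustrative).
USER_MESSAGES = {
    "ThrottlingException": "The model is busy. Please retry in a moment.",
    "ValidationException": "The request was not valid for this model.",
    "AccessDeniedException": "This model is not enabled for the account.",
}
DEFAULT_MESSAGE = "Something went wrong while contacting the model."
RETRYABLE = {"ThrottlingException"}


def call_with_error_handling(invoke: Callable[[], str]) -> dict:
    """Run a model call and convert any upstream failure into one uniform shape."""
    try:
        return {"ok": True, "text": invoke()}
    except UpstreamError as err:
        return {
            "ok": False,
            "error": USER_MESSAGES.get(err.code, DEFAULT_MESSAGE),
            "retryable": err.code in RETRYABLE,
        }


def throttled() -> str:
    raise UpstreamError("ThrottlingException")


result = call_with_error_handling(throttled)
print(result)
```

Because the mapping lives in one place, a new model inherits the same messages and retry hints without any model-specific error code.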

The benefits are substantial. This unified approach fosters code reusability, significantly reduces maintenance overhead, and lets developers adapt quickly to new releases of Bedrock models. As a result, the “build once, deploy multiple models” approach allows Quora’s Poe system to add Bedrock models at scale without building a separate integration for each one.

Conclusion

The collaboration between the AWS Generative AI Innovation Center and Quora demonstrates a pragmatic approach to managing complexity in multi-model deployments. By abstracting away protocol differences and employing template-based configurations, they’ve created a flexible and efficient architecture that accelerates innovation. This framework serves as a valuable blueprint for organizations aiming to harness the full power of generative AI while maintaining operational control and reducing engineering burden.

Source: Read the original article here.
