Revolutionizing LLM Inference with Speculative Cascades

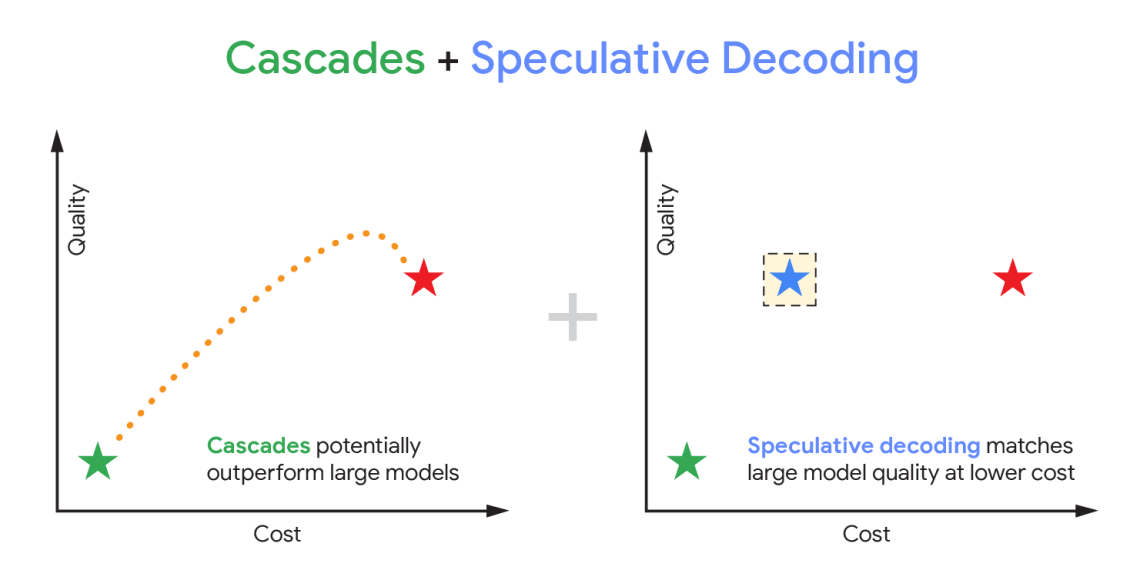

Large Language Models (LLMs) are rapidly transforming numerous applications, but their computational demands present a significant bottleneck for wider adoption. Google Research has introduced “Speculative Cascades,” a novel hybrid decoding technique designed to drastically reduce latency and improve throughput without compromising quality. This innovative approach combines speculative decoding with a cascaded architecture, marking a substantial step towards more efficient LLM inference and making these powerful models more accessible.

Understanding the Challenge: Inference Latency

The primary challenge lies in the sequential nature of text generation; each token generated by an LLM depends on all preceding tokens, necessitating a step-by-step processing approach. Consequently, this creates considerable latency, especially when dealing with longer sequences and complex prompts. Traditionally, methods such as quantization and pruning have offered some improvements but often at the expense of accuracy. Speculative decoding presents a promising alternative; however, it also introduces its own unique challenges.
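The sequential dependency described above can be sketched in a few lines. This is a minimal illustration, not any real model's API: `toy_model` and `generate` are hypothetical names, and the "model" is a simple arithmetic rule standing in for an LLM forward pass.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-in for an LLM: maps the token context to the next token.
NextTokenFn = Callable[[List[int]], int]


def toy_model(context: List[int]) -> int:
    """Toy 'model': the next token is the sum of the context modulo 10."""
    return sum(context) % 10


def generate(model: NextTokenFn, prompt: List[int], n_new: int) -> Tuple[List[int], int]:
    """Generate n_new tokens one at a time and count the model calls."""
    tokens = list(prompt)
    calls = 0
    for _ in range(n_new):
        # Each new token needs the full context so far, so the steps
        # cannot run in parallel: one model call per generated token.
        tokens.append(model(tokens))
        calls += 1
    return tokens, calls


tokens, calls = generate(toy_model, [1, 2], 4)
print(tokens, calls)  # [1, 2, 3, 6, 2, 4] 4
```

The number of model calls equals the number of generated tokens, which is why latency grows with output length.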

How Speculative Cascades Works: A Two-Tiered System

Speculative Cascades employs a two-tiered system to address these issues. The first tier, referred to as the “speculator,” is a smaller, faster model that rapidly drafts k candidate next tokens based on the current context. These drafts are then passed to the second tier, the “verifier,” which is the full LLM itself. The verifier scores the drafted tokens and keeps the ones it agrees with; every accepted token is one that the large model did not have to produce in a sequential step of its own, which saves substantial time. The savings add up over longer outputs, where many drafted tokens can be accepted in a row.

- Speculation Phase: The smaller “speculator” model swiftly generates k candidate tokens.

- Verification Phase: The larger LLM (“verifier”) assesses the likelihood of each speculative token.

- Cascaded Evaluation: When the verifier agrees with a speculation, inference proceeds quickly; conversely, disagreements trigger a full forward pass through the large model for that specific token.
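The three phases above can be sketched as a draft-then-verify loop. This is a minimal sketch of standard speculative decoding with greedy (exact-match) verification, not Google's actual Speculative Cascades rule, whose details the article does not give. All names (`toy_verifier`, `toy_speculator`, `speculative_generate`) are hypothetical, and both "models" are toy arithmetic functions.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-in for a language model: context tokens -> next token.
NextTokenFn = Callable[[List[int]], int]


def toy_verifier(context: List[int]) -> int:
    """Toy 'large model': the next token is the context sum modulo 10."""
    return sum(context) % 10


def toy_speculator(context: List[int]) -> int:
    """Toy 'small model': matches the verifier except when the context
    length is a multiple of 4, where it is deliberately off by one."""
    guess = sum(context) % 10
    return (guess + 1) % 10 if len(context) % 4 == 0 else guess


def speculative_generate(
    speculator: NextTokenFn,
    verifier: NextTokenFn,
    prompt: List[int],
    n_new: int,
    k: int,
) -> Tuple[List[int], int]:
    """Return the generated tokens and the number of verification rounds."""
    tokens = list(prompt)
    target_len = len(prompt) + n_new
    rounds = 0
    while len(tokens) < target_len:
        # Speculation phase: the small model drafts k tokens sequentially.
        draft: List[int] = []
        for _ in range(k):
            draft.append(speculator(tokens + draft))

        # Verification phase: a real system would score all k positions in
        # one batched forward pass; this toy calls the verifier in a loop.
        rounds += 1
        accepted: List[int] = []
        for tok in draft:
            expected = verifier(tokens + accepted)
            if tok != expected:
                # Disagreement: keep the verifier's token, drop the rest.
                accepted.append(expected)
                break
            accepted.append(tok)
        else:
            # Every draft was accepted, so the verifier adds one more token.
            accepted.append(verifier(tokens + accepted))
        tokens.extend(accepted)
    return tokens[:target_len], rounds


out, rounds = speculative_generate(toy_speculator, toy_verifier, [1, 2], 8, 4)
print(out, rounds)  # [1, 2, 3, 6, 2, 4, 8, 6, 2, 4] 3
```

In this toy run the output is identical to what the verifier would produce on its own, but it takes 3 verification rounds instead of 8 sequential steps. With exact-match verification the result never differs from the verifier's greedy output; only the number of rounds depends on how often the speculator is right.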

A key innovation within Speculative Cascades is its “cascading” aspect. The verifier’s confidence score dynamically guides subsequent speculative rounds; higher confidence allows for larger batches of speculative tokens to be processed concurrently, which further boosts throughput and overall efficiency in LLM operation.
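One way to picture this confidence-guided behavior is a small policy that widens or narrows the next speculative batch. The sketch below is an assumption made for illustration only: the function name, the doubling and halving rule, and the thresholds 0.8 and 0.5 are hypothetical and do not come from the Google Research work.

```python
def next_draft_length(
    current_k: int,
    confidence: float,
    *,
    k_min: int = 1,
    k_max: int = 16,
    raise_above: float = 0.8,   # hypothetical threshold
    lower_below: float = 0.5,   # hypothetical threshold
) -> int:
    """Pick how many tokens to speculate in the next round.

    `confidence` is assumed to be a verifier score in [0, 1], for example
    the fraction of drafted tokens accepted in the previous round.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if confidence >= raise_above:
        # High confidence: speculate further ahead, up to the cap.
        return min(current_k * 2, k_max)
    if confidence < lower_below:
        # Low confidence: shrink the batch so less draft work is wasted.
        return max(current_k // 2, k_min)
    return current_k


print(next_draft_length(4, 0.9))  # 8
print(next_draft_length(4, 0.3))  # 2
print(next_draft_length(4, 0.6))  # 4
```

Capping the draft length at `k_max` bounds the work that is thrown away when a long speculation fails early.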

Key Benefits and Results

- Reduced Latency: Speculative Cascades reduces inference latency by up to 3x compared to standard decoding methods.

- Increased Throughput: The increased speed translates directly into higher throughput (the ability to process more requests per unit of time), making the technique well suited to high-demand applications.

- Minimal Quality Impact: Importantly, the approach maintains comparable output quality to traditional decoding techniques, minimizing any potential trade-offs in accuracy when accelerating LLM inference.

Google’s research team rigorously tested Speculative Cascades on various LLMs, including PaLM 2, and observed consistent performance gains across different model sizes. The technique proves particularly effective for models with a large number of parameters, where the benefits of speculative decoding are amplified.
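To build intuition for where gains of this kind come from, the sketch below uses the textbook estimate for standard speculative decoding rather than any figure from Google's evaluation. It assumes each drafted token is accepted independently with the same probability, which real workloads only approximate; `expected_tokens_per_round` is a hypothetical helper name.

```python
def expected_tokens_per_round(acceptance_rate: float, k: int) -> float:
    """Expected tokens produced per verifier pass with k drafted tokens.

    Assumes every draft is accepted independently with probability
    `acceptance_rate`, and that the verifier always contributes one token
    per round (a correction after a rejection, or an extra token when all
    drafts are accepted). This gives (1 - a**(k + 1)) / (1 - a).
    """
    if not 0.0 <= acceptance_rate <= 1.0:
        raise ValueError("acceptance_rate must be in [0, 1]")
    if k < 0:
        raise ValueError("k must be non-negative")
    if acceptance_rate == 1.0:
        return float(k + 1)
    return (1.0 - acceptance_rate ** (k + 1)) / (1.0 - acceptance_rate)


print(round(expected_tokens_per_round(0.8, 4), 4))  # 3.3616
```

This counts tokens per large-model pass, not wall-clock speedup: the time spent drafting with the smaller model reduces the net gain, so measured improvements are lower than this number.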

Future Directions & Implications

The development of Speculative Cascades represents a substantial advancement in LLM inference optimization. Future research will likely concentrate on several key areas:

- Improving Speculator Accuracy: Efforts will continue to enhance the smaller model’s predictive capabilities to reduce verification failures and maximize efficiency in LLM operations.

- Dynamic Batching: Adapting batch sizes dynamically, based on verifier confidence, is essential for achieving optimal performance across a wide spectrum of workloads involving large language models.

- Hardware Acceleration: Designing specialized hardware architectures tailored to exploit the inherently parallel nature of speculative decoding could unlock even greater performance gains.

This hybrid approach promises to unlock new possibilities for deploying LLMs in latency-sensitive applications, such as real-time chatbots and interactive AI assistants.

Conclusion

Speculative Cascades offers a compelling solution to the increasingly pressing challenge of LLM inference latency. By combining speculative decoding with cascaded verification, Google Research has paved the way for faster, more efficient, and ultimately more accessible generative AI experiences. This technique is poised to have a significant impact on how we interact with and deploy large language models in the future, accelerating innovation across numerous industries.

