For many of us, artificial intelligence (AI) has become part of everyday life, and the rate at which we hand previously human roles to AI systems shows no sign of slowing. AI systems are crucial ingredients in technologies such as self-driving cars, smart urban planning, and digital assistants, and they are spreading to a growing number of domains. At their core are autonomous agents: systems designed to act on behalf of humans and make decisions without direct supervision. To act effectively in the real world, these agents must carry out a wide range of tasks under potentially unpredictable conditions, which often requires machine learning (ML) to achieve adaptive behavior.

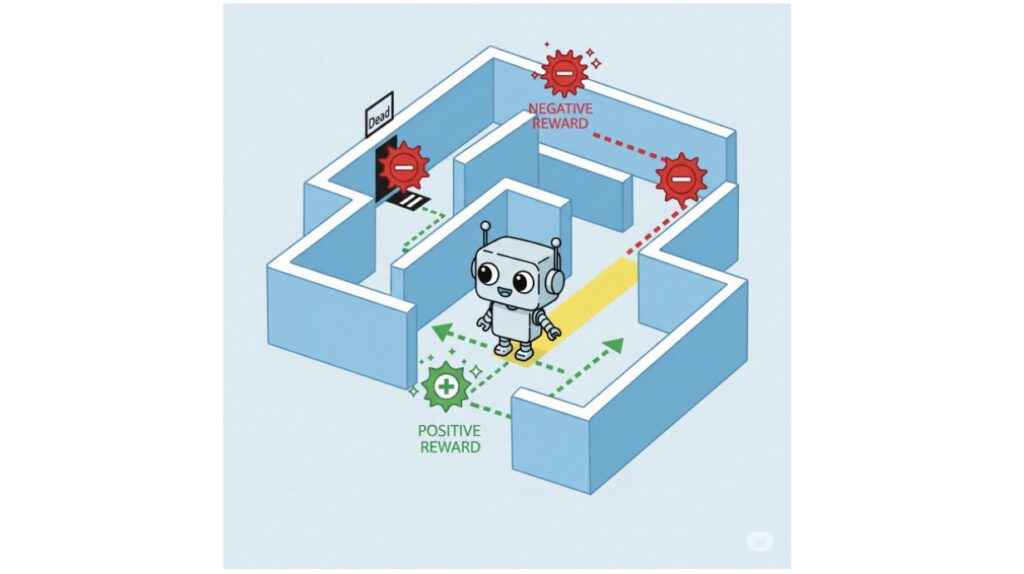

Reinforcement learning (RL) is a powerful ML technique for training agents to behave optimally in stochastic environments. RL agents learn by interacting with their environment: every action yields a reward or penalty that depends on the situation in which it was taken. Over time, the agent learns behavior that maximizes the expected cumulative reward it collects.
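To make this learning loop concrete, here is a minimal sketch of tabular Q-learning, one classic RL algorithm. The article does not say which algorithm it has in mind, so treat this as an illustration, not the method behind the systems it describes. The corridor environment, its rewards, the helper names (`step`, `best_action`, `q_learning`), and the hyperparameters are all hypothetical. For brevity the environment is deterministic, although Q-learning is designed to handle stochastic transitions too.

```python
import random

# Hypothetical toy environment: a corridor of 5 cells. The agent starts in
# cell 0; reaching cell 4 pays +1 and ends the episode, every other step costs -0.01.
N_STATES = 5
ACTIONS = (-1, +1)  # move left, move right


def step(state, action):
    """Apply an action and return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    if next_state == N_STATES - 1:
        return next_state, 1.0, True
    return next_state, -0.01, False


def best_action(q, s):
    """Index of the highest-valued action in state s (ties go to the first action)."""
    return max(range(len(ACTIONS)), key=lambda i: q[s][i])


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    # q[s][i] estimates the expected discounted return of taking ACTIONS[i] in state s.
    q = [[0.0] * len(ACTIONS) for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: usually exploit the current estimates, occasionally explore.
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = best_action(q, state)
            next_state, reward, done = step(state, ACTIONS[a])
            # Target: immediate reward plus the discounted value of the best next action.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q = q_learning()
# Greedy policy for the non-terminal cells 0..3.
policy = [ACTIONS[best_action(q, s)] for s in range(N_STATES - 1)]
print(policy)  # [1, 1, 1, 1]: always move right toward the goal
```

The discount factor `gamma` is what turns "maximize reward over time" into a well-defined target: the agent maximizes the expected sum of rewards, with each later reward weighted by a further power of `gamma`.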

RL agents can master complex tasks, from winning video games to controlling cyber-physical systems such as self-driving cars, often surpassing human performance. Left unconstrained, however, this efficient behavior can be off-putting or even dangerous. This motivates research into safe RL: techniques that ensure agents meet specific safety requirements. Such requirements are often expressed in formal languages like linear temporal logic (LTL), which extends classical logic with temporal operators and so lets us specify conditions such as “something must always hold”. By combining the adaptability of ML with the precision of logic, researchers have developed powerful methods for training agents to act both effectively and safely.
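As a rough illustration of how an LTL requirement can constrain an agent, the sketch below monitors the simplest “always” property, G(p), on a finite trace, and applies a one-step *shield* that removes actions predicted to break it. Shielding is one known safe-RL technique, but this toy version (a fixed, known action model and a single-step lookahead) is an assumption made for illustration, not the approach of the research the article describes. The 2 m threshold, the action names, and the helper functions are all hypothetical.

```python
from typing import Callable, Dict, Iterable, List, Optional


def first_violation(trace: Iterable[float], invariant: Callable[[float], bool]) -> Optional[int]:
    """Monitor the LTL safety property G(invariant): 'invariant always holds'.

    Returns the index of the first state that breaks the invariant, or None.
    LTL is defined over infinite executions, so None on a finite trace only
    means "no violation observed so far".
    """
    for i, state in enumerate(trace):
        if not invariant(state):
            return i
    return None


def shielded_actions(state: float, effects: Dict[str, float],
                     invariant: Callable[[float], bool]) -> List[str]:
    """One-step shield: keep only actions whose predicted next state satisfies the invariant."""
    return [name for name, delta in effects.items() if invariant(state + delta)]


# Hypothetical requirement: always keep a gap of at least 2 m to the car ahead.
def safe_gap(gap_m: float) -> bool:
    return gap_m >= 2.0


print(first_violation([10.0, 6.0, 3.0, 1.5, 4.0], safe_gap))  # 3
# Hypothetical action model: each action changes the gap by a fixed amount (in meters).
print(shielded_actions(3.0, {"keep_speed": -2.0, "brake": -0.5}, safe_gap))  # ['brake']
```

Safety properties like G(p) suit this kind of runtime check because any violation shows up after finitely many steps, so a monitor can flag it, and a shield can block it, without reasoning about the infinite future.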

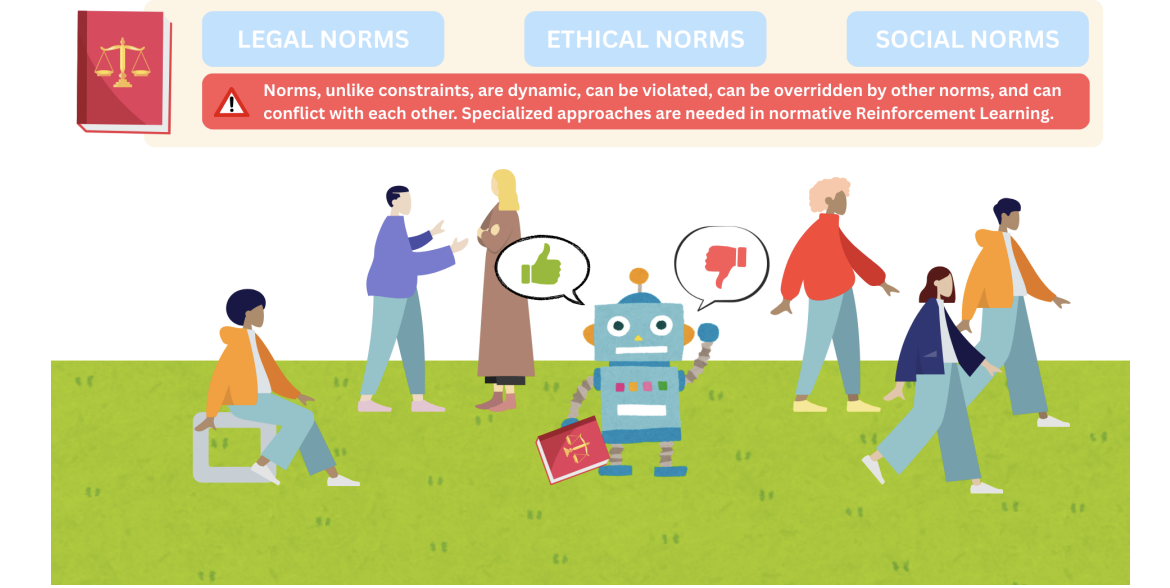

Safety, however, isn’t everything. As RL-based agents increasingly replace or interact with humans, a new challenge arises: ensuring that their behavior complies with the social, legal, and ethical norms that structure human society. For example, a self-driving car might satisfy every safety constraint (it never collides) yet behave in ways that violate social norms, appearing bizarre or rude on the road and potentially causing other drivers to react unsafely.

Norms are expressed as obligations (“you must do it”), permissions (“you are permitted to do it”), and prohibitions (“you are forbidden from doing it”). These are not statements that are simply true or false; they are deontic concepts describing what is right, wrong, or permissible, that is, ideal behavior rather than what actually happens. This distinction makes reasoning about norms considerably more complex.
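One simple way to see why norms differ from true/false statements is to encode them as data and ask only whether a given behavior complies with them. The sketch below is a hypothetical encoding (the `Norm` class, the `violations` function, and the traffic norms are invented for illustration): obligations are violated by omission, prohibitions by commission, and a permission on its own cannot be violated. It deliberately ignores the harder dynamics the paragraph alludes to, such as norms that conflict with one another.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, List


class Modality(Enum):
    OBLIGATION = "must"
    PERMISSION = "may"
    PROHIBITION = "must not"


@dataclass(frozen=True)
class Norm:
    modality: Modality
    action: str


def violations(norms: List[Norm], performed: FrozenSet[str]) -> List[Norm]:
    """Return the norms that the observed behavior violates.

    The norms are never evaluated as true or false: they describe ideal
    behavior, and we only ask whether the actual behavior meets that ideal.
    """
    violated = []
    for norm in norms:
        if norm.modality is Modality.OBLIGATION and norm.action not in performed:
            violated.append(norm)  # required action was omitted
        elif norm.modality is Modality.PROHIBITION and norm.action in performed:
            violated.append(norm)  # forbidden action was performed
        # A permission allows an action without requiring it, so it is never violated here.
    return violated


# Hypothetical traffic norms, for illustration only.
NORMS = [
    Norm(Modality.OBLIGATION, "yield_to_pedestrian"),
    Norm(Modality.PERMISSION, "overtake_on_left"),
    Norm(Modality.PROHIBITION, "tailgate"),
]

observed = frozenset({"overtake_on_left", "tailgate"})
print([n.action for n in violations(NORMS, observed)])
# ['yield_to_pedestrian', 'tailgate']
```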

Source: Read the original article here.
