“How much will it cost to run our chatbot on Amazon Bedrock?” This is one of the most frequent questions we hear from customers exploring AI solutions. And it’s no wonder: calculating costs for AI applications can feel like navigating a complex maze of tokens, embeddings, and various pricing models. Whether you’re a solution architect, technical leader, or business decision-maker, understanding these costs is crucial for project planning and budgeting. In this post, we’ll look at Amazon Bedrock pricing through the lens of a practical, real-world example: building a customer service chatbot. We’ll break down the essential cost components, walk through capacity planning for a mid-sized call center implementation, and provide detailed pricing calculations across different foundation models. By the end of this post, you’ll have a clear framework for estimating your own Amazon Bedrock implementation costs and understanding the key factors that influence them.

For those who aren’t familiar, Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading artificial intelligence (AI) companies like AI21 Labs, Anthropic, Cohere, Meta, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI. This makes it a versatile platform for developing AI-powered chatbots. Let’s look at how Amazon Bedrock pricing works and what it means for the cost of running your chatbot assistant.

Amazon Bedrock provides a comprehensive toolkit for powering AI applications, including pre-trained large language models (LLMs), Retrieval Augmented Generation (RAG) capabilities, and seamless integration with existing knowledge bases. This powerful combination enables the creation of chatbots that can understand and respond to customer queries with high accuracy and contextual relevance. The core principle behind understanding these costs is recognizing that they’re built around three primary factors: input tokens, output tokens, and model selection. The number of tokens processed by your chatbot directly impacts cost, as LLMs are priced based on token usage.

Solution overview

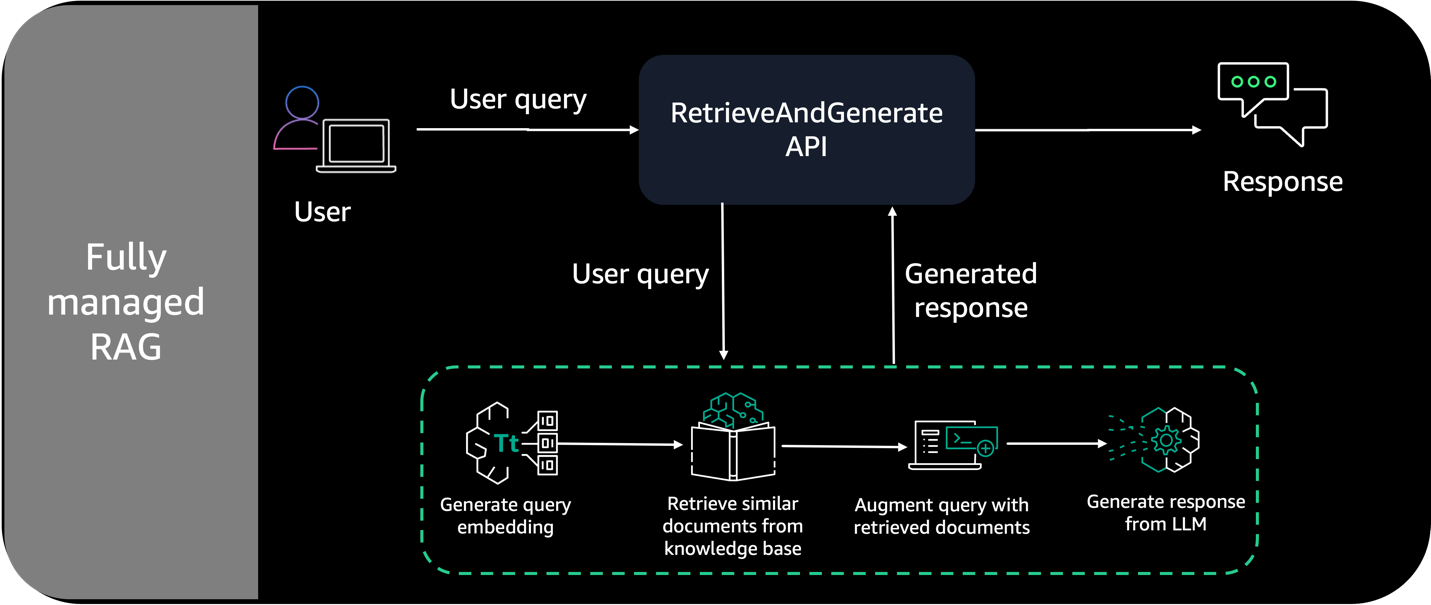

For this example, our Amazon Bedrock chatbot will use a curated set of data sources and use Retrieval-Augmented Generation (RAG) to retrieve relevant information in real time. With RAG, our output from the chatbot will be enriched with contextual information from our data sources, giving our users a better customer experience.

When understanding Amazon Bedrock pricing, it’s crucial to familiarize yourself with several key terms that significantly influence the expected cost. These components not only form the foundation of how your chatbot functions but also directly impact your pricing calculations. Let’s explore these key components.

Key Components

- Data Sources – The documents, manuals, FAQs, and other information artifacts that form your chatbot’s knowledge base.

- Retrieval-Augmented Generation (RAG) – The process of optimizing the output of a large language model by referencing an authoritative knowledge base outside of its training data sources before generating a response. RAG extends the already powerful capabilities of LLMs to specific domains or an organization’s internal knowledge base, without the need to retrain the model. It is a cost-effective approach to improving LLM output so it remains relevant, accurate, and useful in various contexts.

- Tokens – A sequence of characters that a model can interpret or predict as a single unit of meaning. For example, with text models, a token could correspond not just to a word, but also to a part of a word with grammatical meaning (such as “-ed”), a punctuation mark (such as “?”), or a common phrase.

Let’s consider a scenario where you’re building a customer service chatbot for an e-commerce company. This chatbot will need to access product information, order history, and FAQs to answer customer inquiries effectively. The RAG component will be essential here, allowing the chatbot to dynamically retrieve relevant details from your knowledge base based on the customer’s query. This ensures that the chatbot provides accurate and up-to-date information, enhancing the overall user experience. The pricing model for Amazon Bedrock is typically structured around two main components: per-token charges for input and output processing, and model-level costs for running the models, which vary by model and, if you reserve capacity with Provisioned Throughput, are billed by the hour rather than per token.

| Cost Component | Description |
|---|---|
| Input Tokens | The number of tokens in your prompts or queries sent to the model. |
| Output Tokens | The number of tokens generated by the model as a response. |
| Model Instance Cost | The cost of running the underlying foundation model, which varies depending on the model size and capabilities. |
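The token-based components in the table can be turned into a small per-request calculation. The following is a minimal sketch, not an official pricing formula: the `TokenPrice` class, the function names, and every number are hypothetical placeholders rather than actual Amazon Bedrock rates. It also assumes that, in a RAG setup, the retrieved context is sent to the model and therefore counts toward input tokens alongside the system prompt and the customer's query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Placeholder per-1,000-token prices in USD (NOT real Bedrock rates)."""
    input_per_1k: float
    output_per_1k: float


def rag_input_tokens(system_tokens: int, query_tokens: int,
                     retrieved_chunks: int, tokens_per_chunk: int) -> int:
    """Input tokens for one RAG request: system prompt + query + retrieved context."""
    return system_tokens + query_tokens + retrieved_chunks * tokens_per_chunk


def request_cost(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Token charges for a single request, in USD."""
    return (input_tokens / 1000) * price.input_per_1k \
        + (output_tokens / 1000) * price.output_per_1k


# Hypothetical values chosen only to make the arithmetic easy to follow.
price = TokenPrice(input_per_1k=0.001, output_per_1k=0.002)
tokens_in = rag_input_tokens(system_tokens=200, query_tokens=50,
                             retrieved_chunks=3, tokens_per_chunk=250)
cost = request_cost(tokens_in, output_tokens=200, price=price)
print(f"{tokens_in} input tokens, ${cost:.4f} per request")
```

In this sketch the three retrieved chunks account for 750 of the 1,000 input tokens, which suggests why the number and size of retrieved passages can matter as much as the length of the customer's question.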

To illustrate, let’s estimate the monthly costs for a chatbot with moderate usage. Assume an average daily chat volume of 1,000 conversations, each lasting 2 minutes (approximately 1,400 tokens of input and output). That works out to about 1.4 million tokens per day, or roughly 42 million tokens over a 30-day month. Using a mid-range foundation model, we can estimate the cost to be approximately $50 to $200 per month. This calculation is based on the token pricing structure and instance costs. However, it’s crucial to note that this is just an estimate, and the actual cost may vary depending on your specific use case and usage patterns. Continuous monitoring of your chatbot’s performance and costs is recommended to identify areas for optimization.
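The arithmetic behind this estimate can be sketched in a few lines. The conversation volume and tokens per conversation come from the scenario above; the 30-day month, the 75/25 split between input and output tokens, and the per-1,000-token prices are assumptions made purely for illustration and are not actual Amazon Bedrock rates. The last helper works backwards from the $50 to $200 range to show what blended price per 1,000 tokens it would imply if the whole estimate were token charges.

```python
CONVERSATIONS_PER_DAY = 1_000    # from the scenario
TOKENS_PER_CONVERSATION = 1_400  # from the scenario (input and output together)
DAYS_PER_MONTH = 30              # assumption


def monthly_tokens(conversations_per_day: int, tokens_per_conversation: int,
                   days: int = DAYS_PER_MONTH) -> int:
    """Total tokens processed in one month."""
    return conversations_per_day * tokens_per_conversation * days


def monthly_token_cost(total_tokens: int, input_share: float,
                       input_per_1k: float, output_per_1k: float) -> float:
    """Monthly token charges in USD for a given input/output split."""
    input_tokens = total_tokens * input_share
    output_tokens = total_tokens - input_tokens
    return (input_tokens / 1000) * input_per_1k \
        + (output_tokens / 1000) * output_per_1k


def implied_rate_per_1k(monthly_usd: float, total_tokens: int) -> float:
    """Blended USD price per 1,000 tokens implied by a monthly bill."""
    return monthly_usd / (total_tokens / 1000)


total = monthly_tokens(CONVERSATIONS_PER_DAY, TOKENS_PER_CONVERSATION)

# Placeholder split and prices (hypothetical, for illustration only).
estimate = monthly_token_cost(total, input_share=0.75,
                              input_per_1k=0.001, output_per_1k=0.003)

low_rate = implied_rate_per_1k(50, total)
high_rate = implied_rate_per_1k(200, total)

print(f"{total:,} tokens per month")
print(f"Estimated token charges: ${estimate:.2f}")
print(f"Implied blended rate: ${low_rate:.5f} to ${high_rate:.5f} per 1K tokens")
```

With these placeholder inputs the sketch yields 42,000,000 tokens and about $63 per month. Swapping in the published rates for the model you are evaluating, and your own measured input/output split, gives a figure you can compare against the range quoted above.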

Furthermore, Amazon Bedrock offers various foundation models with different capabilities and pricing tiers. For example, a smaller, more efficient model might be sufficient for simple customer service inquiries, while a larger model could handle more complex tasks like product recommendations or generating marketing copy. Careful selection of the appropriate model is crucial for optimizing costs.
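One way to reason about model selection is to estimate a blended bill when simple inquiries go to a smaller model and only the harder ones go to a larger one. The sketch below assumes two hypothetical tiers with made-up blended prices per 1,000 tokens, and an assumed monthly volume of 42 million tokens; none of these are real Amazon Bedrock rates, and how you decide which requests count as "simple" is left out entirely.

```python
def blended_monthly_cost(total_tokens: int, small_share: float,
                         small_per_1k: float, large_per_1k: float) -> float:
    """Monthly token charges when `small_share` of traffic uses the smaller model."""
    if not 0.0 <= small_share <= 1.0:
        raise ValueError("small_share must be between 0 and 1")
    thousands = total_tokens / 1000
    return thousands * (small_share * small_per_1k
                        + (1.0 - small_share) * large_per_1k)


MONTHLY_TOKENS = 42_000_000  # assumed volume
SMALL_PER_1K = 0.0005        # hypothetical blended price, smaller model
LARGE_PER_1K = 0.005         # hypothetical blended price, larger model

all_large = blended_monthly_cost(MONTHLY_TOKENS, 0.0, SMALL_PER_1K, LARGE_PER_1K)
mostly_small = blended_monthly_cost(MONTHLY_TOKENS, 0.8, SMALL_PER_1K, LARGE_PER_1K)

print(f"All traffic on the larger model: ${all_large:.2f}")
print(f"80% of traffic on the smaller model: ${mostly_small:.2f}")
```

The absolute numbers are meaningless because the prices are placeholders, but the shape of the result is the point: when the price gap between tiers is large, the share of traffic that can be served by the smaller model tends to dominate the bill.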

In conclusion, understanding Amazon Bedrock pricing requires careful consideration of several factors, including token usage, model selection, and instance costs. By implementing robust monitoring strategies and continuously optimizing your chatbot’s performance, you can effectively manage costs and maximize the value of this powerful AI service. Remember that scalability is a key benefit; as your chatbot’s usage grows, Amazon Bedrock allows you to seamlessly scale up your resources to meet increased demand. This makes it an ideal solution for businesses looking to rapidly deploy and expand their AI-powered applications.
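As one possible starting point for the monitoring mentioned above, token usage can be accumulated per response and priced afterwards. This sketch assumes each response is a dictionary carrying a `usage` entry with `inputTokens` and `outputTokens`, which is the shape the Amazon Bedrock Converse API is understood to return; the `UsageTracker` class, the sample responses, and the prices are hypothetical, and no AWS call is made here.

```python
from dataclasses import dataclass


@dataclass
class UsageTracker:
    """Accumulates token counts across model responses."""
    input_tokens: int = 0
    output_tokens: int = 0
    requests: int = 0

    def record(self, response: dict) -> None:
        """Add one response's token usage; missing fields count as zero."""
        usage = response.get("usage", {})
        self.input_tokens += usage.get("inputTokens", 0)
        self.output_tokens += usage.get("outputTokens", 0)
        self.requests += 1

    def cost(self, input_per_1k: float, output_per_1k: float) -> float:
        """Token charges so far in USD, at the given per-1,000-token prices."""
        return (self.input_tokens / 1000) * input_per_1k \
            + (self.output_tokens / 1000) * output_per_1k


# Stand-in responses (made up); in practice these would come from your model calls.
sample_responses = [
    {"usage": {"inputTokens": 1200, "outputTokens": 200}},
    {"usage": {"inputTokens": 1000, "outputTokens": 300}},
]

tracker = UsageTracker()
for response in sample_responses:
    tracker.record(response)

# Placeholder prices, not real Bedrock rates.
spend = tracker.cost(input_per_1k=0.001, output_per_1k=0.002)
print(f"{tracker.requests} requests, {tracker.input_tokens} in, "
      f"{tracker.output_tokens} out, ${spend:.4f}")
```

Comparing measured averages from a tracker like this against the figures used in your planning estimate is a simple way to spot when prompts, retrieved context, or responses have grown beyond what was budgeted.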

