Kubernetes Events provide crucial insights into cluster operations, but as clusters grow, managing and analyzing these events becomes increasingly challenging. This blog post explores how to build custom event aggregation systems that help engineering teams better understand cluster behavior and troubleshoot issues more effectively.

## The challenge with Kubernetes events

In a Kubernetes cluster, events are generated for various operations – from pod scheduling and container starts to volume mounts and network configurations. While these events are invaluable for debugging and monitoring, several challenges emerge in production environments:

- Volume: Large clusters can generate thousands of events per minute

- Retention: Default event retention is limited to one hour

- Correlation: Related events from different components are not automatically linked

- Classification: Events lack standardized severity or category classifications

- Aggregation: Similar events are not automatically grouped

To learn more about Events in Kubernetes, read the Event API reference.

## Real-world value

Consider a production environment with tens of microservices where users report intermittent transaction failures:

Traditional event aggregation process: Engineers waste hours sifting through thousands of standalone events spread across namespaces. By the time they investigate, the older events have long since been purged, and correlating pod restarts with node-level issues is practically impossible.

With custom event aggregation: The system groups events across resources and immediately surfaces correlation patterns, such as volume mount timeouts that precede pod restarts. The stored event history shows that the same pattern occurred during past record traffic spikes, pointing to a storage scalability issue in minutes rather than hours.

The benefit of this approach is that organizations that implement it commonly cut their troubleshooting time significantly and improve system reliability by detecting patterns early.

## Building an Event aggregation system

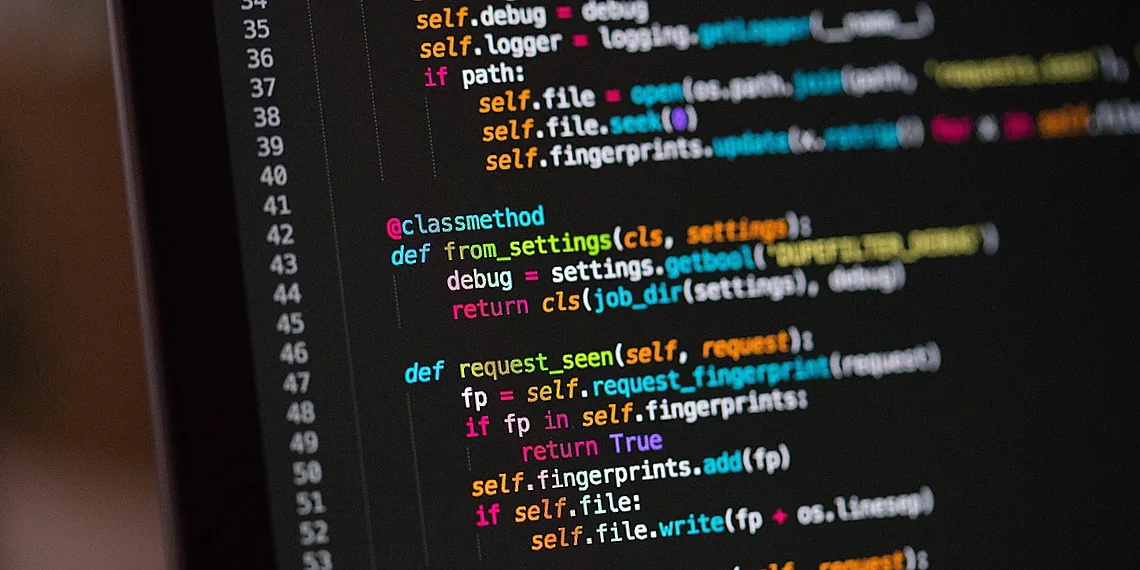

This post explores how to build a custom event aggregation system that addresses these challenges, aligned to Kubernetes best practices. I’ve picked the Go programming language for my example.

### Architecture overview

This event aggregation system consists of three main components:

- Event Watcher: Monitors the Kubernetes API for new events

- Event Processor: Processes, categorizes, and correlates events

- Storage Backend: Stores processed events for longer retention
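
One way to picture how these three components fit together is as stages connected by a channel. The sketch below is a minimal illustration that uses only the standard library, not a definitive design. The `Event` struct is a simplified stand-in for the real `eventsv1.Event` type, and the `Processor` and `Store` interfaces, `reasonProcessor`, `memoryStore`, and `RunPipeline` are hypothetical names introduced for this example. The categorization rules are placeholders.

```go
package main

import (
	"context"
	"fmt"
	"strings"
)

// Event is a simplified, hypothetical stand-in for eventsv1.Event.
type Event struct {
	Namespace string
	Kind      string // kind of the object the event is about, e.g. "Pod"
	Name      string
	Type      string // "Normal" or "Warning"
	Reason    string
}

// ProcessedEvent is an Event enriched by the processor.
type ProcessedEvent struct {
	Event
	Category string
}

// Processor categorizes a raw event (the "Event Processor" component).
type Processor interface {
	Process(e Event) ProcessedEvent
}

// Store persists processed events (the "Storage Backend" component).
type Store interface {
	Save(ctx context.Context, e ProcessedEvent) error
}

// reasonProcessor derives a category from the event reason.
// The rules here are illustrative placeholders only.
type reasonProcessor struct{}

func (reasonProcessor) Process(e Event) ProcessedEvent {
	category := "general"
	switch {
	case strings.Contains(e.Reason, "Mount") || strings.Contains(e.Reason, "Volume"):
		category = "storage"
	case strings.Contains(e.Reason, "Schedul"):
		category = "scheduling"
	}
	return ProcessedEvent{Event: e, Category: category}
}

// memoryStore keeps events in a slice; a real backend would be a database.
type memoryStore struct {
	saved []ProcessedEvent
}

func (s *memoryStore) Save(_ context.Context, e ProcessedEvent) error {
	s.saved = append(s.saved, e)
	return nil
}

// RunPipeline reads events until the channel closes or ctx is cancelled,
// passing each one through the processor and into the store.
func RunPipeline(ctx context.Context, in <-chan Event, p Processor, s Store) error {
	for {
		select {
		case <-ctx.Done():
			return ctx.Err()
		case e, ok := <-in:
			if !ok {
				return nil
			}
			if err := s.Save(ctx, p.Process(e)); err != nil {
				return err
			}
		}
	}
}

func main() {
	// In a real system this channel would be fed by the event watcher.
	in := make(chan Event, 2)
	in <- Event{Namespace: "shop", Kind: "Pod", Name: "cart-0", Type: "Warning", Reason: "FailedMount"}
	in <- Event{Namespace: "shop", Kind: "Pod", Name: "cart-1", Type: "Warning", Reason: "FailedScheduling"}
	close(in)

	store := &memoryStore{}
	if err := RunPipeline(context.Background(), in, reasonProcessor{}, store); err != nil {
		fmt.Println("pipeline error:", err)
		return
	}
	for _, e := range store.saved {
		fmt.Printf("%s/%s %s -> %s\n", e.Namespace, e.Name, e.Reason, e.Category)
	}
}
```

Keeping the processor and the store behind small interfaces means either one can be swapped (for example, an in-memory store during tests and a database in production) without touching the watcher.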

Here’s a sketch for how to implement the event watcher:

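    package main

    import (
        "context"

        eventsv1 "k8s.io/api/events/v1"
        metav1 "k8s.io/apimachinery/pkg/apis/meta/v1"
        "k8s.io/client-go/kubernetes"
        "k8s.io/client-go/rest"
    )

    // EventWatcher watches the Kubernetes API for new events.
    type EventWatcher struct {
        clientset *kubernetes.Clientset
    }

    // NewEventWatcher builds a watcher from a client configuration.
    func NewEventWatcher(config *rest.Config) (*EventWatcher, error) {
        clientset, err := kubernetes.NewForConfig(config)
        if err != nil {
            return nil, err
        }
        return &EventWatcher{clientset: clientset}, nil
    }

    // Watch streams events until ctx is cancelled or the watch ends.
    func (w *EventWatcher) Watch(ctx context.Context) (<-chan *eventsv1.Event, error) {
        events := make(chan *eventsv1.Event)

        // An empty namespace watches events across all namespaces.
        watcher, err := w.clientset.EventsV1().Events("").Watch(ctx, metav1.ListOptions{})
        if err != nil {
            return nil, err
        }

        go func() {
            defer close(events)
            defer watcher.Stop()
            for {
                select {
                case <-ctx.Done():
                    return
                case result, ok := <-watcher.ResultChan():
                    if !ok {
                        return // the API server closed the watch
                    }
                    if e, ok := result.Object.(*eventsv1.Event); ok {
                        select {
                        case events <- e:
                        case <-ctx.Done():
                            return
                        }
                    }
                }
            }
        }()

        return events, nil
    }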
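
Once events arrive on a channel like the one above, the processor can address the aggregation challenge by grouping similar events. The following is a rough, self-contained sketch of one possible grouping strategy, assuming that events sharing a namespace, object kind, and reason count as "similar". That key is an assumption made for illustration, not a rule from the Kubernetes API. The `Event` struct here is again a simplified stand-in for `eventsv1.Event`, and `GroupKey`, `Group`, and `Aggregate` are hypothetical names.

```go
package main

import (
	"fmt"
	"sort"
	"time"
)

// Event is a simplified, hypothetical stand-in for eventsv1.Event.
type Event struct {
	Namespace string
	Kind      string
	Name      string
	Reason    string
	Time      time.Time
}

// GroupKey decides which events are treated as similar (an assumed choice).
type GroupKey struct {
	Namespace string
	Kind      string
	Reason    string
}

// Group summarizes all events that share a GroupKey.
type Group struct {
	Key       GroupKey
	Count     int
	FirstSeen time.Time
	LastSeen  time.Time
	Objects   []string // distinct object names, in order of first appearance
}

// Aggregate groups events by key and returns the groups, largest first.
func Aggregate(events []Event) []Group {
	byKey := make(map[GroupKey]*Group)
	seen := make(map[GroupKey]map[string]bool)
	for _, e := range events {
		k := GroupKey{Namespace: e.Namespace, Kind: e.Kind, Reason: e.Reason}
		g, ok := byKey[k]
		if !ok {
			g = &Group{Key: k, FirstSeen: e.Time, LastSeen: e.Time}
			byKey[k] = g
			seen[k] = make(map[string]bool)
		}
		g.Count++
		if e.Time.Before(g.FirstSeen) {
			g.FirstSeen = e.Time
		}
		if e.Time.After(g.LastSeen) {
			g.LastSeen = e.Time
		}
		if !seen[k][e.Name] {
			seen[k][e.Name] = true
			g.Objects = append(g.Objects, e.Name)
		}
	}

	groups := make([]Group, 0, len(byKey))
	for _, g := range byKey {
		groups = append(groups, *g)
	}
	// Sort by count (descending), then by key fields for a deterministic order.
	sort.Slice(groups, func(i, j int) bool {
		a, b := groups[i], groups[j]
		if a.Count != b.Count {
			return a.Count > b.Count
		}
		if a.Key.Namespace != b.Key.Namespace {
			return a.Key.Namespace < b.Key.Namespace
		}
		if a.Key.Kind != b.Key.Kind {
			return a.Key.Kind < b.Key.Kind
		}
		return a.Key.Reason < b.Key.Reason
	})
	return groups
}

func main() {
	base := time.Date(2025, 1, 1, 12, 0, 0, 0, time.UTC)
	events := []Event{
		{Namespace: "shop", Kind: "Pod", Name: "cart-0", Reason: "FailedMount", Time: base},
		{Namespace: "shop", Kind: "Pod", Name: "cart-1", Reason: "FailedMount", Time: base.Add(30 * time.Second)},
		{Namespace: "shop", Kind: "Pod", Name: "cart-0", Reason: "BackOff", Time: base.Add(time.Minute)},
	}
	for _, g := range Aggregate(events) {
		fmt.Printf("%s in %s/%s: %d event(s) over %s, objects %v\n",
			g.Key.Reason, g.Key.Namespace, g.Key.Kind, g.Count,
			g.LastSeen.Sub(g.FirstSeen), g.Objects)
	}
}
```

A summary like this turns thousands of standalone events into a short list of patterns, and the first-seen and last-seen timestamps give a starting point for correlating one group (such as mount failures) with another (such as restarts).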
