Intelligent document processing (IDP) refers to the automated extraction, classification, and processing of data from various document formats—both structured and unstructured. Within the IDP landscape, key information extraction (KIE) serves as a fundamental component, enabling systems to identify and extract critical data points from documents with minimal human intervention. Organizations across diverse sectors—including financial services, healthcare, legal, and supply chain management—are increasingly adopting IDP solutions to streamline operations, reduce manual data entry, and accelerate business processes. As document volumes grow exponentially, IDP solutions not only automate processing but also enable sophisticated agentic workflows—where AI systems can analyze extracted data and initiate appropriate actions with minimal human intervention. The ability to accurately process invoices, contracts, medical records, and regulatory documents has become not just a competitive advantage but a business necessity. Importantly, developing effective IDP solutions requires not only robust extraction capabilities but also tailored evaluation frameworks that align with specific industry needs and individual organizational use cases.

In this blog post, we demonstrate an end-to-end approach for building and evaluating a KIE solution using Amazon Nova models available through Amazon Bedrock. This end-to-end approach encompasses three critical phases: data readiness (understanding and preparing your documents), solution development (implementing extraction logic with appropriate models), and performance measurement (evaluating accuracy, efficiency, and cost-effectiveness). We illustrate this comprehensive approach using the FATURA dataset—a collection of diverse invoice documents that serves as a representative proxy for real-world enterprise data. By working through this practical example, we show you how to select, implement, and evaluate foundation models for document processing tasks while taking into consideration critical factors such as extraction accuracy, processing speed, and operational costs.

Whether you’re a data scientist exploring generative AI capabilities, a developer implementing document processing pipelines, or a business analyst seeking to understand automation possibilities, this guide provides valuable insights for your use case. By the end of this post, you’ll have a practical understanding of how to use large language models for document extraction tasks, establish meaningful evaluation metrics for your specific use case, and make informed decisions about model selection based on both performance and business considerations. These skills can help your organization move beyond manual document handling toward more efficient, accurate, and scalable document processing solutions.

Dataset

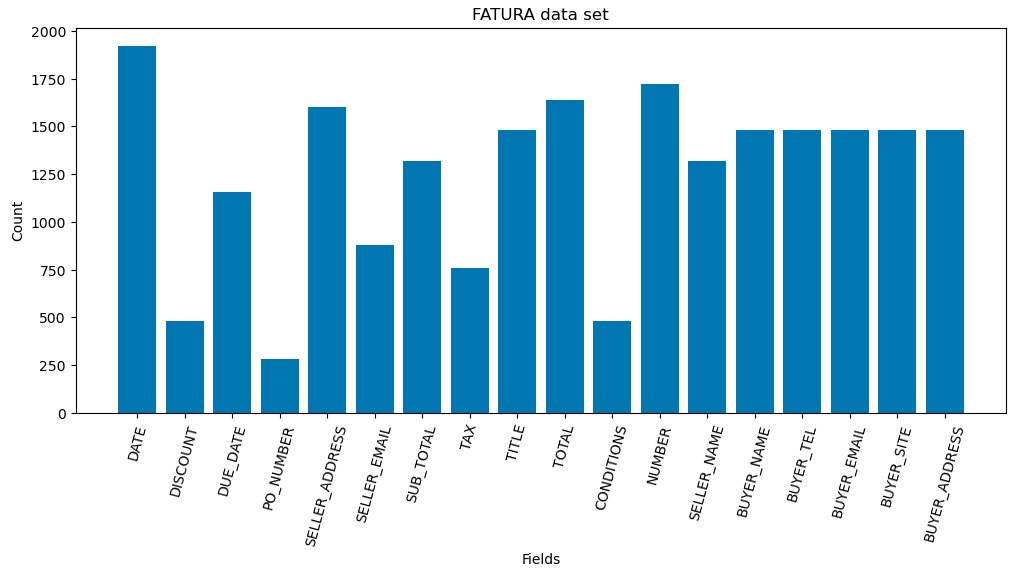

Demonstrating our KIE solution and benchmarking its performance requires a dataset that provides realistic document processing scenarios while offering reliable ground truth for accurate performance measurement. One such dataset is FATURA, which contains 10,000 invoices with 50 distinct layouts (200 invoices per layout). The invoices are all one-page documents stored as JPEG images with annotations of 24 fields per document. High-quality labels are foundational to evaluation tasks, serving as the ground truth against which we measure extraction accuracy. Upon examining the FATURA dataset, we identified several variations in the ground truth labels that required standardization.

Source: Read the original article here.

Discover more tech insights on ByteTrending.

Discover more from ByteTrending

Subscribe to get the latest posts sent to your email.