Imagine a warehouse where robots effortlessly navigate, picking and placing items without human intervention, or a hospital where assistive devices anticipate patient needs before they’re even voiced – this isn’t science fiction anymore; it’s a glimpse of what advanced robotics promises to deliver.

Currently, however, the reality is often far more constrained. Many robots still struggle with basic tasks, frequently bumping into obstacles or misinterpreting their surroundings because they lack a nuanced understanding of the world around them.

A significant hurdle to this seamless integration is how robots perceive and understand objects – in other words, robust and reliable **Robot Object Recognition**.

Researchers at Stanford University have recently unveiled a groundbreaking approach that tackles this challenge head-on, pushing the boundaries of what’s possible and bringing us closer to truly intelligent robotic systems. Their work represents a crucial step towards robots operating with greater autonomy and adaptability in complex environments.

The Current Robotic Challenge

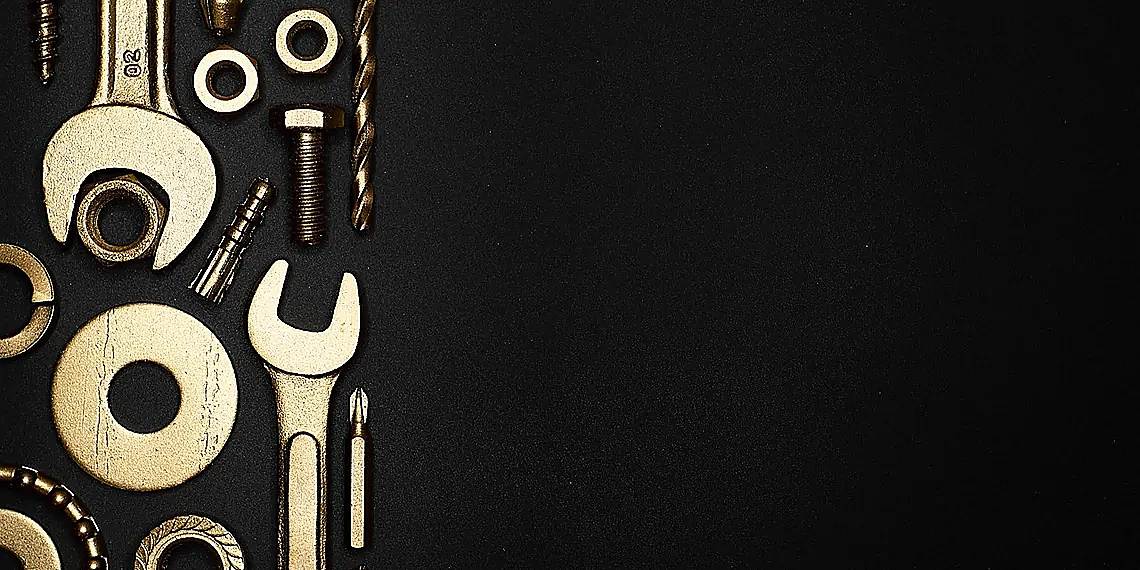

Current robot object recognition capabilities, while impressive in some areas, often fall short of what’s required for genuine autonomy. Many existing systems excel at *identifying* objects: a camera can reliably tell you ‘this is a hammer,’ or ‘that’s a screwdriver.’ However, simply knowing the label isn’t enough. Robots need to understand *what* these tools do – how they function and what tasks they are suited for. This crucial distinction highlights a significant gap in robotic intelligence; current systems largely lack this functional understanding, treating objects as static entities rather than dynamic actors within an environment.

The limitation stems from the fact that most object recognition models are trained on datasets focused primarily on visual identification. They learn to associate specific shapes and colors with pre-defined labels, but rarely receive instruction on how those objects interact with the world or what problems they solve. Imagine a robot tasked with building a birdhouse; it might correctly identify a hammer and nails, but without understanding their *purpose* – hammering nails into wood – it can’t execute the task successfully. This inability to reason about functionality severely restricts robots’ ability to adapt to unexpected situations or perform complex tasks.

This functional gap directly hinders progress towards true robotic autonomy. For robots to operate effectively in unstructured environments, they need to be able to assess a situation, determine which tools are relevant, and then use them appropriately – all without explicit human programming for every conceivable scenario. Reliance on pre-programmed sequences is brittle; a slight deviation from the expected conditions can cause a robot to fail catastrophically. By enabling robots to understand *why* an object exists and how it’s used, we unlock their potential to solve problems creatively and adapt to unforeseen circumstances.

The Stanford research described here represents a significant step towards bridging this gap. Their new computer vision model moves beyond simple identification by learning the functional relationships between objects and actions. This shift in focus – from ‘what is it?’ to ‘what does it do?’ – promises to empower robots with a more nuanced understanding of their surroundings, ultimately paving the way for truly autonomous operation and opening up exciting possibilities across various industries.

Beyond Identification: The Functional Gap

Current robotic vision systems excel at identifying objects. A robot can reliably determine that an item is, for example, a hammer or a screwdriver. However, this ‘what’—the mere classification of the object—is only a small piece of the puzzle when it comes to enabling truly intelligent robotic interaction with the world. The critical gap lies in understanding *functionality*: knowing not just that something *is* a hammer, but also what a hammer *does*, namely hitting nails or shaping metal.

The limitation stems from how most object recognition models are trained. They’re primarily focused on visual features – shape, color, texture – to match against pre-existing labels. This creates a system that’s excellent at pattern matching, but lacks the semantic understanding necessary to infer purpose. Imagine trying to build something using only labeled images; you might know what each tool looks like, but wouldn’t inherently understand how they fit together or their intended use.

This functional disconnect hinders robots from performing complex tasks requiring tool selection and utilization. While a robot might identify a wrench, it won’t automatically grasp that it’s needed to loosen a bolt unless explicitly programmed with that specific instruction. The Stanford research aims to bridge this gap by training models not just on object appearance but also on the actions associated with them – moving beyond simple identification towards genuine robotic intelligence.

Why This Matters for Autonomy

Current robotic systems often excel at identifying objects – a table, a chair, a hammer – but frequently falter when it comes to understanding what those objects *do*. Traditional computer vision focuses on classification: determining ‘what’ is present in an image. This limitation severely restricts robots’ ability to operate autonomously; they can identify a wrench, for example, but not understand that a wrench is used to tighten bolts.

The Stanford research addresses this critical gap by developing a model that moves beyond simple object identification and aims to recognize the *function* of objects. Instead of just labeling something as a ‘hammer,’ the AI understands its purpose – striking or fastening – which informs how a robot might interact with it. This functional understanding is vital for robots operating in dynamic, unstructured environments where pre-programmed actions are insufficient.

True robotic autonomy necessitates this level of comprehension. Robots need to adapt to unforeseen circumstances and solve problems creatively, something impossible without the ability to reason about an object’s potential use. By recognizing functionality, robots can choose appropriate tools, plan complex tasks, and ultimately operate with greater independence from human supervision – a key step towards widespread adoption in fields ranging from manufacturing to healthcare.

Stanford’s Breakthrough Model

Stanford researchers have achieved a significant leap forward in robotics, unveiling a new AI model that fundamentally changes how robots perceive and interact with their environment. Unlike traditional robot object recognition systems, which simply identify *what* an object is (e.g., ‘hammer’, ‘screwdriver’), this breakthrough allows robots to understand *how* those objects are used – their function. This shift from identification to functional understanding opens the door for truly autonomous robots capable of selecting and employing tools with greater precision and adaptability, moving beyond pre-programmed sequences.

The core innovation lies in what the team calls ‘Functional Representation Learning.’ Imagine a child learning about a hammer not just by seeing it, but by observing someone using it to drive nails. The Stanford model mimics this process. It doesn’t just recognize a hammer; it learns its ‘hammering’ function – the action it’s intended for. This functional representation is encoded as part of the object’s understanding, allowing the robot to reason about how to use the tool effectively. For example, the robot could differentiate between a wrench meant for turning bolts and one used as an impromptu doorstop, based on observed usage patterns.

To achieve this, the researchers trained their model using a massive dataset of real-world videos showing humans interacting with various objects. This data wasn’t simply labeled; it was annotated to highlight the *actions* being performed with each object. By analyzing these interactions, the AI learned to associate visual features with specific functionalities. The resulting model then generates a ‘functional representation’ for each object – essentially a summary of its typical uses. While the underlying technical details involve complex neural networks and contrastive learning techniques, the key takeaway is that this approach enables robots to move beyond rote memorization towards more intuitive problem-solving.
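To make the idea of a ‘summary of typical uses’ concrete, here is a deliberately simplified sketch. It assumes the annotations are plain (object, action) pairs and that a functional representation can be approximated as a frequency distribution over observed actions. The data, the function names, and the counting approach are all illustrative assumptions, not the Stanford team’s method, which learns its representations with neural networks.

```python
from collections import Counter, defaultdict

# Hypothetical annotations: (object label, action observed in one video clip).
ANNOTATIONS = [
    ("hammer", "strike"), ("hammer", "strike"), ("hammer", "pry"),
    ("wrench", "turn"), ("wrench", "turn"), ("wrench", "prop_open"),
    ("spoon", "stir"), ("spoon", "scoop"),
]

def functional_representation(annotations):
    """Summarize each object as a normalized distribution over observed actions."""
    counts = defaultdict(Counter)
    for obj, action in annotations:
        counts[obj][action] += 1
    return {
        obj: {action: n / sum(c.values()) for action, n in c.items()}
        for obj, c in counts.items()
    }

def primary_function(representation, obj):
    """Return the most frequently observed action for an object."""
    actions = representation[obj]
    return max(actions, key=actions.get)

rep = functional_representation(ANNOTATIONS)
print(primary_function(rep, "wrench"))
```

In this toy data the wrench is mostly seen turning and only occasionally propping a door open, so its primary function comes out as turning while the rarer use is still recorded.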

The potential implications are vast. From automated manufacturing and construction to assisting individuals with disabilities and exploring hazardous environments, robots equipped with functional object recognition promise a new era of intelligent automation. This Stanford model represents a crucial step toward creating robots that aren’t just programmed to perform tasks, but can intelligently adapt and utilize their environment to achieve goals – truly demonstrating the power of AI in expanding robotic capabilities.

How It Works: Functional Representation Learning

Traditional computer vision often focuses on identifying *what* an object is – a chair, a cup, a hammer. The Stanford team’s new model takes this a step further. It aims to learn how objects are used – their ‘function’. Instead of just recognizing a hammer as a ‘hammer’, the AI learns that it’s something you use for hitting nails or breaking things. This functional understanding is crucial for robots needing to interact with the world in meaningful ways, allowing them to not only see an object but also understand its purpose and potential actions.

The key innovation lies in what researchers call ‘functional representation learning’. Imagine teaching a child about tools; you don’t just show them pictures of hammers – you demonstrate how they’re used. Similarly, this model is trained on videos demonstrating objects being manipulated. It analyzes these sequences to extract patterns and relationships between the object itself and the actions performed upon it. This process allows the AI to build an internal ‘understanding’ of what a tool *does*, rather than merely its visual appearance.

By learning these functional representations, robots can better anticipate how to interact with objects in new situations. For example, if faced with an unfamiliar object, the robot might be able to infer its likely function based on similarities to known tools – perhaps recognizing that a strange lever is used for lifting or moving something. This ability to generalize and reason about tool use represents a significant leap forward in robotics, potentially enabling more adaptable and intelligent autonomous systems.
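One simple way to picture this kind of generalization is a nearest-neighbor lookup: describe each known tool with a feature vector, then give an unfamiliar object the function of the most similar known tool. The sketch below assumes hand-made three-number feature vectors and cosine similarity. Both are stand-ins chosen for illustration; a real system would use learned embeddings.

```python
import math

# Hypothetical features: (has_handle, has_heavy_head, has_long_arm).
KNOWN_TOOLS = {
    "hammer": ([1.0, 1.0, 0.0], "strike"),
    "crowbar": ([1.0, 0.0, 1.0], "lever"),
    "spoon": ([1.0, 0.0, 0.0], "scoop"),
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def infer_function(features):
    """Return (function, nearest known tool) for an unfamiliar object."""
    name = max(KNOWN_TOOLS, key=lambda n: cosine(features, KNOWN_TOOLS[n][0]))
    return KNOWN_TOOLS[name][1], name

# An unfamiliar lever-like object: has a handle and a long arm, almost no heavy head.
print(infer_function([0.9, 0.1, 1.0]))
```

Here the unfamiliar object lands closest to the crowbar, so the sketch guesses that it is used for levering.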

Training Data and Methodology

The Stanford team’s robot object recognition model was trained on a massive dataset called the ‘Object Relations Dataset (ORD).’ This dataset comprises over 20,000 videos depicting humans interacting with various objects in everyday scenarios. Crucially, ORD goes beyond simply identifying *what* an object is; it captures the actions people perform with those objects – for example, using a hammer to hit a nail or a spoon to stir soup. This focus on observed human behavior provides critical context for understanding potential functionality.

A key technique employed during training was contrastive learning. This approach encourages the model to group together images and videos showing similar actions performed with different objects (e.g., using a spatula versus a turner), while pushing apart clips that show different actions. By identifying these functional similarities, even if the visual appearance of the tools varies considerably, the model learns to infer possible uses beyond just recognizing the object’s label. This contrasts with traditional object recognition models, which primarily focus on classification.
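For readers who want a feel for what a contrastive objective looks like, the sketch below implements an InfoNCE-style loss, one common formulation of contrastive learning. The article does not say which loss the Stanford team used, so the choice of InfoNCE, the temperature value, and the two-dimensional toy embeddings are all assumptions for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE loss: small when the anchor is closer to the positive than to the negatives."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))

# Hypothetical clip embeddings: two tools used for flipping, one used for striking.
spatula_flip = [0.9, 0.1]
turner_flip = [0.8, 0.2]
hammer_strike = [0.1, 0.9]

# Pairing the two flipping clips gives a low loss; pairing flip with strike gives a high one.
loss_same_action = info_nce(spatula_flip, turner_flip, [hammer_strike])
loss_diff_action = info_nce(spatula_flip, hammer_strike, [turner_flip])
print(loss_same_action < loss_diff_action)
```

Minimizing a loss of this shape during training is what nudges embeddings of functionally similar clips together, regardless of how different the tools look.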

Furthermore, the researchers incorporated a ‘relational reasoning’ component into their architecture. This allows the model to understand how objects relate to each other within a scene and predict actions based on these relationships – for example, recognizing that a screwdriver is likely used in conjunction with a screw.
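As a rough illustration of pairwise relational reasoning, the sketch below considers every ordered pair of objects in a scene and reports the interactions it knows about, most confident first. The lookup table of relation scores is hypothetical and stands in for what would be a learned component in a real model; nothing here reflects the actual Stanford architecture.

```python
from itertools import permutations

# Hypothetical relation scores: (tool, target) -> (action, confidence).
RELATIONS = {
    ("screwdriver", "screw"): ("drive", 0.90),
    ("hammer", "nail"): ("strike", 0.95),
    ("spoon", "soup"): ("stir", 0.80),
}

def predict_interactions(scene_objects):
    """Check every ordered object pair and return known interactions, best first."""
    found = []
    for tool, target in permutations(scene_objects, 2):
        if (tool, target) in RELATIONS:
            action, score = RELATIONS[(tool, target)]
            found.append((score, tool, action, target))
    found.sort(reverse=True)
    return [(tool, action, target) for _, tool, action, target in found]

print(predict_interactions(["screw", "hammer", "screwdriver", "nail"]))
```

The point of the pairwise loop is that the prediction depends on what else is in the scene: a screwdriver on its own suggests nothing, while a screwdriver next to a screw suggests driving it.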

Impact and Potential Applications

The implications of this new AI-powered robot object recognition model extend far beyond simply identifying a ‘hammer’ versus a ‘screwdriver.’ It’s about understanding *what* those tools do and how they can be utilized – fundamentally changing how robots interact with their environment. This shift opens doors to significantly more adaptable and intelligent robotic systems, moving them away from pre-programmed sequences towards autonomous problem-solving capabilities. Imagine a robot not just picking up an object, but understanding it’s a wrench needed for a specific repair task and then executing that task without explicit human instruction – this is the potential we’re beginning to see realized.

In manufacturing and logistics, the impact could be transformative. Current automated assembly lines rely on rigid programming; improved robot object recognition allows for greater flexibility in handling variations in parts or adapting to unexpected changes on the production floor. Warehouses could become far more efficient with robots capable of identifying and retrieving specific items based on their function rather than just a barcode scan, drastically reducing errors and speeding up fulfillment processes. This adaptability also extends to complex tasks like palletizing and depalletizing, where robots can intelligently arrange goods for optimal space utilization.

Beyond industrial applications, the technology holds immense promise in healthcare and assistive technologies. Surgical robotics could benefit from enhanced object recognition allowing for more precise instrument handling and improved surgical outcomes. For elderly or disabled individuals, assistive robots equipped with this capability could perform tasks like fetching medication, preparing meals, or even providing mobility assistance – offering a greater degree of independence and improving quality of life. The ability to understand the function of objects allows these robots to anticipate needs and proactively offer support.

Finally, consider the crucial role this technology can play in search & rescue operations. Deploying robots into dangerous environments, such as collapsed buildings or disaster zones, is often necessary when human intervention is too risky. With improved object recognition, these robots could autonomously identify hazards (e.g., unstable debris), locate survivors by recognizing essential items like blankets or water bottles, and even clear pathways for rescue teams – significantly increasing the chances of successful recovery efforts in high-stakes situations.

Manufacturing & Logistics

The advancements in robot object recognition, exemplified by recent research like Stanford’s new model, are poised to dramatically reshape manufacturing and logistics operations. Currently, automated assembly lines often rely on rigid programming and pre-defined environments. Improved object recognition allows robots to adapt to variations in parts, unexpected obstructions, and even changes in the production sequence without human intervention – significantly boosting efficiency and reducing downtime.

Warehouse management stands to benefit immensely as well. Robots equipped with sophisticated object recognition can autonomously sort packages, locate items within dense storage areas, and optimize picking routes with far greater accuracy than current systems. This leads to faster order fulfillment, reduced labor costs, and improved inventory control across the entire supply chain. The ability to identify not just *what* an item is but also *how* it should be handled (fragile, heavy, etc.) is a crucial element of this transformation.

Beyond these core applications, enhanced robot object recognition unlocks potential in more specialized industrial settings like automotive assembly and electronics manufacturing. Robots can perform increasingly complex tasks requiring fine motor skills and contextual understanding – for example, identifying the correct screw size or precisely placing components without relying solely on pre-programmed coordinates. This level of adaptability is crucial for addressing the growing demand for customized products and flexible production lines.

Healthcare & Assistance

The advancements in robot object recognition, exemplified by Stanford’s recent work, hold significant promise for revolutionizing healthcare. Surgical robotics, already demonstrating precision and minimally invasive capabilities, could benefit immensely from improved contextual understanding. Imagine a surgical robot not only capable of performing intricate procedures but also able to identify and utilize specialized tools based on the specific tissue or anatomical structure it encounters – all autonomously. This level of adaptability would require robust object recognition combined with an understanding of tool function, which this new AI model helps facilitate.

Beyond surgery, assistive technologies for elderly individuals and those with disabilities stand to gain substantially. Robots equipped with sophisticated object recognition could assist with daily tasks such as medication management (identifying pills), meal preparation (recognizing ingredients and utensils), or even providing mobility assistance by navigating environments while understanding the presence of obstacles and necessary support structures. This moves beyond simple navigation towards proactive, helpful interaction.

Ultimately, enhanced robot object recognition could contribute to safer and more reliable robotic systems in healthcare settings. Reduced reliance on human intervention could translate to decreased risk for patients and improved efficiency for medical professionals. While challenges remain regarding real-world deployment and ethical considerations surrounding autonomous decision-making by robots, the potential benefits are substantial and would represent a major step forward in assistive robotics.

Search & Rescue

The ability of robots to reliably identify objects and understand their function – what’s being termed ‘Robot Object Recognition’ – has significant implications for search and rescue operations. Currently, robots deployed in disaster zones like collapsed buildings or hazardous material sites often rely on pre-programmed instructions and limited sensor data. This restricts their effectiveness; they may struggle to navigate complex environments or adapt to unexpected obstacles.

With advancements like the Stanford model described above, robots could autonomously identify crucial items within a disaster scene – tools for clearing debris, medical supplies for treating victims, or even structural elements indicating stability. Imagine a robot recognizing a crowbar and understanding its purpose for moving rubble, or identifying a first-aid kit and prioritizing its retrieval. This reduces reliance on remote human operators who may face communication challenges or limited visibility.

Furthermore, the technology could facilitate swarming behavior among rescue robots. Each robot, equipped with object recognition capabilities, can contribute to a collective understanding of the environment, sharing information about identified objects and collaboratively planning the most efficient course of action – ultimately accelerating rescue efforts and potentially saving lives in dangerous situations.

Challenges & Future Directions

While the Stanford team’s breakthrough in robot object recognition represents a significant leap forward, current models still face considerable limitations regarding generalization and robustness. The model’s performance is heavily reliant on the training data it receives; encountering unfamiliar conditions (a dimly lit workshop, a cluttered kitchen, or even slightly different tool variants) can drastically reduce accuracy. Occlusion, where objects are partially hidden from view, also poses a challenge. Future research must prioritize expanding datasets to encompass a wider range of lighting conditions, viewpoints, and object appearances. Incorporating techniques like domain adaptation and synthetic data generation could help bridge this gap and enable more reliable performance across diverse real-world scenarios.

The current model excels at identifying tools and their primary functions, but truly intelligent robotic interaction requires understanding far more nuanced relationships between objects and the world. Imagine a robot not only recognizing a hammer but also comprehending its role in striking a nail to secure two pieces of wood – or anticipating that a screwdriver might be needed to loosen a screw. This necessitates moving beyond simple object recognition towards reasoning about causal relationships, affordances (what an object *can* do), and the intentions behind actions. Developing models capable of predicting the consequences of their interactions will be crucial for enabling robots to perform complex tasks safely and effectively.

Looking ahead, research should explore integrating this robot object recognition capability with other robotic skills such as planning and manipulation. Combining visual understanding with the ability to generate action sequences based on those understandings is a vital next step. Furthermore, incorporating human feedback – allowing humans to correct or refine the robot’s interpretations – could accelerate learning and improve performance in complex scenarios where data is scarce. This iterative process of observation, interaction, and refinement will be key to unlocking the full potential of AI-powered robots.

Ultimately, expanding beyond tool recognition opens exciting possibilities for collaborative robotics. Imagine a robot understanding not just *what* someone is trying to do but *how* they are doing it, anticipating their needs, and providing assistance proactively. This requires developing models that can interpret human gestures, facial expressions, and speech – effectively creating robots that are truly intuitive partners in both domestic and industrial settings.

Generalization and Robustness

While the Stanford team’s work represents a significant advancement in robot object recognition, current models still struggle with generalization – their ability to accurately identify objects outside of carefully controlled training environments. A key limitation lies in sensitivity to variations in lighting conditions, partial occlusion (objects being blocked by others), and changes in perspective or viewpoint. For example, a robot trained to recognize a hammer under bright studio lights might fail when encountering the same hammer in dim light or partially hidden behind another tool.

The need for improved robustness is crucial for deploying robots into real-world scenarios. Environments are inherently unpredictable; variations in object appearance due to wear and tear, damage, or even manufacturing differences can easily confuse current models. Furthermore, datasets used for training often lack the diversity necessary to encompass all possible conditions a robot might encounter, leading to performance degradation when faced with novel situations.

Future research should focus on techniques like domain adaptation and few-shot learning to address these challenges. Domain adaptation aims to bridge the gap between training data (often synthetic or curated) and real-world environments. Few-shot learning seeks to enable robots to recognize new objects with only a handful of examples, mimicking human learning capabilities. Incorporating simulation environments that accurately model diverse real-world conditions and actively seeking out edge cases during training will also be essential for building truly robust robot object recognition systems.
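To show how a handful of examples can be enough, here is a minimal sketch in the style of prototype-based few-shot classification: average the few available examples of each new object into a ‘prototype’, then label a new observation by its nearest prototype. The two-number feature vectors and object names are made up, and this is only one of several few-shot approaches, not something attributed to the Stanford work.

```python
import math

def mean_vector(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def build_prototypes(support_set):
    """Average each label's few examples into a single prototype vector."""
    return {label: mean_vector(examples) for label, examples in support_set.items()}

def classify(prototypes, query):
    """Assign the query to the label whose prototype is nearest (Euclidean distance)."""
    return min(prototypes, key=lambda label: math.dist(prototypes[label], query))

# Hypothetical support set: only two or three examples per new object.
support = {
    "mallet": [[0.9, 0.8], [1.0, 0.9], [0.8, 1.0]],
    "pliers": [[0.1, 0.2], [0.2, 0.1]],
}
prototypes = build_prototypes(support)
print(classify(prototypes, [0.85, 0.9]))
```

Because each class is reduced to a single averaged vector, adding a new object only requires a few labeled examples rather than retraining on a large dataset.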

Beyond Tools: Understanding Complex Interactions

While the Stanford team’s work represents a significant leap forward in robot object recognition, it primarily focuses on identifying objects and their basic functions – like recognizing a hammer as something used to hit nails. Current models struggle with understanding more nuanced interactions. For instance, they might identify a cup but not understand that it is being *used* for drinking or is part of a longer sequence involving pouring water and adding sugar. Future research must move beyond static object identification to incorporate contextual awareness and temporal reasoning.

A key area for improvement lies in enabling robots to predict the likely outcome of actions. Instead of simply recognizing a knife, a future system should anticipate that cutting with it will result in smaller pieces. This requires integrating physical simulation capabilities – allowing the robot to virtually ‘test’ potential actions before executing them in the real world. Combining visual input with knowledge about physics and common sense reasoning is crucial for robust interaction understanding.

Ultimately, achieving truly intelligent robotic assistance necessitates incorporating social intelligence. Robots need to understand human intent, recognize non-verbal cues (like pointing or gesturing), and adapt their behavior accordingly. This could involve integrating models of human psychology and developing techniques for learning from demonstration – where robots observe humans performing tasks and learn the underlying strategies and goals.

Stanford’s recent breakthroughs in visual understanding for robotics represent a monumental leap forward, solidifying the trajectory toward truly intelligent and adaptable machines.

The ability to move beyond pre-programmed responses and genuinely interpret the world around them unlocks incredible potential across industries, from logistics and manufacturing to healthcare and exploration.

Imagine robots seamlessly navigating complex environments, not just following instructions but reacting intelligently to unexpected changes – that’s the promise being realized through advancements in areas like Robot Object Recognition.

This isn’t simply about building faster or stronger machines; it’s about imbuing them with a level of cognitive ability that allows for collaboration and problem-solving alongside humans, with the potential to improve both efficiency and safety. The implications may extend beyond what we can currently envision, shaping how we interact with technology in the years to come. We’re entering an era where robots are not just tools but partners, capable of learning and adapting in real time. The research underscores a critical shift – from reactive automation to proactive assistance – that could redefine many facets of our lives. Further refinement and broader implementation of these techniques promise even more transformative outcomes for robotic systems. It’s an exciting moment to witness this evolution unfold, with ongoing developments pushing the boundaries of what’s possible as advanced AI is integrated into robotics applications.

