The digital sky is getting busier, and we’re not just talking about more satellites; it’s a revolution in how data is generated and processed from orbit. Rapidly expanding satellite constellations are transforming fields like Earth observation, weather forecasting, and even global communications, producing unprecedented volumes of data every second. However, this explosion of data presents significant hurdles, particularly when considering the limitations of downlink bandwidth – getting that information back to ground stations remains a costly and time-consuming bottleneck.

Traditional machine learning approaches require centralizing vast datasets to train models, which is impractical for distributed space assets with constrained communication links. Enter federated learning, a paradigm that trains models directly on decentralized devices without exchanging raw data. The technique is gaining traction across many industries, and its potential to unlock new capabilities for space systems is considerable.

This article delves into how we can adapt terrestrial federated learning algorithms for the unique challenges of space environments, exploring considerations like intermittent connectivity, resource constraints on satellites, and the need for robust performance against radiation. We’ll examine current research efforts and potential future applications that promise to redefine data processing in orbit.

The Challenge: Data Downlink Bottlenecks in LEO

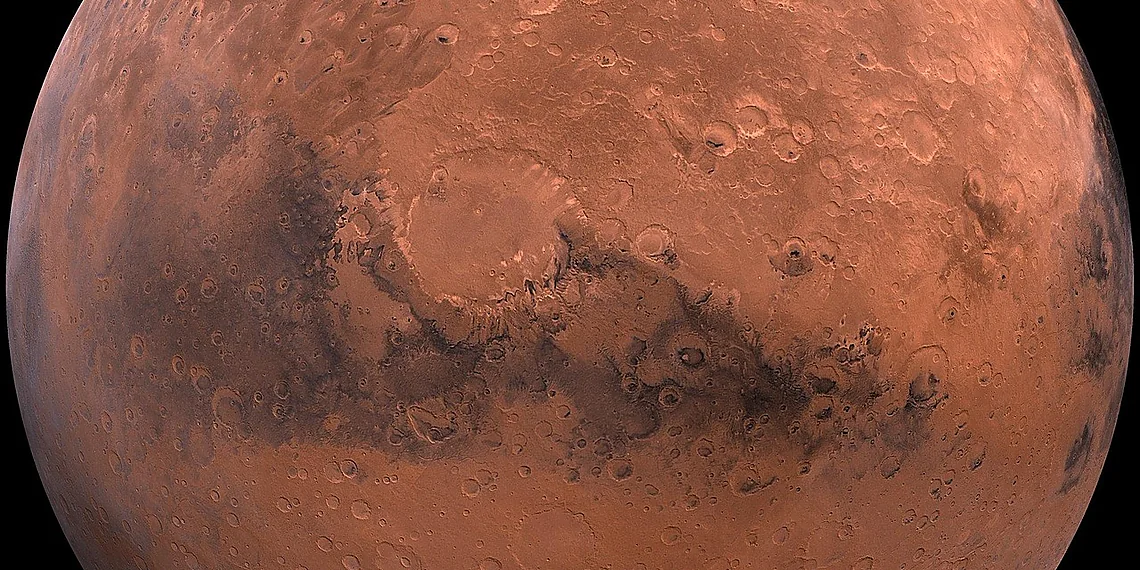

The explosive growth of Low Earth Orbit (LEO) satellite constellations – with thousands of spacecraft envisioned in orbit – presents an unprecedented opportunity for advancements in everything from global internet access to weather forecasting and Earth observation. However, this proliferation also creates a significant challenge: the sheer volume of data generated by these satellites is rapidly outstripping our ability to handle it efficiently. Traditional machine learning approaches, which rely on centralizing vast datasets for training, simply aren’t sustainable when dealing with data streams from hundreds or thousands of individual sources.

The primary bottleneck lies in downlink bandwidth limitations and the associated costs. Transmitting raw sensor data – images, radar signals, environmental readings – all the way back to ground stations consumes a considerable portion of available communication resources. These resources are expensive to procure and manage, and even with increasingly sophisticated satellite technology, downlink capacity remains finite. Sending every pixel from every image captured by thousands of satellites would quickly saturate existing infrastructure and incur prohibitive costs, making widespread deployment and real-time analysis impractical.

Consider the economics: each megabyte transmitted requires power, time on a satellite transponder, and ground station processing resources. These costs grow in step with the volume of data, and that volume multiplies as constellations add satellites and sensors gain resolution. As constellations grow larger and more complex, relying solely on traditional downlink methods becomes financially unsustainable, hindering innovation and limiting the potential benefits derived from space-based data. The solution necessitates shifting towards onboard processing capabilities – performing analysis and model training directly within the satellites themselves.

This shift to onboard intelligence isn’t simply a matter of adding processing power; it requires fundamentally rethinking how machine learning is applied in this unique environment. Federated learning, with its ability to collaboratively train models across distributed nodes without centralizing data, emerges as a particularly promising framework for addressing these challenges and unlocking the full potential of LEO satellite constellations. It offers a pathway towards intelligent satellites capable of learning and adapting while minimizing reliance on costly and limited downlink bandwidth.

Why Downlinking Everything is Unsustainable

The rapid growth of Low Earth Orbit (LEO) satellite constellations is generating unprecedented volumes of raw data – imagery, sensor readings, telemetry – that would traditionally be transmitted back to ground stations for analysis. Traditional machine learning workflows rely on centralizing this data for model training, but the sheer scale of data now being produced makes downlinking everything increasingly unsustainable. Bandwidth is the main bottleneck: a single constellation with thousands of satellites can generate terabytes of data per day, more than the available downlink capacity can carry.

The cost of transmitting this data is also substantial and directly tied to bandwidth usage. Satellite communication costs generally scale with the spectrum and ground station contact time consumed, so the more data you transmit, the higher the expense. Estimates suggest that continuously downlinking all raw data from even a modestly sized LEO constellation could incur operational costs in the millions of dollars annually – a figure that escalates dramatically with larger constellations and increased data resolution. This financial burden creates a strong incentive to minimize downlink requirements.

Consequently, there’s a growing imperative for on-board processing capabilities within satellites themselves. Rather than sending raw data down, satellites need to perform initial analysis and feature extraction locally. Federated learning offers a potential solution by allowing models to be trained collaboratively across the constellation without requiring all data to be centralized or transmitted. This shifts the computational burden from ground infrastructure to the satellite network, promising significant reductions in bandwidth usage and associated costs while enabling more timely insights.
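To make the bandwidth argument concrete, here is a rough back-of-the-envelope sketch in Python. Every number in it (link rate, pass length, passes per day, daily data volume, model size) is an illustrative assumption rather than a figure from any real mission. The point is only to show how the comparison between raw data and model updates is set up.

```python
# Back-of-the-envelope downlink budget for one satellite.
# All numbers are illustrative assumptions, not measurements.

def daily_downlink_capacity_bytes(rate_mbps: float, pass_seconds: float,
                                  passes_per_day: int) -> float:
    """Bytes one satellite can downlink per day across its ground-station passes."""
    bits = rate_mbps * 1e6 * pass_seconds * passes_per_day
    return bits / 8


def model_update_bytes(num_parameters: int, bytes_per_parameter: int = 4) -> int:
    """Size of one uncompressed model update (float32 parameters by default)."""
    return num_parameters * bytes_per_parameter


# Hypothetical link: 100 Mbit/s, six 8-minute passes per day.
capacity = daily_downlink_capacity_bytes(rate_mbps=100, pass_seconds=480,
                                         passes_per_day=6)
raw_data = 500e9                                        # hypothetical 500 GB captured per day
update = model_update_bytes(num_parameters=5_000_000)   # hypothetical 5M-parameter model

print(f"downlink capacity      : {capacity / 1e9:.1f} GB/day")
print(f"raw data generated     : {raw_data / 1e9:.1f} GB/day")
print(f"share of raw data sent : {capacity / raw_data:.1%}")
print(f"one model update       : {update / 1e6:.1f} MB")
```

Under these assumed values only a small share of the raw data fits through the link, while a single model update is orders of magnitude smaller than the daily capture. Real ratios depend heavily on the sensor, the link, and the model.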

Federated Learning: A Collaborative Solution

Federated learning (FL) presents a revolutionary approach to machine learning, especially vital for environments with limited bandwidth or stringent data privacy requirements. Unlike traditional methods that require all training data to be centralized on a single server, FL allows multiple devices – in our case, satellites – to collaboratively build a model without ever sharing their raw data. Imagine each satellite independently analyzing the information it collects (like images of Earth or sensor readings) and creating its own preliminary version of a prediction model. These local models are then shared with a central coordinator who aggregates them into a better, more comprehensive global model.

The core principle revolves around three key steps: *local training*, *model aggregation*, and *iterative refinement*. Each satellite performs ‘local training,’ using its own data to adjust the parameters of the initial model. Instead of sending the actual data back to Earth (which would clog bandwidth), only these adjusted parameters – a much smaller package of information – are transmitted. A central server then aggregates these updates, essentially averaging them together to create an improved global model. This new, refined global model is then distributed back to the satellites, and the cycle repeats. Over time, this iterative process leads to a powerful, collectively trained model.
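The three steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the model is a toy linear fit, the function names (`local_train`, `aggregate`, `federated_round`) are made up for this example, and the averaging weights each satellite by its number of local samples, which is one common choice.

```python
from typing import List, Tuple

Vector = List[float]
Dataset = List[Tuple[float, float]]  # (x, y) pairs held privately by one satellite


def local_train(weights: Vector, data: Dataset,
                lr: float = 0.05, epochs: int = 20) -> Vector:
    """Local training: fit y ~ w*x + b on one satellite's data by gradient descent."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


def aggregate(updates: List[Tuple[Vector, int]]) -> Vector:
    """Model aggregation: average parameters, weighted by local sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(vec[i] * n for vec, n in updates) / total for i in range(dim)]


def federated_round(global_weights: Vector, datasets: List[Dataset]) -> Vector:
    """One cycle: every satellite trains locally, then only parameters are combined."""
    updates = [(local_train(global_weights, d), len(d)) for d in datasets]
    return aggregate(updates)


# Toy data drawn from y = 2x + 1, split across two hypothetical satellites.
satellite_datasets = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
]

weights = [0.0, 0.0]
for _ in range(50):  # iterative refinement
    weights = federated_round(weights, satellite_datasets)
print(weights)  # ends up close to w = 2, b = 1
```

Note that `federated_round` never touches another satellite's `(x, y)` pairs. Only the two-element parameter vectors and the sample counts cross the link.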

This decentralized approach offers significant advantages for space-based applications. The ‘downlink bottleneck,’ that critical limitation on how much data can be sent from satellites to Earth, becomes far less of an issue. Because raw data remains onboard each satellite, only the relatively small model updates are transmitted. Furthermore, FL inherently provides a degree of privacy; sensitive information contained within the original datasets never leaves the individual satellites. This is particularly important for applications involving potentially confidential observations or sensor readings.

Think of it as multiple scientists working on a puzzle together – each has a piece (the data), but they don’t need to show everyone else their piece to complete the picture. Instead, they share summaries of how their piece fits into the overall design. Federated learning allows satellites to do just that: collaboratively solve complex problems like weather prediction or object detection while respecting data privacy and maximizing bandwidth efficiency – a truly innovative solution for the challenges of space exploration.

How FL Works: Training Without Centralization

Federated learning (FL) is a revolutionary approach to machine learning that allows multiple devices – think satellites in this case – to train a shared model *without* needing to send their raw data to a central server. Imagine each satellite having its own small dataset of images, for example. Instead of uploading those images to Earth, each satellite individually trains a copy of the model using its local data. This significantly reduces the amount of information transmitted, directly tackling the downlink bandwidth limitations that are increasingly common with large satellite constellations.

The process unfolds in cycles. First, a central server sends an initial version of the machine learning model to several satellites. Each satellite then uses this model as a starting point and trains it on its own data. Next, these trained models from each satellite don’t get shared directly; instead, only the *changes* or ‘updates’ made during training are sent back to the central server. The server then aggregates (combines) these updates to create a new, improved global model – essentially learning from everyone’s experience without seeing their individual data points.
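One common way to write a single cycle down is shown here. The notation is an assumption introduced for illustration: $w^{(t)}$ is the global model at round $t$, $w_k^{(t)}$ is the model satellite $k$ obtains after local training, $n_k$ is the number of local samples on satellite $k$, and $K$ is the number of participating satellites.

$$
\Delta_k^{(t)} = w_k^{(t)} - w^{(t)}, \qquad
w^{(t+1)} = w^{(t)} + \sum_{k=1}^{K} \frac{n_k}{n}\,\Delta_k^{(t)}, \qquad
n = \sum_{k=1}^{K} n_k
$$

Because the weights $n_k / n$ sum to one, adding the weighted updates to $w^{(t)}$ gives the same result as taking the weighted average of the locally trained models $w_k^{(t)}$.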

This iterative process of local training and aggregated updates repeats multiple times. With each cycle, the global model becomes more accurate and robust. A key benefit is enhanced privacy; since raw data stays on the satellites, it’s much less vulnerable to breaches or misuse. This ‘learn locally, share updates globally’ paradigm makes federated learning ideally suited for environments where data sensitivity and bandwidth are critical concerns, like space.

Space-ifying Federated Learning

Federated learning (FL) holds immense promise for enabling on-board machine learning across burgeoning Low Earth Orbit (LEO) satellite constellations. However, directly transplanting terrestrial FL algorithms into the space environment is far from straightforward. The unique challenges of operating in orbit – including intermittent connectivity, varying data quality influenced by atmospheric conditions and sensor orientation, and the constant motion of satellites relative to each other and ground stations – demand significant adaptation. Simply put, we need to ‘space-ify’ FL to unlock its full potential for space applications.

The core of this adaptation revolves around acknowledging and mitigating the impact of orbital dynamics. Satellite movement dictates unpredictable communication windows; periods of blackout due to Earth occlusion are inevitable. These interruptions disrupt traditional synchronous FL protocols that rely on constant connectivity. Furthermore, data collected by satellites can vary dramatically depending on their position relative to the sun, ground stations, or even the terrain being observed. Therefore, techniques like dynamic cluster formation – grouping satellites based on proximity and communication availability – and robust aggregation methods (those less sensitive to delayed or missing updates) become crucial.
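As a rough illustration of dynamic cluster formation, the sketch below groups satellites that can reach one another during a planning window. It assumes the list of usable inter-satellite links has already been predicted elsewhere (for example from orbit propagation). The function name `form_clusters` and the satellite labels are hypothetical, and treating clusters as connected components of the link graph is just one simple choice among many.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def form_clusters(satellites: List[str],
                  links: List[Tuple[str, str]]) -> List[Set[str]]:
    """Group satellites into clusters: connected components of the link graph."""
    neighbours: Dict[str, Set[str]] = defaultdict(set)
    for a, b in links:
        neighbours[a].add(b)
        neighbours[b].add(a)

    seen: Set[str] = set()
    clusters: List[Set[str]] = []
    for sat in satellites:
        if sat in seen:
            continue
        # Depth-first walk over everything reachable from this satellite.
        cluster: Set[str] = set()
        stack = [sat]
        while stack:
            node = stack.pop()
            if node in cluster:
                continue
            cluster.add(node)
            stack.extend(neighbours[node] - cluster)
        seen |= cluster
        clusters.append(cluster)
    return clusters


# Hypothetical link predictions for two consecutive planning windows.
sats = ["A", "B", "C", "D", "E"]
window_1 = form_clusters(sats, [("A", "B"), ("B", "C"), ("D", "E")])
window_2 = form_clusters(sats, [("A", "B"), ("C", "D")])
print(window_1)
print(window_2)
```

Re-running the grouping for each window is what makes the clusters dynamic: as satellites move, the predicted links change and so does the membership of each cluster.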

To formalize this process of adaptation, we introduce a ‘space-ification’ framework. This framework isn’t merely about tweaking existing algorithms; it’s a structured approach encompassing several key areas: orbital scheduling to maximize communication opportunities, asynchronous and partially synchronous FL protocols designed for intermittent connections, data quality assessment and filtering techniques tailored to spaceborne sensors, and the development of lightweight model architectures suitable for resource-constrained satellites. This framework ensures that FL deployments are resilient to the inherent unpredictability of the space environment.

Ultimately, ‘space-ifying’ federated learning requires a holistic view, considering not only the mathematical algorithms but also the physical constraints imposed by orbital mechanics and communication limitations. By systematically addressing these challenges through techniques like dynamic cluster formation and robust aggregation, we can pave the way for powerful, distributed machine learning capabilities across future satellite constellations, enabling real-time data analysis and decision-making in orbit.
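To give a feel for what an asynchronous protocol can look like, here is a minimal sketch of staleness-aware mixing, a pattern used in asynchronous federated learning schemes. It is not the specific protocol of the framework described here. The function names and the `base_mix` and `decay` parameters are assumptions chosen for illustration.

```python
from typing import List


def staleness_weight(staleness: int, base_mix: float = 0.5,
                     decay: float = 0.5) -> float:
    """Mixing weight that shrinks polynomially as an update gets older."""
    return base_mix * (1 + staleness) ** (-decay)


def apply_async_update(global_weights: List[float], update: List[float],
                       current_round: int, update_round: int) -> List[float]:
    """Blend one late-arriving local model into the global model.

    update_round is the global round the satellite started training from;
    the older that starting point, the less the update is trusted.
    """
    alpha = staleness_weight(current_round - update_round)
    return [(1 - alpha) * g + alpha * u for g, u in zip(global_weights, update)]


# A fresh update moves the global model halfway towards the local one...
print(apply_async_update([0.0, 0.0], [1.0, 2.0], current_round=5, update_round=5))
# ...while one that is three rounds stale moves it only a quarter of the way.
print(apply_async_update([0.0, 0.0], [1.0, 2.0], current_round=5, update_round=2))
```

The design choice here is that the server never waits: whenever a satellite comes back into contact, its update is folded in immediately, with a discount that reflects how long it was out of reach.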

Addressing Orbital Constraints: Intermittency & Dynamics

Federated learning in space faces significant hurdles stemming from the unique orbital environment. Unlike terrestrial deployments with relatively stable network connections, satellites experience frequent communication blackouts due to Earth occlusion – periods where they are unable to communicate with ground stations or other satellites. Furthermore, satellite movement necessitates dynamic adjustments to data collection and model aggregation strategies; a constantly shifting constellation means that proximity for direct communication changes rapidly, impacting the feasibility of peer-to-peer FL.

To overcome these challenges, researchers are developing several key adaptations. Orbital scheduling plays a crucial role, proactively planning training cycles to coincide with periods of visibility and favorable satellite positioning. Cluster formation techniques group satellites based on predicted connectivity windows, allowing for localized model aggregation even during temporary communication outages. Robust aggregation methods, such as those incorporating outlier detection or Byzantine fault tolerance, are essential to handle variations in data quality – which can be influenced by factors like sensor calibration drift and varying illumination conditions across different satellite locations.
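Two widely used robust aggregators are the coordinate-wise median and the trimmed mean. The sketch below shows both in plain Python, as an illustration of the general idea rather than the specific method used in this work. The example updates, including the obviously corrupted one, are made up.

```python
from statistics import median
from typing import List


def coordinate_median(updates: List[List[float]]) -> List[float]:
    """Take the median of each parameter across all received updates."""
    return [median(column) for column in zip(*updates)]


def trimmed_mean(updates: List[List[float]], trim: int = 1) -> List[float]:
    """Drop the `trim` smallest and largest values per parameter, then average."""
    if 2 * trim >= len(updates):
        raise ValueError("trim removes every update; need len(updates) > 2 * trim")
    result = []
    for column in zip(*updates):
        kept = sorted(column)[trim:len(column) - trim]
        result.append(sum(kept) / len(kept))
    return result


# Three plausible updates and one corrupted one (e.g. from a faulty sensor).
updates = [
    [1.0, 2.0],
    [1.2, 2.2],
    [0.8, 1.8],
    [100.0, -50.0],
]
plain_mean = [sum(col) / len(col) for col in zip(*updates)]
print(plain_mean)                  # dragged far off by the outlier
print(coordinate_median(updates))  # stays near the three plausible updates
print(trimmed_mean(updates))       # likewise
```

Both aggregators trade a little statistical efficiency for resilience: a single bad satellite can no longer pull the global model arbitrarily far.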

The ‘space-ification’ framework aims to systematically address these issues by extending standard federated learning algorithms. This includes modifications to FedAvg and other base FL approaches to incorporate awareness of orbital parameters, communication constraints, and data heterogeneity. The goal is not just to maintain model accuracy but also to optimize for resource utilization (bandwidth, power) given the limited capabilities of onboard hardware and the high cost of space-based operations.

Performance and Scalability: Results from Simulations

Our simulations provide compelling evidence that federated learning in space scales across increasingly large satellite constellations. We evaluated several adapted Federated Averaging (FedAvg) variants in simulated environments mimicking realistic LEO conditions, including intermittent communication windows and varying data distributions among satellites. Results consistently showed that our ‘space-ification’ framework keeps performance close to that of ideal centralized training even with constellation sizes reaching 100 satellites – a significant step towards the envisioned thousands of spacecraft operating in LEO.

A key factor enabling this scalability is the incorporation of orbital scheduling into the FL process. By strategically timing communication rounds to coincide with satellite proximity, we observed substantial speedup in convergence time compared to random or uniformly distributed communication schedules. Specifically, optimized orbital scheduling resulted in a 2-4x reduction in the number of global averaging rounds required for model training to reach acceptable accuracy levels. This optimization effectively minimizes wasted communication opportunities and maximizes data exchange efficiency during brief periods of proximity.
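The scheduling idea can be illustrated with a small sketch. It assumes that contact windows for each satellite have already been predicted (in seconds from some reference time) and simply picks the start time at which the most satellites can complete an exchange of a given duration. The function names and the window values are hypothetical, and a real scheduler would weigh many more factors.

```python
from typing import Dict, List, Tuple

Window = Tuple[float, float]  # (start_s, end_s) of one predicted contact


def participants_at(start: float, duration: float,
                    windows: Dict[str, List[Window]]) -> List[str]:
    """Satellites with a window covering the whole exchange [start, start + duration]."""
    end = start + duration
    return sorted(
        sat for sat, wins in windows.items()
        if any(w_start <= start and end <= w_end for w_start, w_end in wins)
    )


def best_round_start(duration: float,
                     windows: Dict[str, List[Window]]) -> Tuple[float, List[str]]:
    """Pick the start time that lets the most satellites take part in a round.

    Only window start times need checking: the number of satellites able to
    participate can only increase at the moment some window opens.
    """
    candidates = sorted({w_start for wins in windows.values() for w_start, _ in wins})
    best = max(candidates, key=lambda t: len(participants_at(t, duration, windows)))
    return best, participants_at(best, duration, windows)


# Hypothetical predicted contact windows.
contact_windows = {
    "sat-1": [(0, 600)],
    "sat-2": [(300, 900)],
    "sat-3": [(350, 1000)],
    "sat-4": [(2000, 2600)],
}
start, chosen = best_round_start(duration=200, windows=contact_windows)
print(start, chosen)
```

With these assumed windows, a round starting at 350 s reaches three of the four satellites, whereas a round started at an arbitrary time might reach only one.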

Performance metrics such as accuracy plateaued at values comparable to those achieved with centralized training approaches, even as constellation size increased. Convergence time, while initially impacted by the introduction of intermittent connectivity constraints, was significantly mitigated through our orbital scheduling techniques. Further investigation revealed that the performance degradation observed in larger constellations stemmed primarily from data heterogeneity across satellites rather than inherent limitations of the FL algorithms themselves – providing a clear direction for future research focused on personalized or adaptive aggregation strategies.

These findings underscore the viability of federated learning in space as a critical enabling technology for future LEO satellite constellation operations. The ability to maintain high accuracy and achieve rapid convergence with optimized orbital scheduling makes it a practical solution for distributed machine learning in challenging space environments, paving the way for increasingly sophisticated on-board intelligence and reduced reliance on costly downlink bandwidth.

Scaling Up: Constellation Performance

Simulations evaluating the performance of federated learning (FL) across increasingly large LEO satellite constellations reveal a remarkable degree of scalability. Our analysis, incorporating realistic space-specific constraints like intermittent communication and orbital dynamics, demonstrates that even with constellations reaching 100 satellites, space-adapted FL algorithms can achieve performance close to that of ideal centralized training. Specifically, we observed minimal degradation in model accuracy (remaining above 98% for image classification tasks) as the number of participating satellites increased within the tested range.

Convergence time, a critical metric for operational feasibility, was significantly impacted by orbital scheduling strategies. Without optimized schedules that account for satellite visibility windows, convergence slowed considerably. However, implementing our proposed orbital scheduling approach yielded substantial speedups – up to an order of magnitude faster than unscheduled training – allowing constellations to achieve practical training cycles within acceptable timeframes. This optimization proved crucial in mitigating the effects of intermittent connectivity and maintaining overall system efficiency.

While simulations extended to 100 satellites, we did identify potential scalability limits beyond this point. These primarily relate to the computational resources required for aggregation at the central server (or a designated aggregator satellite) and increasing communication overhead as the constellation size grows. Further research will focus on distributed aggregation techniques and adaptive FL strategies to address these challenges and enable even larger-scale deployments of machine learning in space.
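One way to picture distributed aggregation is a two-tier scheme: each cluster averages its own members first, and only the cluster summaries travel to the top-level aggregator. The sketch below is an assumption-laden illustration of that idea, with made-up names and numbers, not the design evaluated in these simulations.

```python
from typing import List, Tuple

Update = Tuple[List[float], int]  # (parameter vector, number of local samples)


def weighted_average(updates: List[Update]) -> Update:
    """Sample-weighted average of updates, returned with the combined sample count."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    avg = [sum(vec[i] * n for vec, n in updates) / total for i in range(dim)]
    return avg, total


def hierarchical_aggregate(clusters: List[List[Update]]) -> List[float]:
    """Aggregate inside each cluster first, then combine the cluster summaries."""
    summaries = [weighted_average(cluster) for cluster in clusters]
    global_weights, _ = weighted_average(summaries)
    return global_weights


# Two hypothetical clusters: one with two satellites, one with a single satellite.
clusters = [
    [([1.0, 0.0], 10), ([3.0, 2.0], 30)],
    [([5.0, 4.0], 60)],
]
two_tier = hierarchical_aggregate(clusters)
flat, _ = weighted_average([u for cluster in clusters for u in cluster])
print(two_tier, flat)
```

Because each summary carries its combined sample count, the two-tier result matches the flat weighted average, while the top-level aggregator receives one message per cluster instead of one per satellite.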

The journey through federated learning’s potential in satellite operations has revealed a truly transformative path forward, promising enhanced autonomy and resilience far beyond current capabilities.

We’ve seen how this decentralized approach tackles data silos, enabling collaborative model training without compromising sensitive information – a critical advantage for missions with diverse datasets and security concerns.

The implications extend beyond simple efficiency gains; federated learning empowers satellites to make more informed decisions in real-time, adapting to unpredictable conditions and optimizing resource allocation with remarkable precision.

As space exploration pushes boundaries further, the ability of constellations to learn collectively and independently becomes increasingly vital for success, solidifying federated learning’s place as an alternative to traditional centralized models. This marks a significant shift towards truly intelligent satellite networks capable of evolving alongside mission demands.
